
Hi,

I'm solving a system with a large, dense complex matrix (10k x 10k) in C#, calling zgetrf first to LU-decompose the matrix. If I understand correctly, zgetrf does an in-place LU decomposition, so no extra copies should be produced. But the memory usage doubles (from 1.6 GB to more than 3 GB) while zgetrf is running. Can someone tell me the reason? It would be great if I could find a way to avoid the extra memory usage.
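For scale, the storage of `A` alone matches the starting figure, since each `complex` element is two 8-byte doubles:

$$
n_1^2 \times 16\ \text{bytes} = 10^4 \times 10^4 \times 16\ \text{bytes} = 1.6 \times 10^{9}\ \text{bytes} \approx 1.6\ \text{GB}
$$

`b` (160 KB) and `ipiv` (40 KB) are negligible by comparison, so a jump to more than 3 GB is roughly the size of one extra full copy of `A`.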

Here is the C# code:

```csharp
using System.Runtime.InteropServices;
using System.Security;

class Program
{
    static void Main(string[] args)
    {
        int n1 = 10000;
        int n2 = n1 * n1;
        complex[] A = new complex[n2];
        complex[] b = new complex[n1];

        // Fill A in column-major (Fortran) order and b with test values.
        for (int i = 0; i < n1; i++)
        {
            for (int j = 0; j < n1; j++)
            {
                int ij = j * n1 + i;
                A[ij].r = i + 1;
                A[ij].i = j + 1;
            }
            b[i].r = i + 1;
            b[i].i = i + 2;
        }

        int info = 0;
        int[] ipiv = new int[n1];

        // Factor A in place (zgetrf), then solve A x = b (zgetrs); x overwrites b.
        MKL.LU_decomposition(A, ref n1, ref n2, ipiv, ref info);
        MKL.LU_solve(A, b, ref n1, ref n2, ipiv, ref info);
    }

    [SuppressUnmanagedCodeSecurity]
    public sealed class MKL
    {
        private MKL() { }

        [DllImport("customMKL_Sequential.dll", CallingConvention = CallingConvention.Cdecl, ExactSpelling = true, SetLastError = false)]
        public static extern void LU_decomposition([In, Out] complex[] A, ref int n1, ref int n2, [In, Out] int[] ipiv, ref int info);

        [DllImport("customMKL_Sequential.dll", CallingConvention = CallingConvention.Cdecl, ExactSpelling = true, SetLastError = false)]
        public static extern void LU_solve([In] complex[] A, [In, Out] complex[] b, ref int n1, ref int n2, [In] int[] ipiv, ref int info);
    }

    [StructLayout(LayoutKind.Sequential)] /* Force sequential mapping */
    public struct complex
    {
        [MarshalAs(UnmanagedType.R8, SizeConst = 64)]
        public double r;

        [MarshalAs(UnmanagedType.R8, SizeConst = 64)]
        public double i;
    };
}
```

And the Fortran routines exported from customMKL_Sequential.dll:

```fortran
subroutine LU_decomposition (A, n1, n2, ipiv, info)
    implicit none
    !dec$ attributes dllexport :: LU_decomposition
    !dec$ attributes alias:'LU_decomposition' :: LU_decomposition
    integer, intent(in) :: n1, n2
    integer, intent(inout) :: info
    integer, dimension(n1), intent(inout) :: ipiv
    complex(8), dimension(n2), intent(inout) :: A

    ! In-place LU factorization: the L and U factors overwrite A
    call zgetrf(n1, n1, A, n1, ipiv, info)
end subroutine

subroutine LU_solve (A, b, n1, n2, ipiv, info)
    implicit none
    !dec$ attributes dllexport :: LU_solve
    !dec$ attributes alias:'LU_solve' :: LU_solve
    integer, intent(in) :: n1, n2
    integer, intent(inout) :: info
    integer, dimension(n1), intent(in) :: ipiv
    complex(8), dimension(n2), intent(inout) :: A
    complex(8), dimension(n1), intent(inout) :: b

    ! Solve A x = b using the factors from zgetrf; the solution overwrites b
    call zgetrs('N', n1, 1, A, n1, ipiv, b, n1, info)
end subroutine
```

Thanks a lot in advance.

---

This looks like an interesting topic. I don't know the root cause of the problem, but I did some investigating that may give more clues. I ran two programs and monitored their memory usage:

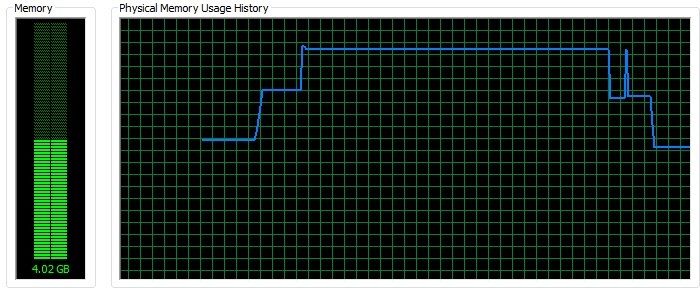

- C# application (exe) calling the Fortran DLL (same code as Yi L.'s). See the memory usage history: the first step is when the matrix and vector are allocated, and the next step starts when the LU decomposition executes.

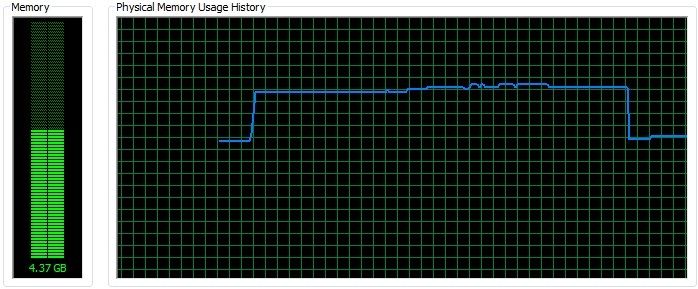

- Fortran application (exe) calling the Fortran DLL (same data as the C# one). See the memory usage history: there is no extra memory usage when the LU decomposition starts executing.

So I suspect the problem comes from passing data across the managed/native boundary. I hope the experts in this forum can give a detailed explanation.
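One way to test the marshalling guess is to take the marshaller out of the picture: pin the arrays yourself and pass raw addresses, so there is nothing left for the runtime to copy. This is a minimal sketch, assuming the same `customMKL_Sequential.dll` exports (`LU_decomposition`, `LU_solve`) as in the question. `MKLPinned` and `Solve` are hypothetical names, and `complex` is declared here without the `MarshalAs` attributes so it is a plain 16-byte struct of two doubles. It is untested against the original setup; if memory still doubles with it, marshalling is unlikely to be the cause.

```csharp
using System;
using System.Runtime.InteropServices;

// Plain layout: two doubles, 16 bytes, matching Fortran complex(8).
[StructLayout(LayoutKind.Sequential)]
public struct complex
{
    public double r;
    public double i;
}

// Hypothetical wrapper class around the DLL from the question.
public static class MKLPinned
{
    // A and b are passed as raw addresses, so the marshaller has nothing to copy.
    [DllImport("customMKL_Sequential.dll", CallingConvention = CallingConvention.Cdecl, ExactSpelling = true)]
    public static extern void LU_decomposition(IntPtr A, ref int n1, ref int n2, [In, Out] int[] ipiv, ref int info);

    [DllImport("customMKL_Sequential.dll", CallingConvention = CallingConvention.Cdecl, ExactSpelling = true)]
    public static extern void LU_solve(IntPtr A, IntPtr b, ref int n1, ref int n2, [In] int[] ipiv, ref int info);

    // Hypothetical helper: pins A and b for the duration of both native calls,
    // factors A in place, solves A x = b (x overwrites b) and returns info.
    public static int Solve(complex[] A, complex[] b, int n1)
    {
        int n2 = n1 * n1;
        int info = 0;
        int[] ipiv = new int[n1];

        GCHandle hA = GCHandle.Alloc(A, GCHandleType.Pinned);
        GCHandle hb = GCHandle.Alloc(b, GCHandleType.Pinned);
        try
        {
            LU_decomposition(hA.AddrOfPinnedObject(), ref n1, ref n2, ipiv, ref info);
            if (info == 0)
            {
                LU_solve(hA.AddrOfPinnedObject(), hb.AddrOfPinnedObject(), ref n1, ref n2, ipiv, ref info);
            }
        }
        finally
        {
            // Unpin so the GC can manage the arrays normally again.
            hb.Free();
            hA.Free();
        }
        return info;
    }
}
```

With `A` and `b` filled as in the question, the two calls become `int info = MKLPinned.Solve(A, b, n1);`. `GCHandle` is used instead of `fixed` so the project does not need `/unsafe`; the arrays stay pinned only while the native code runs.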

---

Hi, Tao Yu,

Thanks for sharing. I checked with the MKL developers: ZGETRF and ZGETRS use very little extra memory, a few dozen megabytes at most. So the issue might be related to marshalling.

Best Regards,

Ying

P.S. I think I have seen this kind of report before, but I can't find it; you may want to search the forum. It is probably unrelated, but you can try adding a call to mkl_thread_free_buffers() in your code and see if anything changes.
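A sketch of the P/Invoke declaration for that call, assuming the DLL you load exports MKL's service routine. In `mkl_service.h`, `mkl_thread_free_buffers` is a macro for `MKL_Thread_Free_Buffers`, so that name is used as the entry point here; a custom-built DLL may need the routine added to its export list. `MKLService` and `ThreadFreeBuffers` are hypothetical names.

```csharp
using System.Runtime.InteropServices;

// Hypothetical wrapper class for the MKL service routine.
public static class MKLService
{
    // Assumes customMKL_Sequential.dll exports MKL_Thread_Free_Buffers,
    // the C symbol behind the mkl_thread_free_buffers macro.
    [DllImport("customMKL_Sequential.dll", EntryPoint = "MKL_Thread_Free_Buffers",
               CallingConvention = CallingConvention.Cdecl, ExactSpelling = true)]
    public static extern void ThreadFreeBuffers();
}
```

Call `MKLService.ThreadFreeBuffers();` after `LU_solve` returns and compare the memory usage history with the earlier run.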
