Hello,

I'm trying to run R-benchmark-25.R with automatic offload (AO) to the Xeon Phi, but AO is not working.

I have compiled my R library as explained here and set the environment variables (MIC_ENABLE=1 and different values for MKL_HOST_WORKDIVISION, MKL_MIC_0_WORKDIVISION, and MKL_MIC_1_WORKDIVISION).
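For reference, an AO environment like the one described above can be sketched in a POSIX shell as follows. This assumes the variable names from the MKL Automatic Offload documentation, where the on/off switch is spelled `MKL_MIC_ENABLE`; the work-division fractions are illustrative values only, not tuning advice.

```shell
# Sketch of an MKL Automatic Offload (AO) environment for one host and two cards.
# Assumption: the fractions are illustrative; each is the share of the work
# MKL should assign to that target (0.0 to 1.0).
export MKL_MIC_ENABLE=1              # turn AO on
export MKL_HOST_WORKDIVISION=0.2     # share kept on the host CPU
export MKL_MIC_0_WORKDIVISION=0.4    # share for coprocessor mic0
export MKL_MIC_1_WORKDIVISION=0.4    # share for coprocessor mic1

# List what a child process (for example R) will inherit.
env | grep '^MKL_' | sort
```

Because the variables are exported, they must be set in the same shell session that later launches R.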

While running the benchmark, I checked with micsmc that no code is being offloaded to either of the MIC cards. I don't think it is a system configuration problem, because the sgemm.c example shipped with the compiler runs fine and offloads part of the work.

I've also tried and checked the following:

- Forcing all work to run on the MICs (by setting the work-division variables).

- Increasing the problem sizes in the benchmark.

- Confirming that the executable is linked to the MKL libraries.
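One way to check the last point, sketched below, is to list the dynamic dependencies of R's real executable with `ldd`. This assumes a Linux host with R on the PATH; `uses_mkl` is a hypothetical helper name, and `$(R RHOME)/bin/exec/R` is where R normally keeps its binary (the `R` command on the PATH is a wrapper script).

```shell
# Hypothetical helper: succeeds if the ldd output on stdin lists an MKL library.
uses_mkl() {
  grep -q 'libmkl_'
}

# Assumption: Linux host with R on the PATH. ldd also lists transitive
# dependencies, so MKL pulled in through libR.so is reported as well.
if command -v R >/dev/null 2>&1; then
  r_exec="$(R RHOME)/bin/exec/R"
  if ldd "$r_exec" | uses_mkl; then
    echo "R is linked against MKL"
  else
    echo "R does not appear to be linked against MKL"
  fi
fi
```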

The software and hardware versions I'm using:

- Intel composer_xe_2013.3.163

- MPSS 3.4.1

- CentOS 6.4

- two Xeon Phi 5110P

- R 3.1.2

Thank you very much for your help,

Sabela Ramos.

---

Sabela, I requested some assistance from the MKL team regarding your issue.

---

Sabela,

- AO is available only for a fairly narrow set of MKL functions (such as Level 3 BLAS and the LU, QR, and Cholesky factorization routines).

- You should also know that AO does not kick in for "small" problem sizes. Please refer to this article for more information:

--Gennady

---

One more tip that is relevant here: to get detailed information about the offload process, I usually set the OFFLOAD_REPORT environment variable:

export OFFLOAD_REPORT=1 (or 2, or 3; higher levels report more detail)

You can find details on how it works in the Intel compiler documentation.
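A small sketch of that workflow, assuming a POSIX shell: the hypothetical helper below runs a single command with `OFFLOAD_REPORT` set to one of the levels mentioned above, so the setting applies to that run only rather than to the whole session.

```shell
# Hypothetical helper: run a command with OFFLOAD_REPORT set to a given level.
# Levels 1 to 3 are the ones mentioned above; higher means more detail.
with_offload_report() {
  level=$1
  shift
  case "$level" in
    1|2|3)
      # The variable is placed only in the environment of this one command.
      OFFLOAD_REPORT=$level "$@"
      ;;
    *)
      echo "OFFLOAD_REPORT level must be 1, 2 or 3, got: $level" >&2
      return 2
      ;;
  esac
}

# Example (gemm.R is an example script name):
# with_offload_report 2 R -q -f gemm.R
```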

---

Hi, thank you for your answers.

I had already tried setting OFFLOAD_REPORT to 1, 2, and 3, but since nothing was offloaded, it did not report anything.

Regarding which functions support AO and the size thresholds: I used the sizes indicated here http://r.789695.n4.nabble.com/Building-R-for-better-performance-td4686227.html , and the same benchmark was used in this paper http://ieeexplore.ieee.org/xpls/abs_all.jsp?arnumber=6691695&tag=1 to assess R performance with one Xeon Phi. I was trying to replicate those results, but none of the function calls in the benchmark triggers AO.

Thank you very much again,

Sabela.

---

Hi,

I finally managed to make it work, but I had to increase the sizes well beyond the minimum to see the peaks in the micsmc tool, because OFFLOAD_REPORT, even when set to 3, does not show anything. When I use manual offloading, OFFLOAD_REPORT does work. Do you know what might be happening?

All the best and thank you very much,

Sabela.

---

Same issue here. I can't get automatic offload to work with Revolution R and MKL, even for operations on a 32000x32000 matrix of floating-point numbers.

---

I was able to use automatic offload in R 3.2.3. I found some minor discrepancies with respect to the recipe in the article; perhaps this is the cause of the problem? My experience is summarized below.

In the current version of MKL (11.3.1), the compilation flags differ slightly from those in the article cited by the OP. I was able to figure out the correct flags using the MKL link line advisor.

To build R, I used the following configure command arguments:

    ./configure --with-blas="-L/opt/intel/mkl/lib/intel64 -lmkl_intel_lp64 -lmkl_core -lmkl_intel_thread -lpthread -lm" \
        --with-lapack \
        CC=icc CFLAGS="-O2 -qopenmp -I/opt/intel/mkl/include" \
        CXX=icpc CXXFLAGS="-O2 -qopenmp -I/opt/intel/mkl/include" \
        F77=ifort FFLAGS="-O2 -qopenmp -I/opt/intel/mkl/include" \
        FC=ifort FCFLAGS="-O2 -qopenmp -I/opt/intel/mkl/include" \
        --prefix=/opt/R

After that, I ran "make" and "make install" (the latter as root) and added /opt/R/lib64 to my LD_LIBRARY_PATH and /opt/R/bin to my PATH.
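The same build steps can be collected into a small script. This is a sketch that only restates the commands above; it assumes MKL is installed under `/opt/intel/mkl` (overridable through `MKLROOT`), that `icc`, `icpc`, and `ifort` are on the PATH, and that it is run from the top of the R source tree. The configure and make steps are skipped when no `./configure` script is present.

```shell
# Sketch of the R + MKL build described above.
# Assumptions: MKL under /opt/intel/mkl unless MKLROOT says otherwise,
# Intel compilers on the PATH, current directory is the R source tree.
MKLROOT=${MKLROOT:-/opt/intel/mkl}
PREFIX=/opt/R

# Link line from the post (obtained with the MKL link line advisor).
MKL_LIBS="-L$MKLROOT/lib/intel64 -lmkl_intel_lp64 -lmkl_core -lmkl_intel_thread -lpthread -lm"
OPT_FLAGS="-O2 -qopenmp -I$MKLROOT/include"

if [ -x ./configure ]; then
  ./configure --with-blas="$MKL_LIBS" --with-lapack \
    CC=icc CFLAGS="$OPT_FLAGS" \
    CXX=icpc CXXFLAGS="$OPT_FLAGS" \
    F77=ifort FFLAGS="$OPT_FLAGS" \
    FC=ifort FCFLAGS="$OPT_FLAGS" \
    --prefix="$PREFIX" &&
  make &&
  echo "Build finished; now run 'make install' as root."
fi

# Environment used afterwards, as in the post.
export PATH="$PREFIX/bin:$PATH"
export LD_LIBRARY_PATH="$PREFIX/lib64${LD_LIBRARY_PATH:+:$LD_LIBRARY_PATH}"
```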

Here is the R program "gemm.R" that I used to test the AO functionality:

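    require(Matrix)

    # Redirect cat() output to output.txt
    sink("output.txt")

    N <- 16000
    cat("Initialization...\n")
    a <- matrix(runif(N*N), ncol=N, nrow=N);
    b <- matrix(runif(N*N), ncol=N, nrow=N);

    cat("Matrix-matrix multiplication of size ", N, "x", N, ":\n")
    for (i in 1:5) {
      # %*% calls the BLAS routine dgemm, which MKL AO can send to the
      # coprocessors when the matrices are large enough
      dt=system.time( c <- a %*% b )
      # A dense N x N product costs about 2*N^3 floating-point operations;
      # dt[3] is the elapsed (wall-clock) time in seconds
      gflops = 2*N*N*N*1e-9/dt[3]
      cat("Trial: ", i, ", time: ", dt[3], " sec, performance: ", gflops, " GFLOP/s\n")
    }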

First, I ran it on the CPU with some performance tuning tweaks:

    [andrey@cfx R-test]$ export KMP_AFFINITY=compact,1
    [andrey@cfx R-test]$ R -q -f gemm.R

And the result was:

    [avladim@alma-ata R-test]$ cat output.txt
    Initialization...
    Matrix-matrix multiplication of size 16000 x 16000 :
    Trial: 1 , time: 35.041 sec, performance: 233.7833 GFLOP/s
    Trial: 2 , time: 35.135 sec, performance: 233.1578 GFLOP/s
    Trial: 3 , time: 34.959 sec, performance: 234.3316 GFLOP/s
    Trial: 4 , time: 34.301 sec, performance: 238.8269 GFLOP/s
    Trial: 5 , time: 34.297 sec, performance: 238.8547 GFLOP/s

Second, I set up automatic offload and some tuning:

    [andrey@cfx R-test]$ export MKL_MIC_ENABLE=1
    [andrey@cfx R-test]$ export MIC_KMP_AFFINITY=compact
    [andrey@cfx R-test]$ R -q -f gemm.R

This time, the output was as below:

    [andrey@cfx R-test]$ cat output.txt
    Initialization...
    Matrix-matrix multiplication of size 16000 x 16000 :
    Trial: 1 , time: 11.728 sec, performance: 698.4993 GFLOP/s
    Trial: 2 , time: 7.678 sec, performance: 1066.945 GFLOP/s
    Trial: 3 , time: 7.802 sec, performance: 1049.987 GFLOP/s
    Trial: 4 , time: 7.715 sec, performance: 1061.828 GFLOP/s
    Trial: 5 , time: 7.821 sec, performance: 1047.436 GFLOP/s

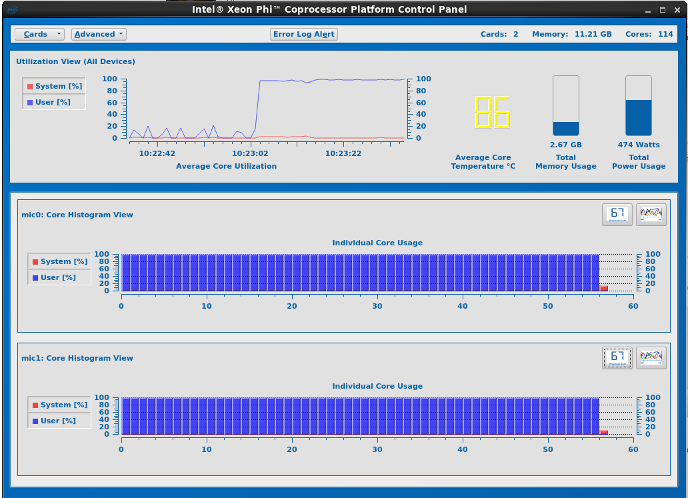

and in the micsmc tool, I could see activity on both of my Xeon Phi cards:

For some reason, the performance I got with R (about 1050 to 1070 GFLOP/s after the first trial) is roughly 20 to 25% lower than that of an equivalent C++ program with the same matrix size, which reached 1360 GFLOP/s. Possibly bad alignment in R?
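As a check on the numbers above, the GFLOP/s value printed by gemm.R comes from the usual operation count for a dense matrix product: each of the $N^2$ entries of $C = AB$ takes $N$ multiplications and (rounding up) $N$ additions. Taking trial 2 of each run:

$$
\text{GFLOP/s} = \frac{2N^3 \times 10^{-9}}{t}, \qquad N = 16000 \;\Rightarrow\; 2N^3 \times 10^{-9} = 8192\ \text{GFLOP}
$$

$$
t_{\text{CPU}} = 35.135\ \text{s} \Rightarrow \frac{8192}{35.135} \approx 233.16\ \text{GFLOP/s}, \qquad
t_{\text{AO}} = 7.678\ \text{s} \Rightarrow \frac{8192}{7.678} \approx 1066.94\ \text{GFLOP/s}
$$

So the AO run is about $1066.94 / 233.16 \approx 4.58$ times faster than the CPU-only run, and reaches about $1066.94 / 1360 \approx 0.78$ of the C++ figure.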

I have verified that AO kicks in for matrix sizes over 1280, as per the article cited above.

My system has a Xeon E5-2630 v2 CPU with hyper-threading enabled and two 3120P Xeon Phis. I am running CentOS 6.5 with MPSS 3.4.2 and Intel Parallel Studio XE 2016 update 3.

Shameless plug: I will be demonstrating this workload and some other tuning aspects of MKL in this webinar next week: http://colfaxresearch.com/hot-1512/#3

---

Andrey,

Thanks for the info, but your link to the webinar returns a 404 error.

Can you please update it? Thanks.

Best,

CB

---

Hello there

I have set up my Xeon Phi 3120A on Windows 10 Pro with MPSS 3.8.4 and Parallel Studio XE 2017 (Initial Release). I chose this Parallel Studio XE version because it was the last one to support the x100 series. I have installed the MKL version that is packaged with Parallel Studio XE 2017 (Initial Release).

What I have done / set up:

After setting up MPSS 3.8.4 and following the steps such as flashing and pinging, I checked that micctrl -s shows “mic0 ready” (with a Linux image containing the appropriate KNC name), miccheck reports "pass" for every test, and micinfo gives me a reading for all the key stats that the coprocessor provides.

So the coprocessor certainly appears to be installed and recognised by my computer. I can also see that mic0 is up and running in the micsmc GUI.

I have then set up my environment variables to enable automatic offload, namely, MKL_MIC_ENABLE=1, OFFLOAD_DEVICES= 0, MKL_MIC_MAX_MEMORY= 2GB, MIC_ENV_PREFIX= MIC, MIC_OMP_NUM_THREADS= 228, MIC_KMP_AFFINITY= balanced.

The Problem

When I run some simple code in R-3.4.3 (copied below, designed specifically for automatic offload), it keeps running the code on my host computer rather than on the Xeon Phi. Consistent with this, I cannot see any activity on the Xeon Phi when I look at the micsmc GUI.

The R code (copied from Andrey's post above):

    require(Matrix)
    sink("output.txt")
    N <- 16000
    cat("Initialization...\n")
    a <- matrix(runif(N*N), ncol=N, nrow=N);
    b <- matrix(runif(N*N), ncol=N, nrow=N);
    cat("Matrix-matrix multiplication of size ", N, "x", N, ":\n")
    for (i in 1:5) {
      dt=system.time( c <- a %*% b )
      gflops = 2*N*N*N*1e-9/dt[3]
      cat("Trial: ", i, ", time: ", dt[3], " sec, performance: ", gflops, " GFLOP/s\n")
    }

Other steps I have tried:

I then set the MKL_MIC_DISABLE_HOST_FALLBACK=1 environment variable and, as expected, R terminated when I ran the above code.

According to https://software.intel.com/sites/default/files/11MIC42_How_to_Use_MKL_Automatic_Offload_0.pdf, if MKL_MIC_DISABLE_HOST_FALLBACK is set and an offload is attempted but fails (because the “offload runtime cannot find a coprocessor or cannot initialize it properly”), the program is terminated. This matches what I see: R terminates completely. For completeness, the same problem occurs with R-3.5.1, Microsoft R Open 3.5.0, and R-3.2.1.
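The fallback test described above can be wrapped in a small diagnostic. This is a sketch that assumes a POSIX shell; `probe_ao` is a hypothetical helper that runs a command with AO enabled and host fallback disabled, then reports the exit status. A non-zero status only hints at an AO initialisation failure, since the command can fail for other reasons too.

```shell
# Hypothetical helper: run a command with MKL AO enabled and host fallback
# disabled, so a failed offload ends the program instead of silently
# running on the host.
probe_ao() {
  MKL_MIC_ENABLE=1 MKL_MIC_DISABLE_HOST_FALLBACK=1 "$@"
  status=$?
  if [ "$status" -eq 0 ]; then
    echo "command finished normally (exit status 0)"
  else
    echo "command failed (exit status $status); AO may not have initialised"
  fi
  return "$status"
}

# Example (gemm.R as in this thread):
# probe_ao R -q -f gemm.R
```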

So my questions are:

- What am I missing to make the R code run on the Xeon Phi? Can you please advise me on what I need to do to make this work?

- (Related to the first question) Is there a way to check whether the MKL offload runtime can see the Xeon Phi, whether it is set up correctly, or what problem (if any) MKL is having initialising it?

I will sincerely appreciate your help; I believe I am missing a fundamental/simple step, and I have been tearing my hair out trying to make this work.

Many thanks in advance,

Keyur
