
Hi All,

I have the 7210 variant of Xeon Phi. If I choose Quadrant (or Hemisphere; I'm not sure what that means as a cluster mode) in the BIOS, I get the following NUMA node layout, which I think belongs to All-2-All.

Does that mean the 7210 version of Xeon Phi doesn't support Quadrant mode and is switching to the default All-2-All mode?

numactl -H

```
available: 2 nodes (0-1)
node 0 cpus: 0 1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 23 24 25 26 27 28 29 30 31 32 33 34 35 36 37 38 39 40 41 42 43 44 45 46 47 48 49 50 51 52 53 54 55 56 57 58 59 60 61 62 63 64 65 66 67 68 69 70 71 72 73 74 75 76 77 78 79 80 81 82 83 84 85 86 87 88 89 90 91 92 93 94 95 96 97 98 99 100 101 102 103 104 105 106 107 108 109 110 111 112 113 114 115 116 117 118 119 120 121 122 123 124 125 126 127 128 129 130 131 132 133 134 135 136 137 138 139 140 141 142 143 144 145 146 147 148 149 150 151 152 153 154 155 156 157 158 159 160 161 162 163 164 165 166 167 168 169 170 171 172 173 174 175 176 177 178 179 180 181 182 183 184 185 186 187 188 189 190 191 192 193 194 195 196 197 198 199 200 201 202 203 204 205 206 207 208 209 210 211 212 213 214 215 216 217 218 219 220 221 222 223 224 225 226 227 228 229 230 231 232 233 234 235 236 237 238 239 240 241 242 243 244 245 246 247 248 249 250 251 252 253 254 255
node 0 size: 98178 MB
node 0 free: 94617 MB
node 1 cpus:
node 1 size: 16384 MB
node 1 free: 15928 MB
node distances:
node   0   1
  0:  10  31
  1:  31  10
```

lscpu

```
Architecture: x86_64
CPU op-mode(s): 32-bit, 64-bit
Byte Order: Little Endian
CPU(s): 256
On-line CPU(s) list: 0-255
Thread(s) per core: 4
Core(s) per socket: 64
Socket(s): 1
NUMA node(s): 2
Vendor ID: GenuineIntel
CPU family: 6
Model: 87
Model name: Intel(R) Xeon Phi(TM) CPU 7210 @ 1.30GHz
Stepping: 1
CPU MHz: 1224.792
BogoMIPS: 2599.90
Virtualization: VT-x
L1d cache: 32K
L1i cache: 32K
L2 cache: 1024K
NUMA node0 CPU(s): 0-255
NUMA node1 CPU(s):
```

Thanks.


---

Hi,

Read up on this at, e.g., https://colfaxresearch.com/knl-numa/

In Quadrant/Hemisphere mode, the KNL is seen as one NUMA node, but the caches are split into 4 (or 2) parts; in SNC-4/SNC-2 mode, the CPU is presented as 4 (or 2) separate NUMA nodes.

You'd need to run some cache hit/miss benchmarking tool to find out if the CPU is in all2all, quadrant or hemisphere mode.

---

Hi JJK,

Thank you. By cache splitting, do you mean the L2 caches, or MCDRAM when it is used as cache (or in hybrid mode)?

Thanks.

---

The L2 caches are not affected; I meant the MCDRAM memory which is split into two parts (normally 50/50), so that 50% of the 16 GB of MCDRAM is used as cache, and the other 8 GB is available as (hot swappable) memory. The latter is present in the form of a "memory only numa node".

---

>>other 8 GB is available as (hot swappable) memory

It is a distinct memory node. It cannot be hot swapped as it is inside the CPU package.

Many years ago you could add memory as a NUMA memory node via a bus on the system. Some of these configurations were indeed hot swappable, while others were not.

Jim Dempsey

---

The "hot swappable" attribute is used here to prevent the kernel from using MCDRAM. An example of this use is in the document "Intel Xeon Phi Processor User's Guide" (Revision 2.2, May 2017), which provides instructions for mapping all 16 GiB of MCDRAM using sixteen 1 GiB pages.
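As a rough illustration of the bookkeeping involved (not the procedure from the User's Guide, which is not reproduced here), the sketch below computes how many 1 GiB pages cover the MCDRAM and which Linux per-node sysfs counter would be used to reserve them. It assumes the MCDRAM is NUMA node 1, as in the `numactl -H` output at the top of this thread; the helper names are hypothetical. Reserving 1 GiB pages after boot can also fail if the node's memory is already fragmented.

```python
from pathlib import Path

GIB = 1 << 30


def pages_needed(region_bytes: int, page_bytes: int = GIB) -> int:
    """Number of huge pages needed to cover a region, rounded up."""
    return -(-region_bytes // page_bytes)


def hugepage_sysfs_path(node: int, page_kb: int = 1 << 20) -> Path:
    """Per-node huge page count file exposed by the Linux kernel (1 GiB pages by default)."""
    return Path(f"/sys/devices/system/node/node{node}/hugepages/"
                f"hugepages-{page_kb}kB/nr_hugepages")


def reserve_hugepages(node: int, count: int, apply: bool = False) -> Path:
    """Return the sysfs file for `node`; write `count` to it only if apply=True (needs root)."""
    path = hugepage_sysfs_path(node)
    if apply:
        path.write_text(f"{count}\n")
    return path
```

On a root shell, `reserve_hugepages(1, pages_needed(16 * GIB), apply=True)` would request 16 pages on node 1; keeping `apply=False` as the default means merely importing or testing the helpers never touches sysfs.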

---

Hi JJK,

JJK wrote:

> The L2 caches are not affected; I meant the MCDRAM memory which is split into two parts (normally 50/50), so that 50% of the 16 GB of MCDRAM is used as cache, and the other 8 GB is available as (hot swappable) memory. The latter is present in the form of a "memory only numa node".

Is this true for flat memory mode combined with quadrant cluster mode? In flat mode I would expect MCDRAM to be memory, not cache. Does quadrant mode override this behaviour?

Thanks.

---

>>The "hot swappable" attribute is used here to prevent the kernel from using MCDRAM.

True, but it is a misnomer to use that term. Part of the confusion (issue) was Intel's decision to declare the MCDRAM RAM (non-cached part) as a closer NUMA node than the CPU's attached/local memory, combined with the O/S defaulting to the closer memory rather than the attached/local memory.

I think that this is an error (not an effective choice) in the O/S design. Let's assume that at some point in the future a CPU provides a reasonable amount of very fast static RAM, but much less than system memory (10%, 5%, ???).

Would the average HPC user prefer to place the O/S in there, or to leave it for the applications to use?

The best choice would be to make it selectable, via the BIOS and/or an O/S configuration file.

Jim Dempsey

---

Hi All,

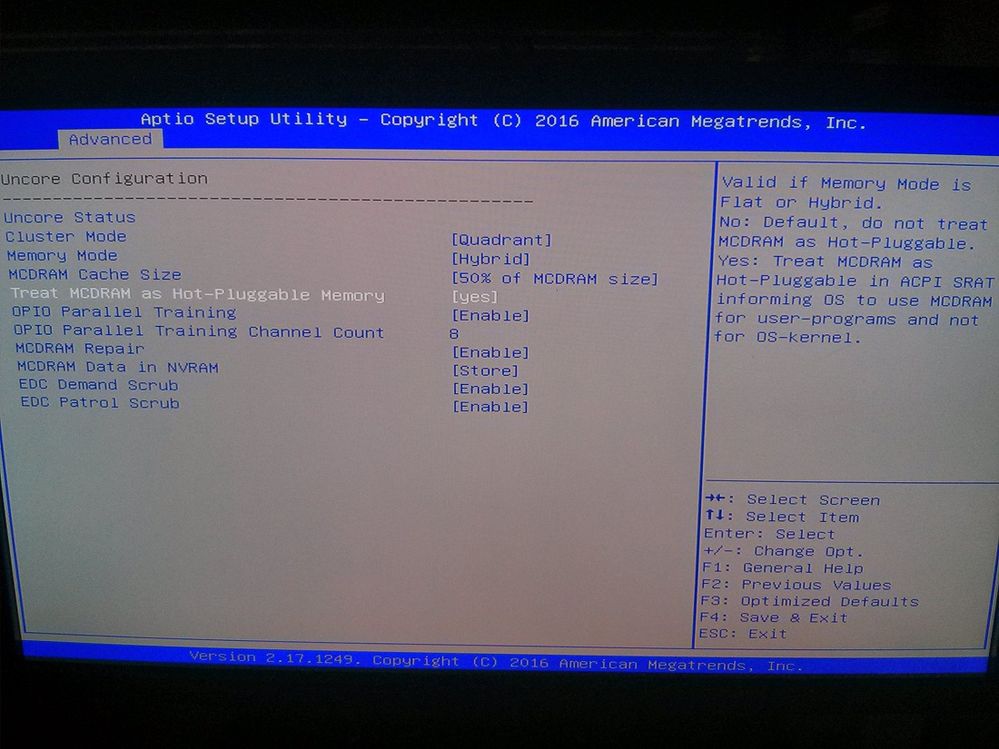

I am not sure of the difference between "Hot-Swappable" and "Hot-Pluggable", but above is a screenshot of the BIOS setting describing what happens when "Hot-Pluggable" is set. Please read the top-right section, which states that "Hot-Pluggable" makes the OS use MCDRAM to run user programs and not the OS kernel.

Thanks.

---

It is nice to see a BIOS that clearly documents the effects of the option setting.

Thanks for the screen shot.

Jim Dempsey

---

It is important to be clear that KNL memory modes have two independent degrees of freedom.

- MCDRAM can be configured for use as
  - directly addressed memory ("Flat mode"),
  - as cache for accesses to DDR4 memory ("Cache mode"), or
  - statically partitioned between directly accessed memory and cache for access to DDR4 memory ("Hybrid mode").
- The mapping of physical addresses to MCDRAM memory allows the chip to be configured as 1, 2, or 4 NUMA nodes.
  - When configured as 1 NUMA node, the mapping of addresses to coherence agents can be either global ("all-to-all") or somewhat localized ("quadrant" mode).
  - When configured as 2 NUMA nodes, each NUMA node has its MCDRAM accesses interleaved across 4 of the 8 MCDRAM controllers, and has its DDR4 accesses interleaved across 3 of the 6 DDR4 channels.
  - When configured as 4 NUMA nodes, each NUMA node has its MCDRAM accesses interleaved across 2 of the 8 MCDRAM controllers. Two of the 4 NUMA nodes have their DDR4 accesses interleaved across the first 3 of the 6 DDR4 channels, and the other two NUMA nodes have their DDR4 accesses interleaved across the last 3 of the 6 DDR4 channels.
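The two independent degrees of freedom can be captured in a small lookup, which also shows why `numactl -H` alone cannot tell All-to-All from Quadrant. This is a sketch under stated assumptions: each cluster node is assumed to get its own CPU-less MCDRAM node in Flat and Hybrid modes, Hemisphere is treated as a 1-node mode like Quadrant, and the mode labels are just strings chosen here.

```python
from typing import List, NamedTuple, Tuple

# Cluster mode -> number of NUMA nodes the cores (and DDR4) are split into.
CLUSTER_NODES = {"all-to-all": 1, "hemisphere": 1, "quadrant": 1,
                 "snc-2": 2, "snc-4": 4}

# Memory mode -> whether part of MCDRAM is exposed as addressable memory.
EXPOSES_MCDRAM = {"flat": True, "cache": False, "hybrid": True}


class Layout(NamedTuple):
    cpu_nodes: int          # NUMA nodes that have cores (and DDR4)
    memory_only_nodes: int  # CPU-less NUMA nodes backed by MCDRAM


def expected_layout(memory_mode: str, cluster_mode: str) -> Layout:
    """NUMA node counts that numactl -H should report for a mode pair."""
    n = CLUSTER_NODES[cluster_mode]
    return Layout(n, n if EXPOSES_MCDRAM[memory_mode] else 0)


def candidate_modes(cpu_nodes: int, memory_only_nodes: int) -> List[Tuple[str, str]]:
    """All (memory mode, cluster mode) pairs consistent with an observed layout."""
    observed = Layout(cpu_nodes, memory_only_nodes)
    return [(m, c) for m in EXPOSES_MCDRAM for c in CLUSTER_NODES
            if expected_layout(m, c) == observed]
```

For the layout in the original question (one node with all 256 CPUs plus one CPU-less node), `candidate_modes(1, 1)` returns six pairs: Flat or Hybrid combined with All-to-All, Hemisphere, or Quadrant. The 16384 MB size of node 1 points to Flat rather than Hybrid, but the cluster mode stays ambiguous.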

More details are available in a number of places, including TACC's presentation (particularly slides 11-21) at:

https://www.dropbox.com/sh/v8cfjomqravk256/AACxasgvXHDAkHS3E0UzJhvla?dl=0&preview=2_Intro_KNLHW.pdf

---

Hi John,

McCalpin, John wrote:

> More details are available in a number of places, including TACC's presentation (particularly slides 11-21) at:
>
> https://www.dropbox.com/sh/v8cfjomqravk256/AACxasgvXHDAkHS3E0UzJhvla?dl=...

On slide 22, which compares different cluster/memory modes across benchmarks, how do you allocate/pin the benchmark data to these two regions in flat mode?

In my experiments, pinning to DDR4 in flat mode (numactl -m 0 ./benchmark) still starts allocating from MCDRAM (node 1) and never uses DDR4, since the data fits within MCDRAM.

Can you please suggest what I may be doing wrong? I will never get graphs like yours unless the allocation goes specifically to MCDRAM or to DDR4 when MCDRAM is in flat mode.

Thanks.

---

Allocation is easy when configured as a single NUMA node -- NUMA node 0 is DDR4 and NUMA node 1 is MCDRAM.
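In Flat mode the MCDRAM shows up as the NUMA node(s) with no CPUs, so the node numbers can be read off `numactl -H` instead of being hard-coded. A rough Python sketch, assuming the output format shown at the top of this thread; the function names are hypothetical.

```python
import re
from typing import Dict, List

NODE_CPUS = re.compile(r"node (\d+) cpus:(.*)")
NODE_SIZE = re.compile(r"node (\d+) size: (\d+) MB")


def parse_numactl_hardware(text: str) -> Dict[int, dict]:
    """Parse `numactl -H` output into {node: {"cpus": [...], "size_mb": int}}."""
    nodes: Dict[int, dict] = {}
    for line in text.splitlines():
        line = line.strip()
        m = NODE_CPUS.fullmatch(line)
        if m:
            nodes.setdefault(int(m.group(1)), {})["cpus"] = [int(c) for c in m.group(2).split()]
            continue
        m = NODE_SIZE.fullmatch(line)
        if m:
            nodes.setdefault(int(m.group(1)), {})["size_mb"] = int(m.group(2))
    return nodes


def memory_only_nodes(nodes: Dict[int, dict]) -> List[int]:
    """Nodes without CPUs; in Flat mode these are the MCDRAM nodes."""
    return sorted(n for n, info in nodes.items() if not info.get("cpus"))
```

The input text can come from `subprocess.run(["numactl", "-H"], stdout=subprocess.PIPE, universal_newlines=True).stdout`; for the output in the original question, node 1 (16384 MB) is the only memory-only node.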

For SNC2 and SNC4 modes, you need to write a wrapper script that computes the MCDRAM node number from the node number used by the cores. As a concrete (but limited) example, consider running an MPI job with 32 MPI tasks on a set of 8 KNL nodes in Flat-SNC4 mode. The command to launch the job would look something like:

```bash
mpirun -np 32 my_wrapper.sh my_mpi_executable.exe
```

In the "my_wrapper.sh" function you need to know the environment variables that your environment uses to convey the MPI rank information to the wrapper shell. In my environment, these look something like:

```bash
#!/bin/bash
# for Intel MPI running under SLURM
# the rank number is in $PMI_RANK
# the total number of ranks is $PMI_SIZE
# the number of nodes is $SLURM_NNODES
MY_TASKS_PER_NODE=$(( $PMI_SIZE / $SLURM_NNODES ))
MY_LOCAL_RANK=$(( $PMI_RANK % $MY_TASKS_PER_NODE ))
# in Flat-SNC4 mode, the MCDRAM node for node "n" is "n+4"
# (this assumes 4 tasks per KNL node, one per SNC4 quadrant)
MY_MEMORY_NODE=$(( $MY_LOCAL_RANK + 4 ))
# "$@" passes the executable and any of its arguments through unchanged
exec numactl --membind=$MY_MEMORY_NODE --cpunodebind=$MY_LOCAL_RANK "$@"
```
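The same rank-to-node arithmetic can be sketched in Python, which makes it easy to check without a cluster. This is a hypothetical generalization of the script above: it assumes the same Intel MPI/SLURM variables, assumes the MCDRAM node paired with CPU node n is n + cluster_nodes (n+4 in Flat-SNC4, as stated above), and spreads ranks evenly when there are more ranks than SNC nodes per KNL.

```python
import os
from typing import List, Mapping, Optional, Sequence


def numactl_command(argv: Sequence[str],
                    env: Optional[Mapping[str, str]] = None,
                    cluster_nodes: int = 4) -> List[str]:
    """Build the numactl prefix for one MPI rank in a Flat-SNC mode."""
    env = os.environ if env is None else env
    rank = int(env["PMI_RANK"])                                    # global rank
    tasks_per_node = int(env["PMI_SIZE"]) // int(env["SLURM_NNODES"])
    local_rank = rank % tasks_per_node                             # rank within this KNL
    # Spread local ranks evenly over the SNC nodes (identity when tasks_per_node == cluster_nodes).
    cpu_node = local_rank * cluster_nodes // tasks_per_node
    mem_node = cpu_node + cluster_nodes                            # assumed MCDRAM partner node
    return ["numactl", f"--membind={mem_node}", f"--cpunodebind={cpu_node}", *argv]
```

A Python wrapper would end with `os.execvp("numactl", numactl_command(sys.argv[1:]))`, which, like `exec` in a shell wrapper, replaces the wrapper process with numactl. With `cluster_nodes=1` the same formula gives the single-node Flat layout (CPU node 0, MCDRAM node 1).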

It is not very much fun looking for these environment variables in different environments, but if you know how to run a job, it is pretty easy to dump the environment inside each instance of "my_wrapper.sh" to unique files (e.g. using "mktemp") and then look for the information you need. (In my experience, this is faster than trying to find the answers in the documentation....)
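The environment-dumping trick can also be done from Python, with `tempfile.mkstemp` playing the role of `mktemp`. A small sketch; the file-name prefix and the optional prefix filter are arbitrary choices made here for illustration.

```python
import os
import tempfile
from typing import Mapping, Optional, Sequence


def dump_environment(env: Optional[Mapping[str, str]] = None,
                     prefixes: Optional[Sequence[str]] = None,
                     directory: Optional[str] = None) -> str:
    """Write the (optionally filtered) environment to a uniquely named file; return its path."""
    env = os.environ if env is None else env
    # mkstemp creates the file atomically with a unique name, so concurrent ranks never collide.
    fd, path = tempfile.mkstemp(prefix="rank_env_", suffix=".txt", dir=directory)
    with os.fdopen(fd, "w") as f:
        for key in sorted(env):
            if prefixes is None or key.startswith(tuple(prefixes)):
                f.write(f"{key}={env[key]}\n")
    return path
```

When ranks run on different KNL nodes, pass `directory=` pointing at a shared filesystem so all the files end up in one place; grepping them for a known rank number usually reveals which variables carry the rank and size.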

---

Hi John,

In single-NUMA-node mode, when I run numactl -m 0 ./application, I see that node 1 (MCDRAM) memory is getting filled up, not node 0 (DDR4).

Does this mean the application is allocating on MCDRAM and not on DDR4, as I wanted?

I also validated this using package vs. DRAM energy, which shows that when running numactl -m 0 ./application, data is allocated on MCDRAM first even though I want it to use DDR4.

Thanks.

---

Hi,

Most of the questions have been covered already; I'll answer the remaining ones:

> Does that mean the 7210 version of Xeon Phi doesn't support Quadrant mode and is switching to the default All-2-All mode?
>
> numactl -H
>
> ...

You cannot distinguish All-2-All from Quadrant mode with numactl. Instead, you can use the hwloc-dump-hwdata tool:

```
sudo hwloc-dump-hwdata | grep Cluster
```

Unfortunately, this requires root privileges.

Regarding:

> In single-NUMA-node mode, when I run numactl -m 0 ./application, I see that node 1 (MCDRAM) memory is getting filled up, not node 0 (DDR4).

I don't know what your application does, but this behaviour may be expected: your benchmark may override the policy set by numactl, either through the memkind library or by using the NUMA API directly to bind allocations to a particular NUMA node.
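One way to spot such an override while the program runs (a suggestion, not something from the thread) is `/proc/<pid>/numa_maps`: the kernel lists each mapping's memory policy (for example `default`, `bind:0`, or `prefer:1`) and its resident pages per node as `N<node>=<pages>`. Below is a rough Python summarizer; the function name is hypothetical. A mapping with an explicit `prefer:1` or `bind:1` policy under `numactl -m 0` means the program called `mbind` itself; depending on the kernel version, mappings that only inherit the process-wide numactl policy may be listed as `default`.

```python
import re
from collections import Counter
from typing import Dict, Iterable, Tuple

NODE_PAGES = re.compile(r"N(\d+)=(\d+)")


def summarize_numa_maps(lines: Iterable[str]) -> Tuple[Counter, Dict[int, int]]:
    """Summarize numa_maps lines: (policy -> number of mappings, node -> resident kB)."""
    policies: Counter = Counter()
    node_kb: Dict[int, int] = {}
    for line in lines:
        fields = line.split()
        if len(fields) < 2:
            continue
        policies[fields[1]] += 1  # second field is the policy, e.g. "prefer:1"
        page_kb = 4
        pages = {}
        for tok in fields[2:]:
            if tok.startswith("kernelpagesize_kB="):
                page_kb = int(tok.split("=", 1)[1])
            m = NODE_PAGES.fullmatch(tok)
            if m:
                pages[int(m.group(1))] = int(m.group(2))
        for node, count in pages.items():
            node_kb[node] = node_kb.get(node, 0) + count * page_kb
    return policies, node_kb
```

Usage: `with open(f"/proc/{pid}/numa_maps") as f: print(summarize_numa_maps(f))`.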

You can easily validate whether numactl -m works properly by running:

```
numactl -m1 python -c $'a=[0]*(1<<30);\nwhile 1:pass;'&
```

and then:

```
numastat -p $!
```

Please remember to kill the background Python process afterwards.

Then repeat the test with "-m0".
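The manual check can be wrapped so that the background process is always cleaned up. A sketch assuming `numactl` and `numastat` are on `PATH`; it uses the current interpreter (`sys.executable`) instead of `python`, `--membind` (the long form of `-m`), and a fixed settling delay, all of which are choices made here rather than part of the recipe above.

```python
import subprocess
import sys
import time
from typing import List

# Builds a list of 2**30 references (about 8 GiB on 64-bit CPython), then spins.
ALLOCATE_AND_SPIN = "a = [0] * (1 << 30)\nwhile True:\n    pass\n"


def check_command(node: int) -> List[str]:
    """numactl invocation that binds the test allocation to `node`."""
    return ["numactl", f"--membind={node}", sys.executable, "-c", ALLOCATE_AND_SPIN]


def run_check(node: int, settle_seconds: float = 30.0) -> str:
    """Start the allocation, capture `numastat -p` for it, and always kill it afterwards."""
    child = subprocess.Popen(check_command(node))  # numactl execs Python, so the PID is the same
    try:
        time.sleep(settle_seconds)  # crude: give the child time to finish allocating
        result = subprocess.run(["numastat", "-p", str(child.pid)],
                                stdout=subprocess.PIPE, universal_newlines=True, check=True)
        return result.stdout
    finally:
        child.kill()  # the step that is easy to forget in the shell version
        child.wait()
```

Calling `print(run_check(1))` and then `print(run_check(0))` reproduces the two runs.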

Hope it helps,

Sebastian

---

Hi Sebastian,

Sebastian S. (Intel) wrote:

> You can easily validate whether numactl -m works properly by running:
>
> `numactl -m1 python -c $'a=[0]*(1<<30);\nwhile 1:pass;'&`
>
> and then:
>
> `numastat -p $!`

Thank you, this helped.

Step 1:

```
numactl -m0 python -c $'a=[0]*(1<<30);\nwhile 1:pass;'
numastat -p 7568

Per-node process memory usage (in MBs) for PID 7568 (python)
                           Node 0          Node 1           Total
                  --------------- --------------- ---------------
Huge                         0.00            0.00            0.00
Heap                         1.09            0.00            1.09
Stack                        0.04            0.00            0.04
Private                   8195.21            0.00         8195.21
----------------  --------------- --------------- ---------------
Total                     8196.34            0.00         8196.34
```

Step 2:

```
numactl -m1 python -c $'a=[0]*(1<<30);\nwhile 1:pass;'
numastat -p 7551

Per-node process memory usage (in MBs) for PID 7551 (python)
                           Node 0          Node 1           Total
                  --------------- --------------- ---------------
Huge                         0.00            0.00            0.00
Heap                         0.00            1.09            1.09
Stack                        0.00            0.04            0.04
Private                      1.87         8193.34         8195.21
----------------  --------------- --------------- ---------------
Total                        1.87         8194.47         8196.34
```

Conclusion: the numactl tool works as expected.
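To compare runs like these programmatically, the Total row can be pulled out of `numastat -p` output. A sketch that assumes the column layout shown above (one column per node followed by a Total column); the function names are hypothetical.

```python
from typing import Dict, List


def parse_numastat_totals(text: str) -> Dict[str, float]:
    """Map column name -> MB from the Total row of `numastat -p` output."""
    header: List[str] = []
    for line in text.splitlines():
        words = line.split()
        if words[:1] == ["Node"] and not header:
            # Header row: "Node 0 Node 1 ... Total"
            header = ["Node " + n for n in words[1::2] if n.isdigit()] + ["Total"]
        elif words[:1] == ["Total"] and header:
            return dict(zip(header, map(float, words[1:])))
    raise ValueError("no Total row found in numastat output")


def dominant_node(totals: Dict[str, float]) -> int:
    """NUMA node holding the most memory."""
    per_node = {int(k.split()[1]): v for k, v in totals.items() if k.startswith("Node ")}
    return max(per_node, key=per_node.get)
```

Applied to the two outputs above, `dominant_node` gives 0 for Step 1 and 1 for Step 2.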

The benchmark I am using is the Intel® Optimized LINPACK Benchmark for Linux.

Thanks.

---

I'm glad I could help.

> The benchmark I am using is the Intel® Optimized LINPACK Benchmark for Linux.

You can look at the system calls this benchmark makes (for example, with strace). You will find a lot of lines like:

```
mbind(0x7fe90d000000, 8388608, MPOL_PREFERRED, {0x0000000000000002, 000000000000000000}, 128, 0) = 0
```

which indicates that the author explicitly sets the memory policy to prefer NUMA node 1, using the 0x0000000000000002 bitmask.
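Decoding such nodemasks by hand is easy to get wrong, so here is a small Python sketch that parses lines like the one above, for example from output collected with `strace -f -e trace=mbind`. It assumes exactly the line format shown (other strace versions may print the mask differently) and 64-bit mask words; bit n of the mask selects node n, so `0x...02` means node 1. The function names are hypothetical.

```python
import re
from typing import List, Optional, Sequence, Tuple

BITS_PER_WORD = 64  # nodemask words are unsigned longs (64-bit on x86_64)

MBIND_RE = re.compile(r"mbind\((0x[0-9a-f]+), (\d+), (MPOL_\w+), \{([^}]*)\}")


def nodes_from_mask(words: Sequence[str]) -> List[int]:
    """Decode a nodemask printed as an array of hex words into node numbers."""
    nodes = []
    for i, word in enumerate(words):
        value = int(word, 16)
        nodes.extend(i * BITS_PER_WORD + b for b in range(BITS_PER_WORD) if (value >> b) & 1)
    return nodes


def parse_mbind(line: str) -> Optional[Tuple[int, int, str, List[int]]]:
    """Return (addr, length, policy, nodes) from one strace mbind line, or None."""
    m = MBIND_RE.search(line)
    if m is None:
        return None
    words = [w.strip() for w in m.group(4).split(",") if w.strip()]
    return int(m.group(1), 16), int(m.group(2)), m.group(3), nodes_from_mask(words)
```

For the line quoted above, `parse_mbind` yields policy `MPOL_PREFERRED` with nodes `[1]`, i.e. MCDRAM on this system.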

Regards

Sebastian
