Hi everyone!

Did you know that, as well as adding TrackingAction face and hand tracking scripts to regular objects in Unity, you can also add them to user interface (UI) overlays to make menu windows that are controlled with the body instead of a keyboard and mouse?

The guide below shows how to do this. We are using Unity 5.1 in our example, but the steps should also work in any version that has the UI system introduced in Unity 4.6.

STEP ONE

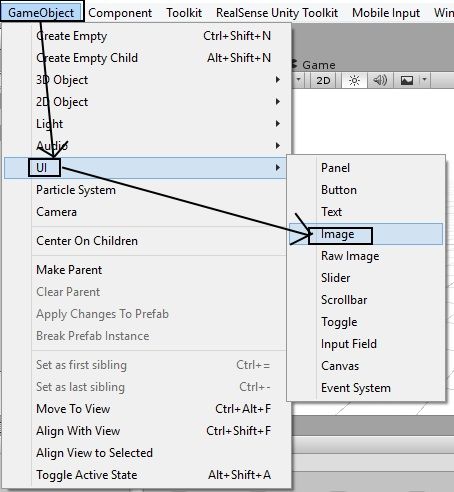

Our first step is to create the UI overlay that will be projected onto the camera view and so cover up anything that is underneath it, like how menus work.

Go to the 'GameObject' menu and select the sub-options 'UI' and 'Image'.

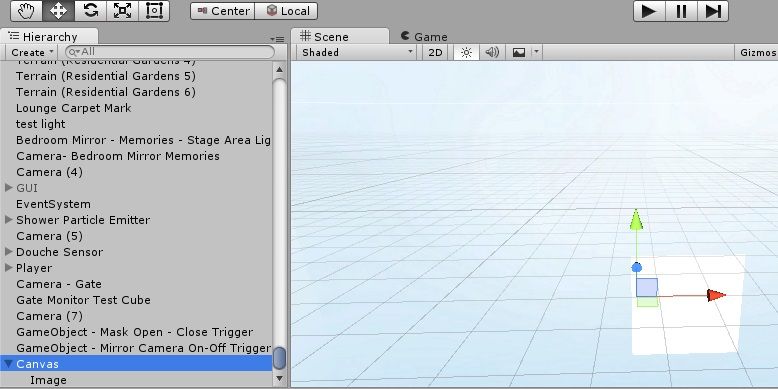

Doing so inserts a “canvas” object into your application – a blank sheet that will be laid on top of the contents of your application.

A special trait of a Canvas object is that it does not have to be placed anywhere near the rest of the contents of your project: because it is projected onto the camera view, it will be visible on the screen no matter where it is placed.

Note that the Canvas object has a childed object attached to it called 'Image'. By selecting this object in the Hierarchy panel, you can redefine its default size to the dimensions that you need your UI overlay to have.

STEP TWO

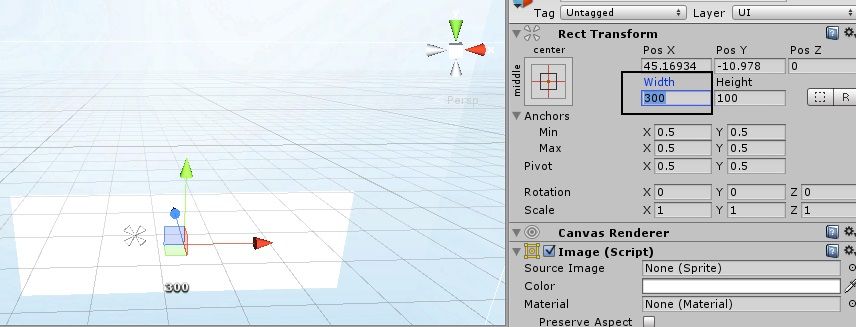

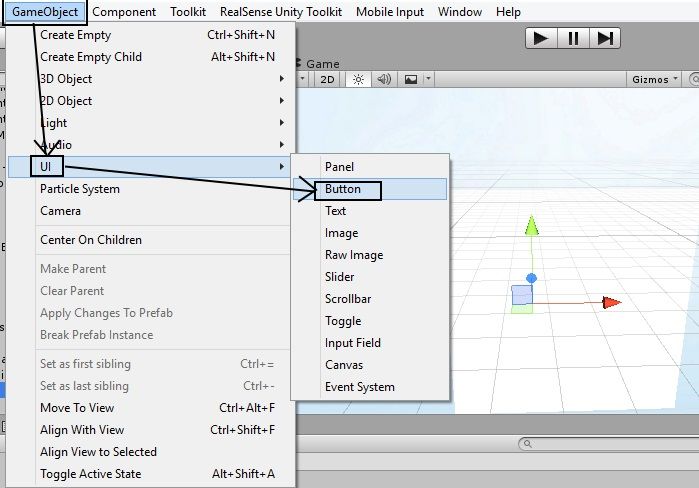

At present, our canvas has nothing on it. Buttons, images and text fields can be attached to a canvas to decorate and illustrate it. In our example, we are going to add a button.

Go to the 'GameObject' menu and select the sub-options 'UI' and 'Button' to add a button object to your canvas.

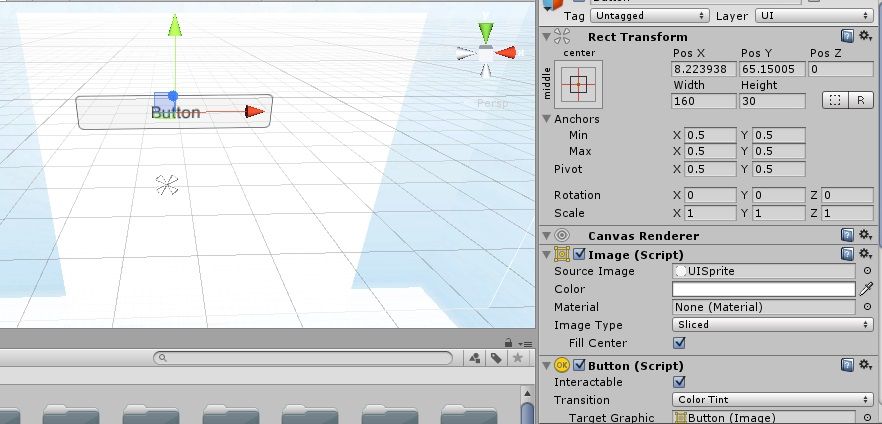

Select the button object in the Hierarchy panel to view its settings.

STEP THREE

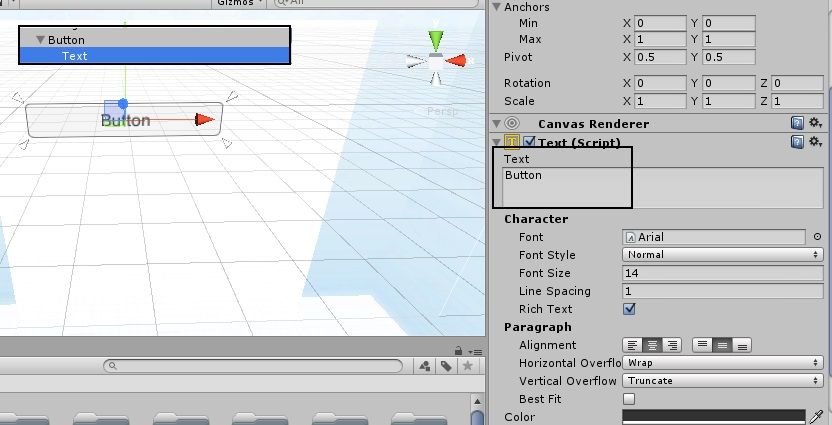

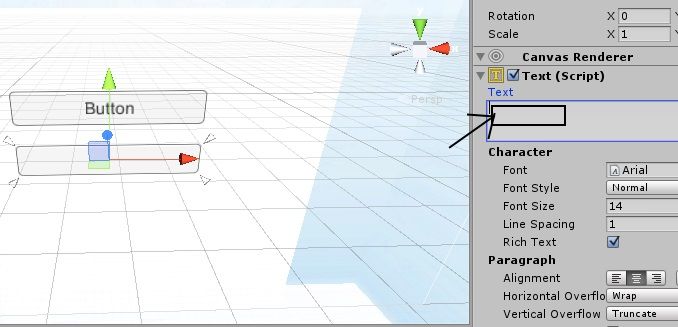

By default, a new button has the text label 'Button' on it. To change this label, expand the 'Button' object in the Hierarchy panel to reveal the 'Text' object childed to it, then select that 'Text' object to display its settings in the Inspector panel.

In the 'Text' text field, you can set your own label for the button that you have created.

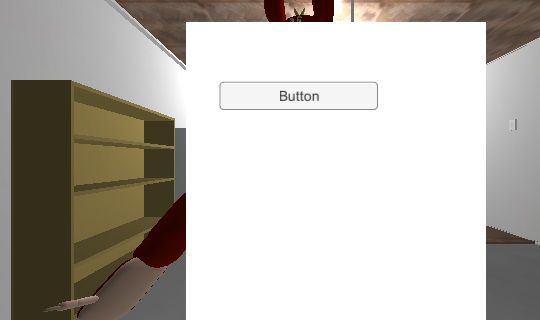

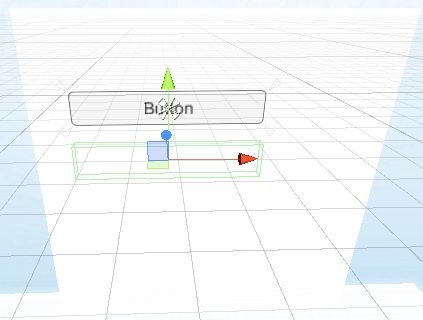

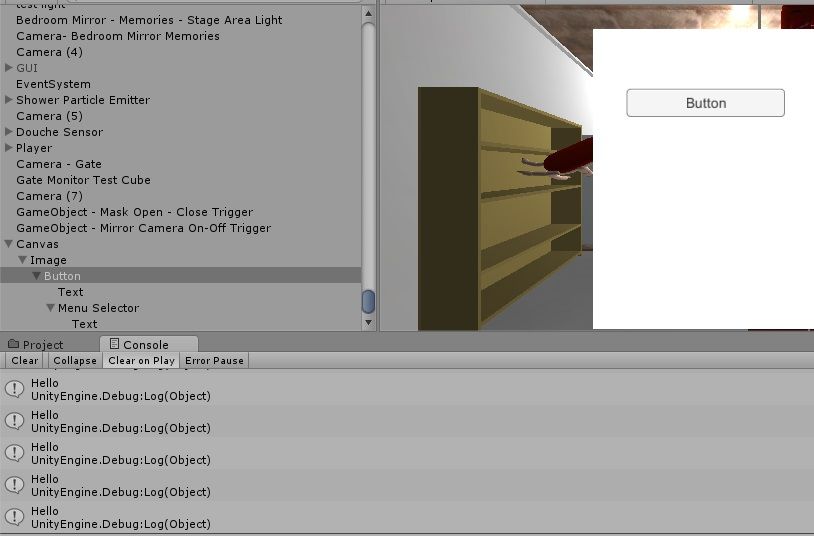

If you do a test run of your project at this point, you will see your GUI overlaid on your project's environment!

STEP FOUR

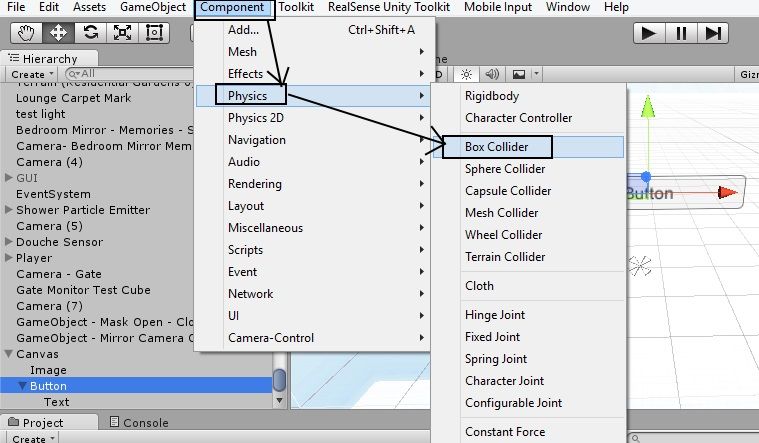

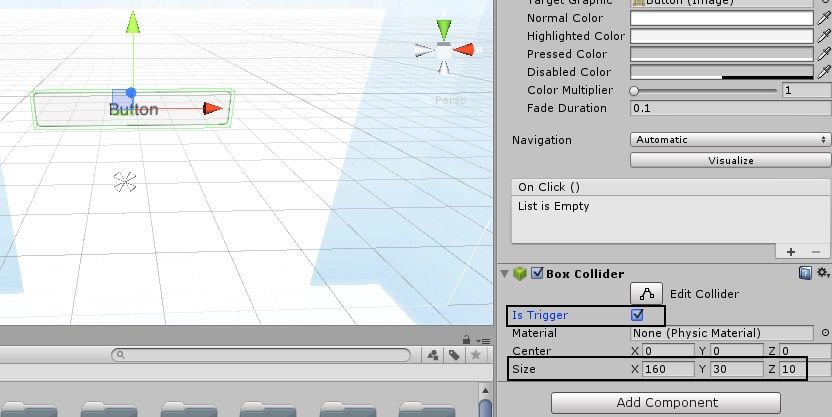

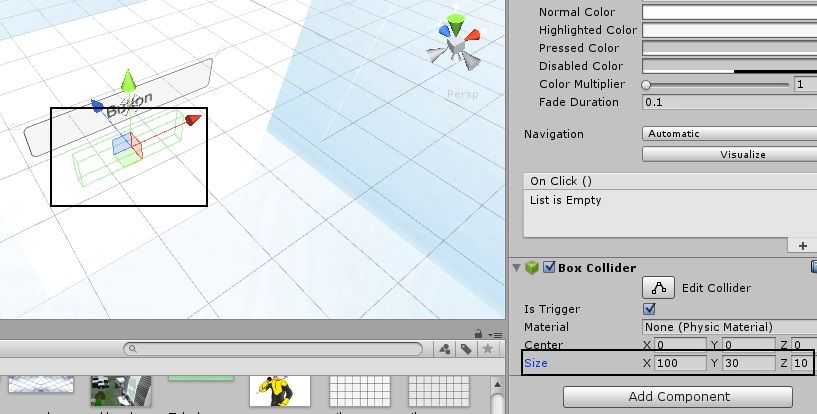

In order for a TrackingAction-equipped menu selector object to interact with buttons, the buttons need to be given a physics Collider field. A 'Box' type collider is the best shape for a menu item because of its flatness on each side.

Select your button object in the Hierarchy panel and then go to the 'Component' menu and select the sub-options 'Physics' and 'Box Collider' to add a Box type collider field around your button.

STEP FIVE

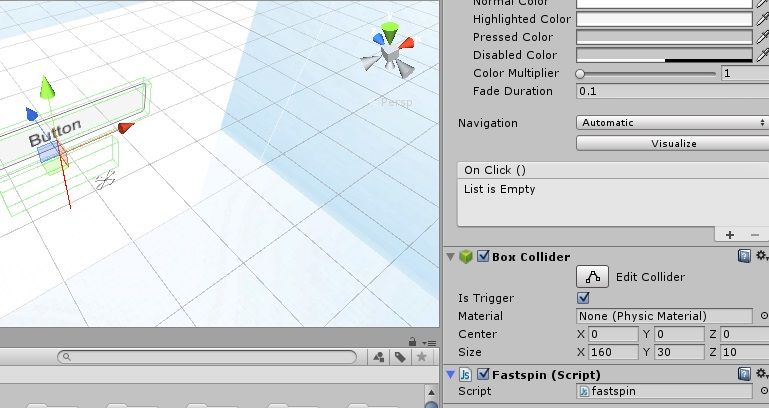

In the Box Collider settings of your button, place a tick mark in the box labeled 'Is Trigger'. Doing so allows objects such as our menu selector to pass through the button without being physically obstructed, and it also tells Unity that an event of some kind should be triggered when the selector interacts with the collider field around the button.

The default size of the collider we have placed around the button is way too small though, so we need to greatly enlarge it by entering new X, Y and Z size values into the collider's settings. We found that X = 160, Y = 30 and Z = 10 works very well with the default button object's size.

STEP SIX

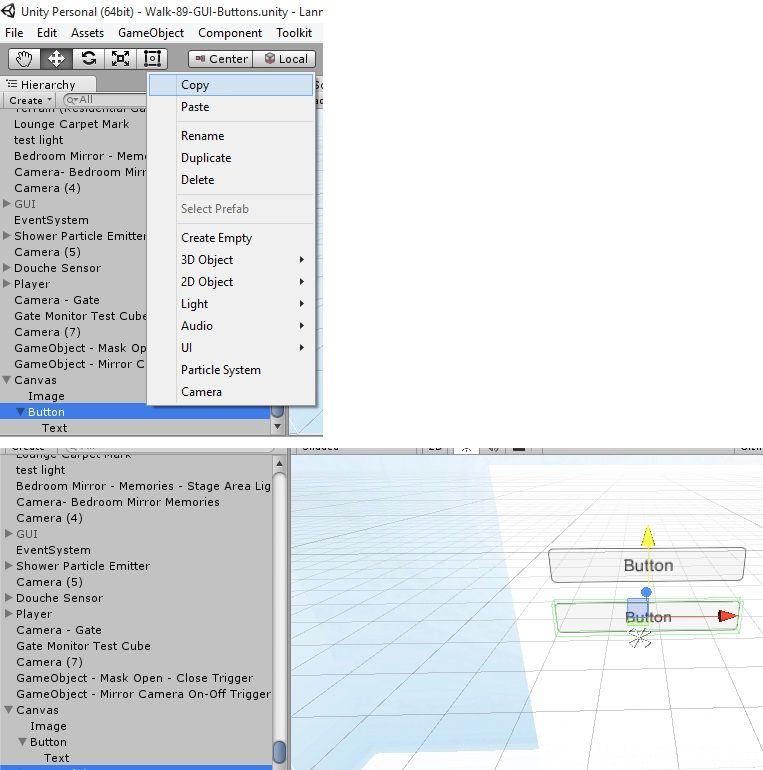

A button on its own cannot do anything though. We need to create the previously mentioned menu selector object to interact with the button's collider field and trigger a programmed event in the button.

Right-click on the button object in the Hierarchy panel and select the 'Copy' menu option, then right-click on the button again and select the 'Paste' option to make a copy of the button that is overlaid perfectly on top of the button. Use the colored arrows to drag the copy downwards a little so that the two are visible as separate objects.

STEP SEVEN

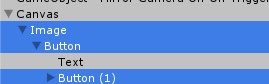

The elements that are displayed on a GUI have their drawing priority set by the order in which they are childed to the parent Canvas object. So Unity will always draw the canvas first. Because the Image object is the first child object of Canvas, that means that the sheet that we are placing our UI elements such as the button on will be drawn second.

What this means is that if the buttons, text labels, etc are childed to the Image object then they will always be drawn on top of the UI sheet. If the buttons were childed to the Canvas and the Image was a child of the buttons, the background image of the sheet would be drawn on top of the buttons and text and hide them.

Because we want our button object and newly created menu selector object to always be on top of the canvas and not behind it, we child the original button to the Image object, and then child the copied button to the original button in a descending hierarchy.
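To make the stacking rule a little more concrete, here is a minimal sketch in plain JavaScript (it is not Unity code and does not use any Unity API). It models the rule described in this step: a parent is drawn before its children, and children are drawn in the order they appear in the hierarchy. The `drawOrder` function and the object name 'MenuSelector' are made up for illustration.

```javascript
// Plain JavaScript model of the draw-order rule (illustrative, not Unity API).
// Each node is drawn before its children; children are drawn first to last.
function drawOrder(node, out = []) {
  out.push(node.name);
  for (const child of node.children || []) {
    drawOrder(child, out);
  }
  return out;
}

// The hierarchy built in this step. "MenuSelector" is a hypothetical name
// for the copied button.
const canvas = {
  name: "Canvas",
  children: [{
    name: "Image",
    children: [{
      name: "Button",
      children: [
        { name: "Text" },
        { name: "MenuSelector", children: [{ name: "Text" }] },
      ],
    }],
  }],
};

console.log(drawOrder(canvas).join(" > "));
```

Anything later in the printed list is drawn over anything earlier, which is why the Image sheet ends up behind the button and the selector.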

STEP EIGHT

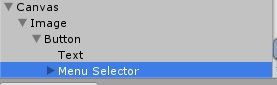

We want our menu selector object to be easily discernible from the button at a glance in our object hierarchy, so we rename it to give it a unique label.

Because we want the selector to be invisible and not obscure the button as it moves over the top of it, we need to make it blank and transparent. The blank part of this task is achieved simply by selecting the 'Text' child object of our menu selector in the Hierarchy panel (it was originally a button, remember) and deleting the word 'Button' to make the surface of the button empty.

As for making the selector transparent … this is not as straightforward as the normal process for applying textures to objects in Unity. This is because only “Sprite” type textures can be applied to a UI button. A sprite is basically a simple 2D image, like those you see in old videogames such as 'Pac-Man' and 'Space Invaders'.

We already had a transparent texture in our project that we could use for a see-through sprite. If you do not have one, you can easily make one in your art software by doing the following:

(a) Create a new image.

(b) Go to the Edit menu and select the 'Select All' option and then the 'Cut' option to cut away the white background.

(c) Finally, save this cut-out in the 'PNG' file format, which supports transparency. If you save it as a JPEG, which has no transparency support, you will just see the same blank white image that you started with.

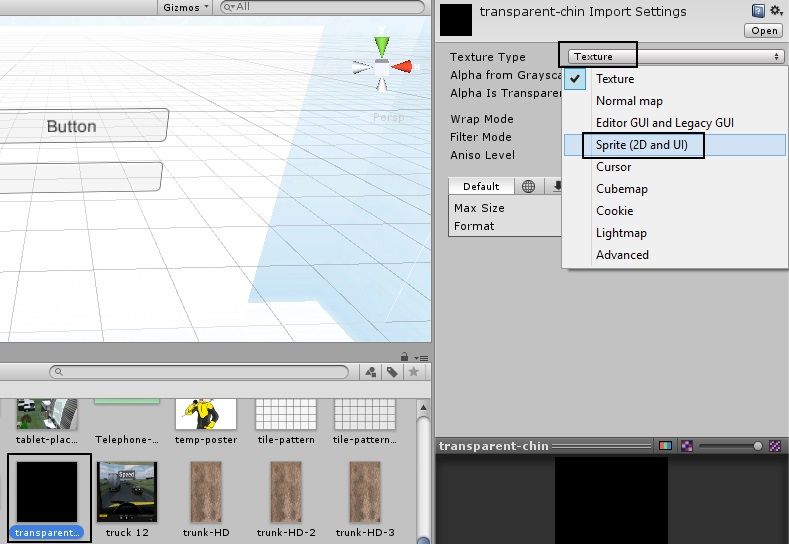

Import the transparent texture file into the 'Assets' panel of Unity and then right-click on it to bring up its settings in the Inspector panel. In the 'Texture Type' option, left-click on the 'Texture' menu option beside it and select 'Sprite (2D and UI)' from the menu. This enables Unity to recognize your image file as a Sprite and makes it selectable as a button texture.

STEP NINE

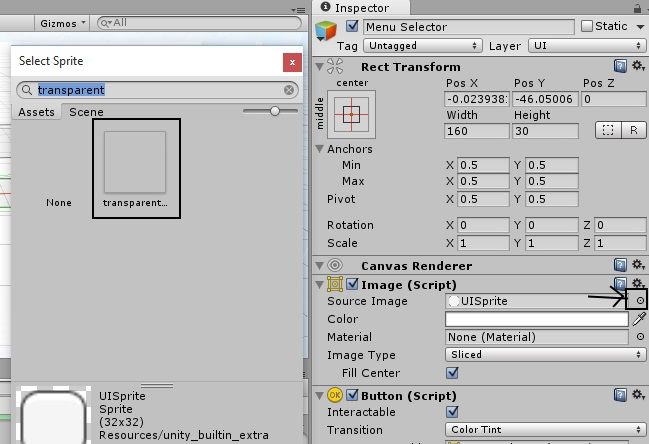

Select your menu selector object and bring up its settings in the Inspector. In the 'Source Image' option of its 'Image' component, left-click on the tiny circle at the end of the option to make a sprite selector window pop up, and type the name of your transparent sprite into the search box at the top of this window to find it quickly. Double left-click on the sprite to select it as the texture for your menu selector object.

The menu selector object is now transparent and will not obscure your button when moving over the top of it.

STEP TEN

An object that moves inside the collider field of another object to trigger an effect (as our menu selector object does) often works more effectively if the collider of the entering object is smaller than the collider of the trigger object (i.e. our button), so that it fits inside the boundaries of the field better.

To ensure that our selector's collider fits easily inside the button's collider boundaries, we change the selector's collider field size (the collider it inherited when we created the object as a copy of the button) so that X = 100 instead of 160. This makes the selector's field narrower than the button's.
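As a quick sanity check on those numbers, the sketch below compares the two collider sizes in plain JavaScript (not Unity code). It assumes both boxes share the same centre and ignores any Transform scaling; the helper names `fitsInside` and `marginPerSide` are made up for this example.

```javascript
// Illustrative check, not Unity code: does one box collider fit inside another
// when both share the same centre? Sizes are the X, Y, Z values from the guide.
const buttonSize = { x: 160, y: 30, z: 10 };
const selectorSize = { x: 100, y: 30, z: 10 };

// True when the inner box is no larger than the outer box on every axis.
function fitsInside(inner, outer) {
  return inner.x <= outer.x && inner.y <= outer.y && inner.z <= outer.z;
}

// Spare room on each side of the inner box along one axis.
function marginPerSide(inner, outer, axis) {
  return (outer[axis] - inner[axis]) / 2;
}

console.log(fitsInside(selectorSize, buttonSize));         // true
console.log(marginPerSide(selectorSize, buttonSize, "x")); // 30
```

With X reduced to 100, the selector's field has 30 units of spare room on each side of the button's field along the X axis.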

STEP ELEVEN

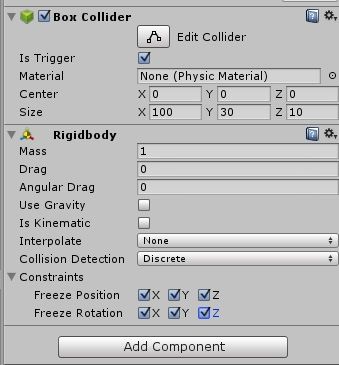

This step is very important. In order for the selector to be able to trigger the effect attached to the button, the selector needs to be given a 'Rigidbody' component by going to the 'Component' menu and selecting the sub-options 'Physics' and 'Rigidbody'.

We recommend un-ticking the Rigidbody's 'Use Gravity' option to disable it (to stop the selector falling off the screen when the application is run) and placing ticks in every one of the position and rotation Constraint settings to stop the selector from moving or rotating out of its desired location on the UI canvas.

If you are wondering why we would stop the menu selector object from moving when we want it to be movable to select menu items: the TrackingAction camera control script that we will be using ignores the constraints set in a Rigidbody component by default and moves freely, following the user's camera inputs.

STEP TWELVE

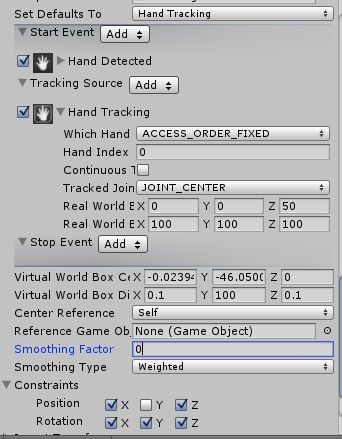

It is now finally time to add a TrackingAction to our menu selector object so that the user can move it using their hand or face inputs!

In this example, we will set the TrackingAction script to track the palm of the hand, and restrict the selector to up-and-down movement by constraining every axis except the 'Y' Position axis.

We recommend using the default Real World Box values, and Virtual World Box values of 0.1, 100, 0.1. Set the weighting to '0' to give the selector minimum resistance to movement.

STEP THIRTEEN

Our menu selector object can now interact with the button object's collider field and trigger an event. However, we need to provide the button with a script to tell it what to do when the trigger is set off by the menu selector object entering its collider's boundaries.

Highlight your button object in the Hierarchy panel and create a new script file inside it that will contain your trigger-event code. In our example, we repurposed our JavaScript file 'Testspin' that we use as a test-bed for new code.

In our test script, we created a JavaScript script with an 'OnTriggerStay' type function. The OnTrigger functions determine what happens when an object enters a collider that is set up as a trigger field.

An 'OnTriggerEnter' function activates the script's contents when an object enters the trigger object's collider field.

An 'OnTriggerExit' function activates the script when an object exits the boundaries of the field after it has entered it.

And the 'OnTriggerStay' function we are using in our article runs a script for as long as an object is within the inner boundaries of the field.

The Debug.Log("Hello"); statement in our script is a classic trigger test: it prints the word 'Hello' in the Unity editor's Console window when the trigger is successfully initiated.
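To show how the three OnTrigger functions relate to each other, here is a simplified model in plain JavaScript. It is not Unity code: Unity's physics engine decides the real timing of these calls, and the function name `simulateTrigger`, the field range and the selector positions are all assumptions made for illustration.

```javascript
// Simplified model of the three trigger callbacks (plain JavaScript, not Unity code).
// The button's trigger field is reduced to a range on the Y axis, and each entry
// in selectorYPerStep is the selector's Y position on one physics step.
function simulateTrigger(fieldMinY, fieldMaxY, selectorYPerStep) {
  const log = [];
  let wasInside = false;
  for (const y of selectorYPerStep) {
    const isInside = y >= fieldMinY && y <= fieldMaxY;
    if (isInside && !wasInside) {
      log.push("OnTriggerEnter");        // first step inside the field
    } else if (isInside && wasInside) {
      log.push("OnTriggerStay: Hello");  // every further step spent inside
    } else if (!isInside && wasInside) {
      log.push("OnTriggerExit");         // first step back outside
    }
    wasInside = isInside;
  }
  return log;
}

// The selector moves up into the field, lingers for two steps, then leaves.
const events = simulateTrigger(-15, 15, [-40, -10, 0, 10, 30]);
console.log(events.join("\n"));
```

Because the Stay branch runs on every step that the selector spends inside the field, a message logged there appears repeatedly, whereas a message logged in the Enter branch would appear once per entry.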

STEP FOURTEEN

When we did a test run of our example application and used the palm of our hand to move the selector backwards and forwards over the button, the message 'Hello' was repeatedly printed in the debug console with each successful activation of the button with our hand movements. Success!

If you would like further advice about the UI technique in this guide, please feel free to comment below!

Hi Marty,

Nice post, what do you think about creating an article from it? It could reach more people with this cool tutorial. :)

If you are interested but don't know how to do it, or need help, please let me know.

Regards,

Felipe

Whether I'm writing on the Intel site or other sites, the decision to do articles vs forum guides, for me, usually comes down to time. I do sometimes write articles for my own site or online publications, but they need a greater amount of preparation and professional presentation, whereas a forum guide can be produced faster as the format is less formal. I looked up the details for contributing articles to the Intel Developer Zone though and will certainly give article production some real thought this week. Thanks! :)

Yes, it's possible to create an interface similar to the Touchless Controller in Unity. There are different ways of approaching the problem. I myself usually use an object representing a pointer that interacts with the collider fields of other objects representing UI elements in order to trigger them (similar to what I did in this article with the menu selector).

I searched my memory for another how-to guide about my trigger approach that I could link you to, and remembered some instalments of one of my developer diaries that might be helpful: Episodes 19 to 21, in which I constructed an interactive electronic diary on a simulated handheld tablet in Unity. Here are the links:

EPISODE 19: Building the handheld tablet model.

http://sambiglyon.org/?q=node/1261

EPISODE 20: Programming the diary itself.

http://sambiglyon.org/?q=node/1264

EPISODE 21: Completing the setup of the page navigation system for the first 20 entries of the diary.

http://sambiglyon.org/?q=node/1270

A full directory of my company's project diaries (the RealSense one is 'My Father's Face') can be found here:

http://sambiglyon.org?q=node/641

You are free to use any of the information and scripts found in these diaries in your own projects.

Your question also reminded me of a developer in this community called Lance who had used the "raycasting" collision detection technique in Unity to get his selector to interact with controls on a spacecraft. This solution is probably closer to how the Touchless Controller works than my own approach.

https://software.intel.com/en-us/forums/realsense/topic/559636

Best of luck!

Thank you so much Marty, I hope I can work with it. I'll check every single link above. Thanks again.

Thanks Wiko! Feel free to ask questions in the comments any time. :)

Marty, can you help me again? After the Touchless Control module, I want to make a drag and drop with hand tracking that can drag multiple objects.

Drag and drop is a tricky request, as I haven't done that in my own project. At least, not in the sense of a traditional software application's drag and drop. In my own application, objects are physically lifted by a hand that grips its fingers round the object and then carries it and opens the fingers to drop it. That may be more complex than what you need?

Another way to do it would be to use a Fixed Joint, so that when you select an item, a script creates a Fixed Joint between the selector and the object, which makes the object move anywhere that the selector goes. Then when you want to drop, another script breaks the Fixed Joint to release the object from the selector's hold.
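The attach-and-release idea can be sketched in plain JavaScript as below. This is only a model of the logic, not Unity's Fixed Joint component or its API: the `Selector` class, its `grab`, `release` and `moveTo` methods, and the `crate` object are all hypothetical names for illustration.

```javascript
// Plain JavaScript model of the attach/release idea (not Unity's FixedJoint API).
// While a joint exists, the held object keeps a fixed offset from the selector.
class Selector {
  constructor(x, y) {
    this.position = { x, y };
    this.joint = null; // { target, offset } while something is held
  }

  // "Create the joint": remember the target and its offset from the selector.
  grab(target) {
    this.joint = {
      target,
      offset: {
        x: target.position.x - this.position.x,
        y: target.position.y - this.position.y,
      },
    };
  }

  // "Break the joint": the target no longer follows the selector.
  release() {
    this.joint = null;
  }

  moveTo(x, y) {
    this.position = { x, y };
    if (this.joint) {
      const { target, offset } = this.joint;
      target.position = { x: x + offset.x, y: y + offset.y };
    }
  }
}

const selector = new Selector(0, 0);
const crate = { position: { x: 2, y: 1 } }; // hypothetical draggable object

selector.grab(crate);
selector.moveTo(10, 5); // crate follows to (12, 6)
selector.release();
selector.moveTo(0, 0);  // crate stays at (12, 6)
console.log(crate.position);
```

To carry several objects at once, the single `joint` field could be replaced by a list of joints that are all updated in `moveTo`, though that variation is not shown here.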

Thanks Marty, I think I'll go with the Joint approach, because I need an object to act as a cursor that picks up another object in drag and drop.
