Hi everyone,

By analyzing the code of the Unity TrackingAction face and hand tracking script supplied with the SDK, I have worked out how to switch the TrackingAction's constraints and Inverts on and off with scripting whilst an application is running. This is a feature I have wished for since RealSense was launched.

Here's a full list of the instructions.

CONSTRAINTS

ROTATION

On

```csharp
Constraints.Rotation.X = true;
Constraints.Rotation.Y = true;
Constraints.Rotation.Z = true;
```

Off

```csharp
Constraints.Rotation.X = false;
Constraints.Rotation.Y = false;
Constraints.Rotation.Z = false;
```

POSITION

On

```csharp
Constraints.Position.X = true;
Constraints.Position.Y = true;
Constraints.Position.Z = true;
```

Off

```csharp
Constraints.Position.X = false;
Constraints.Position.Y = false;
Constraints.Position.Z = false;
```

INVERTS

POSITION

On

```csharp
InvertTransform.Position.X = true;
InvertTransform.Position.Y = true;
InvertTransform.Position.Z = true;
```

Off

```csharp
InvertTransform.Position.X = false;
InvertTransform.Position.Y = false;
InvertTransform.Position.Z = false;
```

ROTATION

On

```csharp
InvertTransform.Rotation.X = true;
InvertTransform.Rotation.Y = true;
InvertTransform.Rotation.Z = true;
```

Off

```csharp
InvertTransform.Rotation.X = false;
InvertTransform.Rotation.Y = false;
InvertTransform.Rotation.Z = false;
```

1. CONSTRAINTS

We will begin by looking at the Constraint settings.

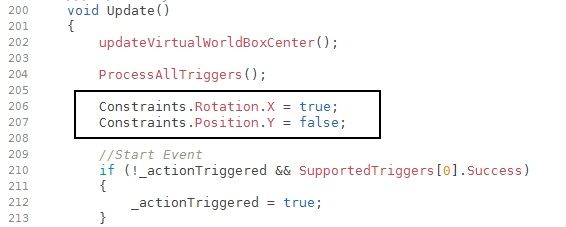

In the first test that I tried, I placed some sample instructions at the beginning of the TrackingAction's Update function, starting at line 200.

All that this achieves, though, is to enable or disable the constraints automatically when the application is run. You could get the same effect by configuring the constraints manually before running the application. To make the instructions useful, we need to put them inside conditional statements such as "if", so that the constraints only change when a particular event occurs.
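As a minimal sketch of that idea, the lines below only change a constraint when the user presses a key. They assume they are pasted inside the TrackingAction's existing Update function, where the Constraints setting used in the instructions above is available. The L key is an arbitrary choice, and Input.GetKeyDown is Unity's standard key check from the (legacy) Input Manager.

```csharp
// Sketch: paste near the start of the TrackingAction's EXISTING Update() function.
// Do not add a second Update() to the class.
// KeyCode.L is an arbitrary, hypothetical choice of key.
if (Input.GetKeyDown(KeyCode.L))
{
    // Flip the X rotation constraint: true (ticked, axis locked)
    // becomes false (unticked, axis free), and vice versa.
    Constraints.Rotation.X = !Constraints.Rotation.X;
}
```

The same pattern works for any of the Position, Rotation or InvertTransform settings listed above; only the condition and the setting change.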

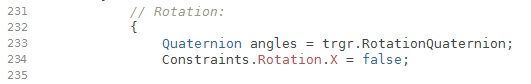

In the next test, I added an instruction to the TrackingAction's main object rotation routine at line 232 that unlocked the X rotation constraint (which was set as locked before the program was run) when tracking of the hand or face was acquired. When tracking was lost, the X rotation constraint automatically locked again.

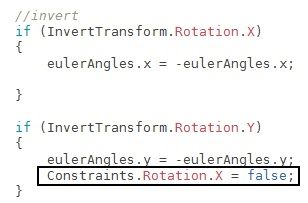

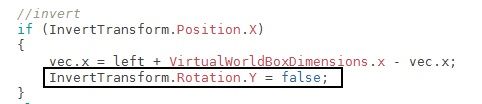

It also occurred to me that it would be useful to be able to change constraints when certain Inversion axes were set. In the example below, the constraint-changing instruction was incorporated into the inversion code beginning at line 250 of the TrackingAction. An instruction was inserted into the code controlling the inversion of the Y axis so that when Y was set as inverted, the X rotation axis would be unlocked and then lock again when tracking was lost.

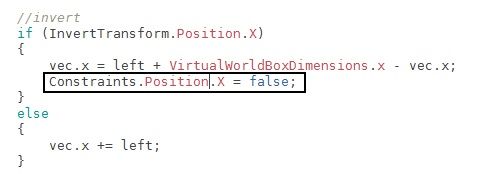

In the final example, an instruction was added to the Position-controlling code beginning at line 324 to unlock the X Position axis if the X Position axis was inverted.

2. INVERTS

The way that invert-changing instructions are inserted into the TrackingAction code is practically identical to the Constraint examples above, so we'll just do one quick example. In this example, when the X Position axis is inverted, the Y Rotation axis is unlocked.

A key difference between this test and the earlier Constraint ones was that when tracking was lost, the changed invert axis did not revert to its original setting.

CONCLUSION

Have a play around with the instructions and see how you can use them to adjust the TrackingAction to achieve the constraint and invert manipulation effects that you desire.

You could also place the Constraint or Invert changing instruction in a new function in the TrackingAction (e.g. "public void InvertJoint()") that does not run automatically when the script starts up and only runs when a call is made to that specific function. You could then write a separate C# script that sends an activation call to that part of the TrackingAction, using a line like the one below:

```csharp
GameObject.Find("NAME OF OBJECT").GetComponent<TrackingAction>().InvertJoint();
```

Or, if your separate script is attached to the same object as the TrackingAction, you can use a simpler version:

```csharp
GetComponent<TrackingAction>().InvertJoint();
```

In GameObject statements you have written before, you may be used to referencing only the script component itself (e.g. GetComponent<TrackingAction>()). Adding the name of one of that script's functions after it, as in GetComponent<TrackingAction>().InvertJoint(), calls just that function, so you can jump straight to a specific section of the script instead of having to run the entire script.
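Putting these pieces together, here is a minimal sketch. InvertJoint is the example name from the text; flipping the X Position invert, the caller script name InvertCaller and the I key are assumptions for illustration only. The function goes at class level inside the TrackingAction (not inside Update) and is marked public so that another script is allowed to call it.

```csharp
// Added inside the TrackingAction class, at class level (not inside Update()).
public void InvertJoint()
{
    // Flip the X Position invert each time this function is called.
    InvertTransform.Position.X = !InvertTransform.Position.X;
}
```

```csharp
using UnityEngine;

// Hypothetical caller script (InvertCaller.cs), attached to the same GameObject
// as the TrackingAction. Unity expects the file name to match the class name.
public class InvertCaller : MonoBehaviour
{
    private TrackingAction trackingAction;

    void Start()
    {
        // Look up the TrackingAction component once and keep a reference to it.
        trackingAction = GetComponent<TrackingAction>();
    }

    void Update()
    {
        // Press I (an arbitrary key) to run InvertJoint() once per key press.
        if (Input.GetKeyDown(KeyCode.I))
        {
            trackingAction.InvertJoint();
        }
    }
}
```

If your TrackingAction is a renamed copy (for example TrackingAction3), use that class name in GetComponent instead.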

Best of luck! If you have questions, please feel free to ask them in the comments below.

Further research revealed how to change other conditions in the TrackingAction whilst an application is running.

SMOOTHING VALUE

The instruction for changing smoothing is SmoothingFactor = (number you want to change the smoothing to);

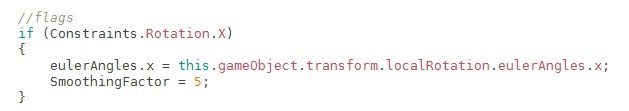

In the example below, if X Rotation is set as constrained then when tracking starts, the Smoothing value is automatically changed to '5.'

A possible application of this would be to create a script that increases the smoothing value to '19' to make the arms of an avatar move very slowly when a very heavy object is held in the avatar's hand(s).
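A minimal sketch of that idea, assuming two hypothetical public functions added at class level inside the TrackingAction and assuming your normal smoothing value is 0 (replace it with whatever value you actually use). A separate script, such as the one that handles picking objects up, would call them.

```csharp
// Hypothetical functions added at class level inside the TrackingAction.
public void StartHoldingHeavyObject()
{
    // A higher smoothing value makes the tracked object follow the hand more slowly.
    SmoothingFactor = 19;
}

public void StopHoldingHeavyObject()
{
    // Assumption: 0 is your normal smoothing value; change it to match your setup.
    SmoothingFactor = 0;
}
```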

SMOOTHING TYPE

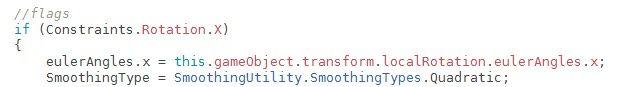

You might also want to change the Smoothing type of a particular object so that it moves differently when a certain condition is met. In the example below, the Smoothing Type is changed to 'Stabilizer' when X Rotation is constrained.

The instructions for each particular Smoothing Type are:

```csharp
// Spring
SmoothingType = SmoothingUtility.SmoothingTypes.Spring;

// Stabilizer
SmoothingType = SmoothingUtility.SmoothingTypes.Stabilizer;

// Weighted
SmoothingType = SmoothingUtility.SmoothingTypes.Weighted;

// Quadratic
SmoothingType = SmoothingUtility.SmoothingTypes.Quadratic;
```
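Rather than writing one function per smoothing type, a single helper could take the type as a parameter. This is a minimal sketch: SetSmoothingType is a hypothetical name, and it assumes the function is added at class level inside the TrackingAction.

```csharp
// Hypothetical helper added at class level inside the TrackingAction,
// so another script can choose any of the smoothing types listed above.
public void SetSmoothingType(SmoothingUtility.SmoothingTypes newType)
{
    SmoothingType = newType;
}

// Example call from another script on the same GameObject:
// GetComponent<TrackingAction>().SetSmoothingType(SmoothingUtility.SmoothingTypes.Stabilizer);
```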

In the example below, the Smoothing Type is changed to Quadratic when X Rotation is constrained and tracking starts.

CALLING AN ISOLATED FUNCTION FROM ANOTHER SCRIPT

Earlier in this article, we mentioned the possibility of putting instructions to change a TrackingAction setting inside a specially created function that would only run when an external script calls it (i.e. the TrackingAction would normally ignore it, so that its functioning was not impeded).

Research into how to do this found that it was difficult to place such a self-created function within the TrackingAction's code, as much of that code seems to sit in "protected areas" that cannot be reached from outside the TrackingAction. What we need to do, then, is place our new function outside these protected areas so that calls sent from outside the TrackingAction can reach it.

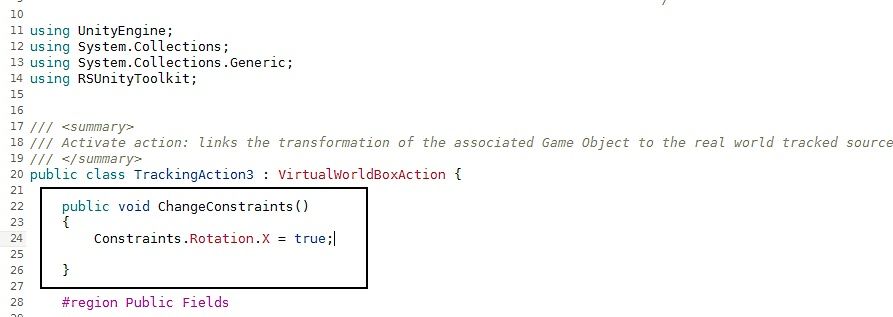

Through experimentation, I found that the best place seemed to be straight after line 20, the main 'class' definition of the TrackingAction. Placed there, our routine sits at the top level of the class, outside all of the functions that the TrackingAction processes while it is running, because their code does not appear until further down the listing. The function also needs to be declared public (e.g. public void ChangeConstraints()), because C# functions are private by default and a private function cannot be called from another script.

In my test setup, I created a function called ChangeConstraints() and placed a line of code in it that would place a tick in the X Rotation constraint of the TrackingAction when tracking started, freezing that rotational axis.

I now needed a way to make an external activation call to that particular function inside the TrackingAction. To do this, I created a basic test cube called 'Cubey1' and added a TrackingAction to it. I then attached a simple C# script called 'Testo' to the same cube that, when run, would send an activation call to my ChangeConstraints() function inside the TrackingAction code.

Here is the C# code of the 'Testo' script.

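```csharp
using UnityEngine;
using System.Collections;

// The class name must match the script's file name (Testo.cs).
public class Testo : MonoBehaviour {

    void Start () {
        // Find the object named "Cubey1" and call the custom
        // ChangeConstraints() function on its TrackingAction3 component.
        GameObject.Find("Cubey1").GetComponent<TrackingAction3>().ChangeConstraints();
    }
}
```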

In other words, the formula for the line is:

```csharp
GameObject.Find("NAME OF OBJECT").GetComponent<TrackingAction>().NAME OF YOUR FUNCTION();
```

When I ran my project in the Unity editor and manually placed a tick in the box beside the 'Testo' script to activate it, a constraint tick immediately appeared in the X Rotation section of the TrackingAction's constraints, showing that this axis had now been locked by my external script's call to the TrackingAction.

There are numerous practical applications for this technique. For example, the external script could use one of Unity's trigger functions, such as OnTriggerEnter, so that when a TrackingAction-powered object makes contact with a collider that is set up as a trigger, a particular constraint is enabled.

This could be used to stop TrackingAction-driven facial features on an avatar from moving too much when the user's head is lowered or raised, with triggers placed in front of and behind the avatar's head. When the avatar's head made contact with a trigger field while being lifted or lowered, an activation instruction could be sent to special functions inside the TrackingAction of each facial object, switching on one or more constraints. That object's movement would then stay frozen until the head moved out of the trigger field again, so the facial feature would not keep moving even if the head continued to move after entering the trigger field.
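A minimal sketch of such a trigger-zone script follows. It assumes ChangeConstraints() is a public function added at class level in the TrackingAction (as in the Testo test), and that a matching ReleaseConstraints() also exists; ReleaseConstraints and FreezeFeaturesZone are hypothetical names. Note that Unity only sends trigger messages when at least one of the two colliders involved has a Rigidbody.

```csharp
using UnityEngine;

// Hypothetical script for a trigger collider ("Is Trigger" ticked) placed
// in front of or behind the avatar's head.
public class FreezeFeaturesZone : MonoBehaviour
{
    // Assign in the Inspector: the head's collider, and the TrackingActions
    // of the facial feature objects that should freeze.
    public Collider headCollider;
    public TrackingAction[] facialFeatures;

    void OnTriggerEnter(Collider other)
    {
        if (other != headCollider) return;  // ignore anything except the head
        foreach (TrackingAction feature in facialFeatures)
        {
            feature.ChangeConstraints();    // custom function that locks the constraint(s)
        }
    }

    void OnTriggerExit(Collider other)
    {
        if (other != headCollider) return;
        foreach (TrackingAction feature in facialFeatures)
        {
            feature.ReleaseConstraints();   // hypothetical counterpart that unlocks them again
        }
    }
}
```

Keeping the list of features in the zone script, rather than on the head, lets each trigger freeze a different set of facial objects.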

I'm sure you can think of plenty of uses for this technique for your own projects!

Continuing the research... the TrackingAction script does not act in total isolation. It also draws configuration data from other script files in the Unity Toolkit, such as HandTrackingRule.cs (located in the folder RSUnityToolkit > Internals > Rules). Settings contained there include the default values of the Real World Box and the type of joint tracking being used (set in this script to Wrist by default).

This means that by also making edits to these scripts, there is additional potential for customizing tracking to your project needs.

Important note: unlike editing the TrackingAction, where you can create a number of different versions of the TrackingAction with different configurations, if you edit one of the support scripts such as HandTrackingRule, the change will apply to every one of your TrackingActions, since they all draw their data from the same file.

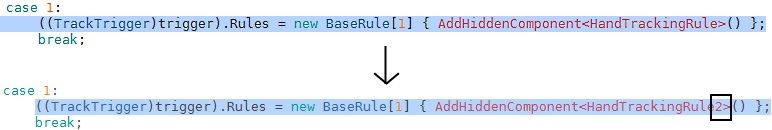

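This can be avoided, though, if you create copies of the support script (e.g. HandTrackingRule2 and HandTrackingRule3) and change the reference on line 120 of the TrackingAction to point to a particular copy. The class inside each copy also needs renaming to match its new file name, since C# will not compile two classes with the same name in the same namespace.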

Bear in mind that edits to this script will only affect hand tracking, as the name of the script (HandTrackingRule) suggests. To affect the face tracking rule, you need to edit the face version of this script, FaceTrackingRule, which is in the same 'Rules' folder in your project.
