Authors: Bhargavi Karumanchi, Mengni Wang, Feng Tian, Haihao Shen, Saurabh Tangri

Guest Author from Microsoft: Wenbing Li

ONNX (Open Neural Network Exchange)

ONNX is an open format to represent both deep learning and traditional machine learning models. ONNX is developed and supported by a community of partners such as Microsoft, Facebook, and AWS. At a high level, ONNX is designed to express machine learning models while offering interoperability across different frameworks. ONNX Runtime is a cross-platform inference engine for ONNX models; on Intel hardware, it can be used to maximize ONNX inference performance.

Quantization

Quantization replaces floating-point arithmetic (FP32) with integer arithmetic (INT8). Using lower-precision data reduces memory bandwidth requirements and improves performance.

8-bit integer (INT8) computations outperform higher-precision (FP32) computations because more values fit into a single processor instruction, and because lower-precision data requires less data movement, which reduces memory bandwidth.
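The article does not spell out how FP32 values are mapped to INT8. A common choice is an affine (asymmetric) mapping with a scale $s$ and a zero point $z$, where $[x_{\min}, x_{\max}]$ is the observed range of the tensor and $[q_{\min}, q_{\max}]$ is the integer range; the notation below is ours, and other schemes (for example symmetric, with $z = 0$) are also used:

```latex
\begin{aligned}
s &= \frac{x_{\max} - x_{\min}}{q_{\max} - q_{\min}},
\qquad
z = q_{\min} - \operatorname{round}\!\left(\frac{x_{\min}}{s}\right), \\
q &= \operatorname{clamp}\!\left(\operatorname{round}\!\left(\frac{x}{s}\right) + z,\; q_{\min},\; q_{\max}\right), \\
\hat{x} &= s\,(q - z).
\end{aligned}
```

For uint8 ($q_{\min} = 0$, $q_{\max} = 255$), each stored value takes 1 byte instead of the 4 bytes of FP32, and for $x$ inside $[x_{\min}, x_{\max}]$ the reconstruction error $|x - \hat{x}|$ is at most $s/2$.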

Intel® Deep Learning Boost (Intel® DL Boost)

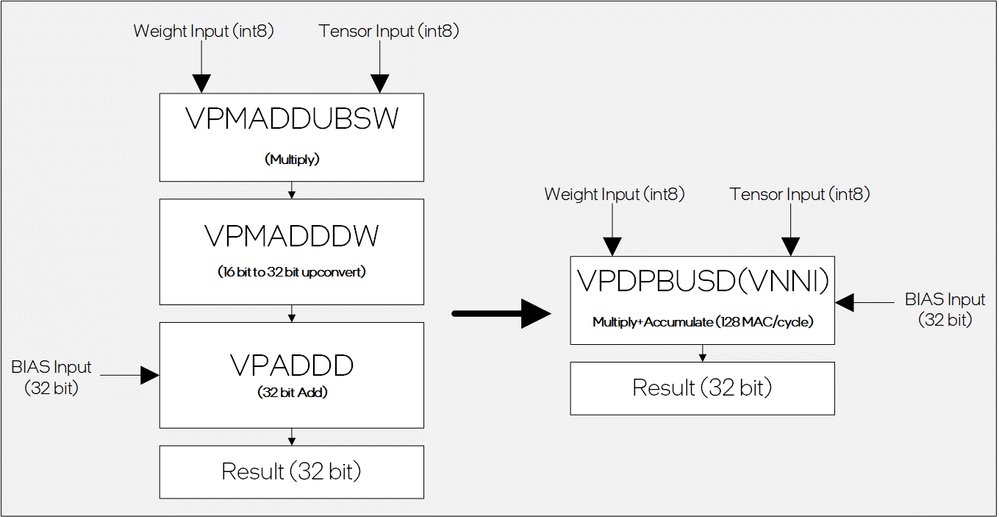

Intel® Deep Learning Boost (Intel® DL Boost) is a hardware acceleration feature available in 2nd Generation Intel® Xeon® Scalable processors that increases the performance of deep learning workloads. Intel DL Boost Vector Neural Network Instructions (VNNI) can deliver up to a 3X performance improvement by combining three instructions into one for deep learning computations, thereby reducing memory bandwidth and maximizing compute efficiency and cache utilization.
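The arithmetic behind "three instructions into one" can be emulated in NumPy. Without VNNI, an INT8 dot product on AVX-512 typically chains VPMADDUBSW (u8×s8 products, adjacent pairs summed with int16 saturation), VPMADDWD against a vector of ones (adjacent int16 values summed into int32), and VPADDD (accumulate); VNNI's VPDPBUSD performs all three steps in one instruction. This sketch models only the per-lane integer arithmetic, not real intrinsics or timing, and the function names are our own.

```python
import numpy as np

def dot_u8s8_legacy(acc, a_u8, b_s8):
    """Three-instruction AVX-512 sequence, emulated per 32-bit lane."""
    prod = a_u8.astype(np.int32) * b_s8.astype(np.int32)
    # VPMADDUBSW: sum adjacent u8*s8 products, saturating to int16
    pairs = np.clip(prod[0::2] + prod[1::2], -32768, 32767)
    # VPMADDWD with a vector of ones: sum adjacent int16 values into int32
    quads = pairs[0::2] + pairs[1::2]
    # VPADDD: add to the int32 accumulator
    return acc + quads

def dot_u8s8_vnni(acc, a_u8, b_s8):
    """VPDPBUSD, emulated: four u8*s8 products summed into each int32 lane."""
    prod = a_u8.astype(np.int32) * b_s8.astype(np.int32)
    return acc + prod.reshape(-1, 4).sum(axis=1)

# Small values: both paths agree (no int16 saturation is possible)
rng = np.random.default_rng(0)
acc = np.zeros(16, dtype=np.int32)
a = rng.integers(0, 16, size=64, dtype=np.uint8)
b = rng.integers(-16, 16, size=64, dtype=np.int8)
print(np.array_equal(dot_u8s8_legacy(acc, a, b), dot_u8s8_vnni(acc, a, b)))  # True

# Large values: the legacy path saturates at its int16 step
acc1 = np.zeros(1, dtype=np.int32)
a_big = np.full(4, 200, dtype=np.uint8)
b_big = np.full(4, 127, dtype=np.int8)
print(dot_u8s8_legacy(acc1, a_big, b_big), dot_u8s8_vnni(acc1, a_big, b_big))  # [65534] [101600]
```

Besides saving instructions, the second case shows a behavioral difference: the legacy sequence can saturate at its intermediate int16 step, while VPDPBUSD sums the four products directly in int32.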

Quantization can introduce accuracy loss because fewer bits limit the precision and range of values. However, researchers have extensively demonstrated that weights and activations can be represented as 8-bit integers (INT8) without incurring significant loss in accuracy. Techniques such as post-training quantization (PTQ) and quantization-aware training (QAT) can minimize or recover the accuracy lost to quantization. These techniques are available in an Intel-supported open-source tool, Intel® Neural Compressor.

Intel® Neural Compressor

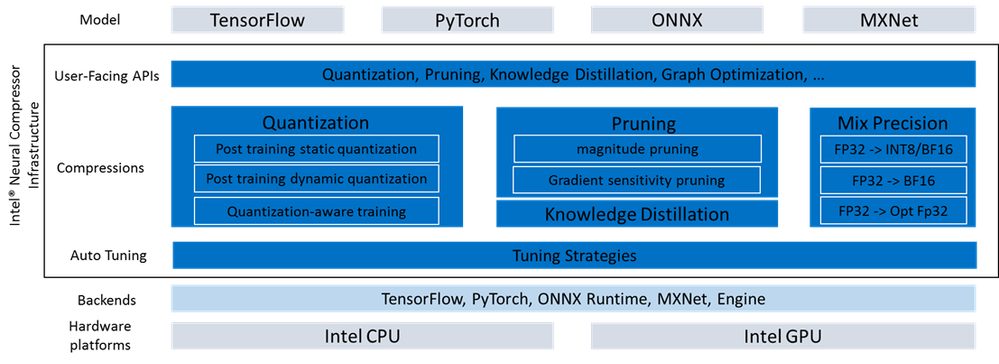

Intel® Neural Compressor (formerly known as Intel® Low Precision Optimization Tool) is an open-source Python tool that delivers a unified interface across multiple deep learning frameworks. It can be used to apply key model optimization techniques, such as quantization, pruning, and knowledge distillation, to compress models. The tool makes it easy to implement accuracy-driven tuning strategies that help users create highly optimized AI models. It supports multiple weight pruning algorithms, which generate pruned models with predefined sparsity goals, and it can apply knowledge distillation to transfer knowledge from a teacher model to a student model.
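To make the two compression ideas concrete, here is a framework-agnostic NumPy sketch of the generic techniques: magnitude pruning to a target sparsity, and the common temperature-softened distillation loss (KL divergence from teacher to student, scaled by T²). This is not Intel® Neural Compressor's implementation or API; the function names and the default temperature are our own assumptions.

```python
import numpy as np

def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude weights so that about `sparsity` of them are zero."""
    k = int(round(sparsity * weights.size))  # number of weights to remove
    if k == 0:
        return weights.copy()
    # The k-th smallest absolute value becomes the pruning threshold
    threshold = np.partition(np.abs(weights).ravel(), k - 1)[k - 1]
    return np.where(np.abs(weights) > threshold, weights, 0.0)

def softmax(logits, temperature=1.0):
    z = logits / temperature
    z = z - z.max(axis=-1, keepdims=True)  # subtract the max for numerical stability
    e = np.exp(z)
    return e / e.sum(axis=-1, keepdims=True)

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on temperature-softened outputs, scaled by T^2."""
    p_teacher = softmax(teacher_logits, temperature)
    p_student = softmax(student_logits, temperature)
    kl = (p_teacher * (np.log(p_teacher) - np.log(p_student))).sum(axis=-1)
    return float(kl.mean() * temperature ** 2)

rng = np.random.default_rng(0)
w = rng.normal(size=(10, 10))
pruned = magnitude_prune(w, sparsity=0.5)
print(np.count_nonzero(pruned == 0))  # 50

teacher = rng.normal(size=(4, 5))
print(distillation_loss(teacher, teacher))  # 0.0 (identical outputs)
```

In practice, pruning is usually applied gradually during fine-tuning and the distillation term is mixed with the ordinary task loss; both are left out here to keep the sketch short.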

As shown in Figure 2, Intel® Neural Compressor is built on top of the frameworks and relies on their interfaces to execute model training, inference, quantization, and evaluation.

INC Infrastructure & Workflow

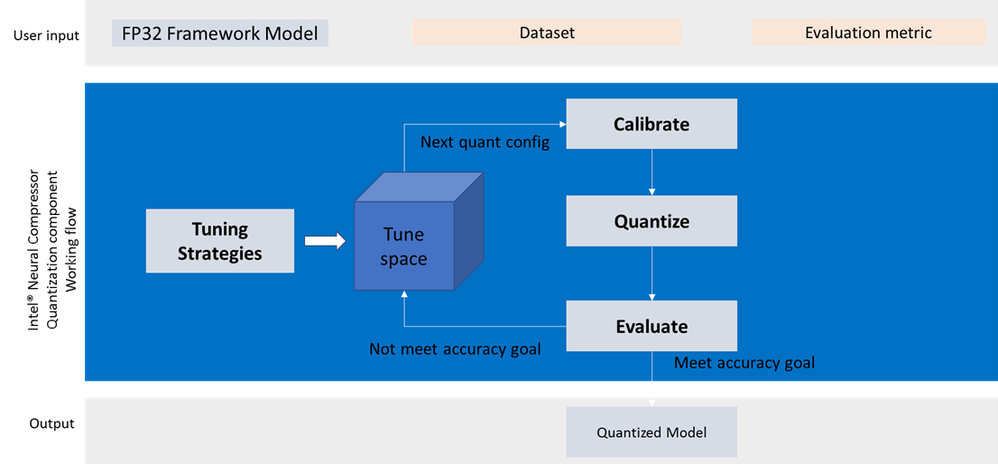

As shown in Figure 3, the user provides an FP32 model and a target accuracy to the tool. Intel® Neural Compressor generates a tuning strategy based on the framework's quantization capabilities and the model information, then picks the quantization scheme that produces the best-performing model that still meets the target accuracy.
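The accuracy-driven loop can be sketched in a few lines. This simplified version evaluates every candidate configuration and keeps the fastest one within a relative accuracy tolerance (1% is assumed here). Intel® Neural Compressor's built-in strategies are more elaborate (for example, falling individual ops back to FP32 and stopping once the goal is met), and the config names and measurements below are hypothetical.

```python
from typing import Callable, Iterable, Optional, Tuple

def tune(
    fp32_accuracy: float,
    candidates: Iterable[str],
    evaluate: Callable[[str], Tuple[float, float]],
    relative_loss: float = 0.01,
) -> Optional[Tuple[str, float, float]]:
    """Return (config, accuracy, latency_ms) for the fastest config within tolerance."""
    target = fp32_accuracy * (1 - relative_loss)  # accuracy floor
    best = None
    for config in candidates:
        accuracy, latency_ms = evaluate(config)  # quantize with `config`, then evaluate
        if accuracy >= target and (best is None or latency_ms < best[2]):
            best = (config, accuracy, latency_ms)
    return best  # None means no config met the accuracy goal

# Hypothetical measurements: config name -> (accuracy, latency in ms)
measured = {
    "int8_all_ops": (0.680, 5.0),
    "int8_fallback_conv1": (0.697, 6.0),
    "int8_fallback_conv1_fc": (0.699, 8.0),
}
best = tune(0.700, measured, lambda cfg: measured[cfg])
print(best)  # ('int8_fallback_conv1', 0.697, 6.0)
```

Here the fully quantized config is fastest but misses the accuracy floor of 0.693, so the loop settles on the faster of the two configs that meet it.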

Quantizing ONNX Models Using Intel® Neural Compressor

In this tutorial, we will show step-by-step how to quantize ONNX models with Intel® Neural Compressor.

Intel® Neural Compressor takes two inputs: an FP32 model and a YAML configuration file. To construct the quantization process, users can specify the following settings either in the YAML file or through the Python APIs:

- Calibration Dataloader (Needed for static quantization)

- Evaluation Dataloader

- Evaluation Metric
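Besides the built-in components, users can supply their own. The sketch below shows the general shape of a dataloader (an iterable of `(inputs, labels)` batches) and a top-k accuracy metric. The `update`/`reset`/`result` method trio mirrors the custom-metric interface described in the Intel® Neural Compressor documentation for its 1.x releases, as far as we know; verify it against the docs for your version. The class names are hypothetical, and nothing here is wired into the library.

```python
import numpy as np

class ArrayDataLoader:
    """Hypothetical dataloader: yields (inputs, labels) batches from in-memory arrays."""

    def __init__(self, inputs, labels, batch_size=1):
        self.inputs = inputs
        self.labels = labels
        self.batch_size = batch_size

    def __iter__(self):
        for start in range(0, len(self.inputs), self.batch_size):
            stop = start + self.batch_size
            yield self.inputs[start:stop], self.labels[start:stop]

class TopKAccuracy:
    """Hypothetical top-k accuracy metric with update/reset/result methods."""

    def __init__(self, k=1):
        self.k = k
        self.reset()

    def reset(self):
        self.correct = 0
        self.total = 0

    def update(self, preds, labels):
        # preds: (batch, num_classes) scores; labels: (batch,) class ids
        topk = np.argsort(preds, axis=1)[:, -self.k:]
        self.correct += int(np.any(topk == labels[:, None], axis=1).sum())
        self.total += len(labels)

    def result(self):
        return self.correct / self.total if self.total else 0.0

def fake_model(batch):
    """Stand-in for the FP32/INT8 model: treats the inputs as class scores."""
    return batch

scores = np.array([[0.1, 0.9], [0.8, 0.2], [0.3, 0.7], [0.6, 0.4]])
labels = np.array([1, 0, 0, 0])
metric = TopKAccuracy(k=1)
for x, y in ArrayDataLoader(scores, labels, batch_size=2):
    metric.update(fake_model(x), y)
print(metric.result())  # 0.75
```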

Below is an example of how to enable Intel® Neural Compressor on MobileNet_v2 using the built-in dataloader, dataset, and metric:

1. Prepare quantization environment

```bash
# bash command
pip install onnx==1.7.0
pip install onnxruntime==1.6.0
pip install neural-compressor
```
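Because the install step pins specific releases, it can help to confirm what is actually installed before running the example (note that the results table below was produced with ONNX Runtime 1.8.0). A minimal sketch using Python's standard `importlib.metadata`; `PINNED` simply mirrors the two pinned versions, and `check_pins` is a hypothetical helper.

```python
from importlib.metadata import PackageNotFoundError, version

# Versions pinned in the install step
PINNED = {"onnx": "1.7.0", "onnxruntime": "1.6.0"}

def check_pins(pins):
    """Return {package: (expected, installed)} for every package that doesn't match."""
    mismatches = {}
    for name, expected in pins.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            installed = None  # not installed at all
        if installed != expected:
            mismatches[name] = (expected, installed)
    return mismatches

print(check_pins(PINNED) or "all pinned versions installed")
```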

2. Prepare a config file (YAML)

```yaml
# conf.yaml
model:
  name: mobilenet_v2
  framework: onnxrt_qlinearops

quantization:
  calibration:
    sampling_size: 100
    dataloader:
      dataset:
        ImagenetRaw:
          data_path: /path/to/calibration/dataset
          image_list: /path/to/calibration/label
      transform:
        ResizeCropImagenet:
          height: 224
          width: 224
          mean_value: [0.485, 0.456, 0.406]

evaluation:
  accuracy:
    metric:
      topk: 1
    dataloader:
      dataset:
        ImagenetRaw:
          data_path: /path/to/evaluation/dataset
          image_list: /path/to/evaluation/label
      transform:
        ResizeCropImagenet:
          height: 224
          width: 224
          mean_value: [0.485, 0.456, 0.406]
```
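The `sampling_size: 100` setting controls how many calibration samples are used for static quantization. A simplified picture of what happens with them: record the min/max of an activation over those samples, then derive a uint8 scale and zero point from that range. Min/max is only one calibration method (entropy- or percentile-based methods also exist), and the function names below are hypothetical, not Intel® Neural Compressor or ONNX Runtime APIs.

```python
import numpy as np

def calibrate_minmax(batches, sampling_size):
    """Track the min/max activation value over the first `sampling_size` samples."""
    lo, hi, seen = np.inf, -np.inf, 0
    for batch in batches:
        take = batch[: sampling_size - seen]  # don't exceed the sample budget
        lo = min(lo, float(take.min()))
        hi = max(hi, float(take.max()))
        seen += len(take)
        if seen >= sampling_size:
            break
    return lo, hi

def uint8_qparams(lo, hi):
    """Asymmetric uint8 scale and zero point; the range is widened to include 0."""
    lo, hi = min(lo, 0.0), max(hi, 0.0)
    scale = (hi - lo) / 255.0 if hi > lo else 1.0
    zero_point = int(round(-lo / scale))
    return scale, zero_point

def quantize(x, scale, zero_point):
    return np.clip(np.round(x / scale) + zero_point, 0, 255).astype(np.uint8)

def dequantize(q, scale, zero_point):
    return (q.astype(np.float64) - zero_point) * scale

rng = np.random.default_rng(0)
batches = [rng.normal(size=(10, 8)) for _ in range(20)]  # 20 batches of 10 samples
batches[15][0, 0] = 1000.0  # outlier beyond the first 100 samples: ignored
lo, hi = calibrate_minmax(batches, sampling_size=100)
scale, zero_point = uint8_qparams(lo, hi)
x = batches[0]
max_err = np.abs(dequantize(quantize(x, scale, zero_point), scale, zero_point) - x).max()
print(hi < 1000.0, max_err <= scale / 2 + 1e-9)  # True True
```

The outlier shows the trade-off behind `sampling_size`: too few samples can miss the real activation range, while a single extreme value that is included would stretch the scale for every other value.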

3. Invoke the Quantization API

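```python
# main.py
import os

import onnx
from neural_compressor.experimental import Quantization, common

# Load the FP32 model
model = onnx.load('./mobilenet_v2.onnx')

# Create the quantizer from the YAML config and attach the model
quantizer = Quantization('./conf.yaml')
quantizer.model = common.Model(model)

# Run accuracy-driven tuning; returns the quantized (INT8) model
q_model = quantizer()

# save() writes a single .onnx file; make sure its directory exists
os.makedirs('./outputs', exist_ok=True)
q_model.save('./outputs/mobilenet_v2_int8.onnx')
```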

Results

Below is the table of quantization results by Intel® Neural Compressor. For the full validated model list, refer to this GitHub page.

| Framework | Version | Model | Accuracy INT8 | Accuracy FP32 | Accuracy Ratio [(INT8-FP32)/FP32] | Throughput INT8 (samples/sec) | Throughput FP32 (samples/sec) | Performance Ratio (INT8/FP32) |
|---|---|---|---|---|---|---|---|---|
| onnxrt | 1.8.0 | alexnet | 54.68% | 54.80% | -0.22% | 1195.53 | 626.44 | 1.91x |
| onnxrt | 1.8.0 | bert_base_mrpc_dynamic | 84.56% | 86.03% | -1.71% | 341.47 | 144.42 | 2.36x |
| onnxrt | 1.8.0 | bert_base_mrpc_static | 85.29% | 86.03% | -0.86% | 683.8 | 294.99 | 2.32x |
| onnxrt | 1.8.0 | bert_squad_model_zoo | 80.43 | 80.67 | -0.29% | 106.91 | 59.97 | 1.78x |
| onnxrt | 1.8.0 | caffenet | 56.22% | 56.27% | -0.09% | 1739.77 | 564.82 | 3.08x |
| onnxrt | 1.8.0 | distilbert_base_mrpc | 84.56% | 84.56% | 0.00% | 1626.07 | 554.5 | 2.93x |
| onnxrt | 1.8.0 | googlenet-12 | 67.73% | 67.78% | -0.07% | 928.78 | 717.07 | 1.30x |
| onnxrt | 1.8.0 | gpt2_lm_head_wikitext_model_zoo | 32.07 | 28.99 | 10.61% | 1.46 | 1.3 | 1.12x |
| onnxrt | 1.8.0 | mobilebert_mrpc | 84.31% | 86.27% | -2.27% | 766.17 | 649.96 | 1.18x |
| onnxrt | 1.8.0 | mobilebert_squad_mlperf | 89.84 | 90.02 | -0.20% | 91.06 | 81.05 | 1.12x |
| onnxrt | 1.8.0 | mobilenet_v2 | 65.19% | 66.92% | -2.59% | 2678.31 | 2807.88 | 0.95x |
| onnxrt | 1.8.0 | mobilenet_v3_mlperf | 75.51% | 75.75% | -0.32% | 2960.51 | 1881.47 | 1.57x |
| onnxrt | 1.8.0 | resnet_v1_5_mlperf | 76.07% | 76.47% | -0.52% | 884.23 | 497.15 | 1.78x |
| onnxrt | 1.8.0 | resnet50_v1_5 | 72% | 72% | 0% | 855.49 | 493.39 | 1.73x |
| onnxrt | 1.8.0 | resnet50-v1-12 | 74.83% | 74.97% | -0.19% | 1008.72 | 520.8 | 1.94x |
| onnxrt | 1.8.0 | roberta_base_mrpc | 88.24% | 89.46% | -1.36% | 724.68 | 284.01 | 2.55x |
| onnxrt | 1.8.0 | shufflenet-v2-12 | 66.15% | 66.35% | -0.30% | 4502.48 | 2721.01 | 1.65x |
| onnxrt | 1.8.0 | squeezenet | 56.48% | 56.85% | -0.65% | 5008.01 | 3629.11 | 1.38x |
| onnxrt | 1.8.0 | ssd_mobilenet_v1 | 22.47% | 23.10% | -2.73% | 730.17 | 627.5 | 1.16x |
| onnxrt | 1.8.0 | ssd_mobilenet_v2 | 23.90% | 24.68% | -3.16% | 558.03 | 446.69 | 1.25x |
| onnxrt | 1.8.0 | vgg16 | 66.55% | 66.68% | -0.19% | 145.1 | 122.7 | 1.18x |
| onnxrt | 1.8.0 | vgg16_model_zoo | 72.32% | 72.38% | -0.08% | 253.32 | 121.09 | 2.09x |
| onnxrt | 1.8.0 | zfnet | 55.84% | 55.97% | -0.23% | 536.71 | 336.96 | 1.59x |

Throughput was measured on ICX8380 with the 1s4c10ins1bs setting (1 socket, 4 cores per instance, 10 instances, batch size 1). Accuracy values without a % sign are raw task scores rather than percentages.
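The two ratio columns can be reproduced from the raw numbers in the table: Accuracy Ratio is (INT8 - FP32) / FP32, and Performance Ratio is INT8 throughput divided by FP32 throughput. The sketch below also includes a simple single-process throughput timer; `measure_throughput` is a hypothetical helper, and because the table's setting runs several concurrent instances, a loop like this is not expected to reproduce those absolute numbers.

```python
import time

def accuracy_ratio(int8_acc, fp32_acc):
    """Relative accuracy change, (INT8 - FP32) / FP32."""
    return (int8_acc - fp32_acc) / fp32_acc

def speedup(int8_throughput, fp32_throughput):
    """Performance ratio, INT8 throughput / FP32 throughput."""
    return int8_throughput / fp32_throughput

def measure_throughput(run_once, num_samples, warmup=10):
    """Samples/sec for a batch-size-1 callable, after a few warm-up runs."""
    for _ in range(warmup):
        run_once()
    start = time.perf_counter()
    for _ in range(num_samples):
        run_once()
    elapsed = time.perf_counter() - start
    return num_samples / elapsed

# alexnet row from the table
print(f"{accuracy_ratio(54.68, 54.80):.2%}")  # -0.22%
print(f"{speedup(1195.53, 626.44):.2f}x")     # 1.91x
# mobilenet_v2 row: INT8 is slightly slower than FP32
print(f"{speedup(2678.31, 2807.88):.2f}x")    # 0.95x
```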

Conclusion

By leveraging Intel® Neural Compressor, we kept the relative accuracy loss below 1% for 16 of the 23 models listed above, and the INT8 models ran faster than their FP32 counterparts for all but one (mobilenet_v2, 0.95x), with speedups of up to 3.08x. We continue to expand the scope of quantized models and to contribute to the ONNX model zoo.

Please send your pull requests for review if you have improvements to Intel® Neural Compressor. If you have any suggestions or questions, please contact inc.maintainers@intel.com.

Notices & Disclaimers

Performance varies by use, configuration and other factors. Learn more at www.Intel.com/PerformanceIndex.

Performance results are based on testing as of dates shown in configurations and may not reflect all publicly available updates. See backup for configuration details. No product or component can be absolutely secure.

Your costs and results may vary.

Intel technologies may require enabled hardware, software or service activation.

© Intel Corporation. Intel, the Intel logo, and other Intel marks are trademarks of Intel Corporation or its subsidiaries. Other names and brands may be claimed as the property of others.
