Hi,

What is the best way to copy ~40 GB of data stored on a local drive to the Jupyter notebook environment (https://notebooks.edge.devcloud.intel.com/)? When I tried the GUI file upload option, I got a sync error. Is this because we are not meant to copy such a large dataset, or do I need to use an alternative method?

I tried cloning a data repository from Intel's GitHub, but there seems to be an IP address restriction that prevents me from doing that.

I also tried to use a public GitHub repository, but then I hit the 500 MB data storage limit on GitHub.

I am also wondering whether it is possible at all to use the Intel DevCloud Jupyter environment to analyze such large datasets. Any thoughts?

Any suggestions will be much appreciated.

Regards,

Cagri

Hi Cagri_T_Intel

There are a few ways to upload files to Intel® DevCloud for the Edge.

- Click the upload button in the Jupyter notebook. To upload a large file, my advice is to split it into smaller parts (preferably less than 500 MB each).

- For a GitHub/git repository, it is advisable to set the repository to public and make sure it does not contain any sensitive information.

- Use a download tool such as wget. If a large file causes connection issues, you can upload it to a host in smaller parts and download those parts with wget.
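As an illustration of the splitting advice above, here is a minimal Python sketch that cuts one large file into numbered parts and joins them again after transfer. The 400 MB part size, the `.partNNNN` naming, and the function names are assumptions chosen for this example, not anything DevCloud prescribes.

```python
from pathlib import Path

CHUNK_BYTES = 400 * 1024 * 1024  # assumed part size, below the 500 MB suggested above
BUFFER_BYTES = 1024 * 1024       # copy in 1 MiB pieces to keep memory use low


def split_file(src, out_dir, chunk_bytes=CHUNK_BYTES):
    """Split src into numbered parts of at most chunk_bytes; return the part paths."""
    src, out_dir = Path(src), Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    parts = []
    with src.open("rb") as fin:
        index = 0
        while True:
            part_path = out_dir / f"{src.name}.part{index:04d}"
            written = 0
            with part_path.open("wb") as fout:
                while written < chunk_bytes:
                    data = fin.read(min(BUFFER_BYTES, chunk_bytes - written))
                    if not data:
                        break
                    fout.write(data)
                    written += len(data)
            if written == 0:
                part_path.unlink()  # nothing left to write; drop the empty part
                break
            parts.append(part_path)
            index += 1
    return parts


def join_files(parts, dest):
    """Concatenate the parts, in sorted name order, into dest."""
    with Path(dest).open("wb") as fout:
        for part in sorted(Path(p) for p in parts):
            with part.open("rb") as fin:
                while True:
                    data = fin.read(BUFFER_BYTES)
                    if not data:
                        break
                    fout.write(data)
```

You would run `split_file` on the local machine, transfer the parts, and run `join_files` on the notebook side. The zero-padded index keeps the parts in the right order when sorted by name.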

I hope this information helps.

Thank You

Hi Hari,

Thank you so much for your suggestions.

I have tried them all. My observations are below, and I hope they will help you help me with this:

1 - Click the upload button in the Jupyter notebook. To upload a large file, my advice is to split it into smaller parts (preferably less than 500 MB each).

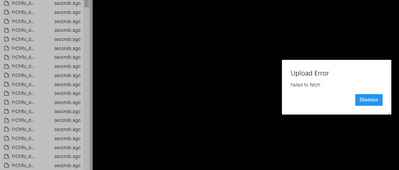

I tried this. Each file was under 600 kB in size and I have 13,000 such files to upload. After copying 8804 files (in no particular order), I get the following error.

I thought of copying all the source files again, but the Jupyter interface asks whether to overwrite each file one by one (with no option for bulk confirmation!), so this is not practical at all.
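One way around the per-file overwrite prompt is to re-upload only the files that are missing or incomplete. The Python sketch below assumes a flat directory of files and a manifest of names and sizes built on the local machine and carried over to the notebook (for example as a small JSON file). The function names and the manifest format are hypothetical.

```python
from pathlib import Path


def build_manifest(local_dir):
    """Map each file name in local_dir to its size in bytes (run locally)."""
    return {p.name: p.stat().st_size
            for p in Path(local_dir).iterdir() if p.is_file()}


def files_to_reupload(manifest, uploaded_dir):
    """Return names that are absent from uploaded_dir or differ in size (run on the notebook side)."""
    uploaded_dir = Path(uploaded_dir)
    todo = []
    for name, size in manifest.items():
        path = uploaded_dir / name
        # A size mismatch is treated as a truncated upload.
        if not path.is_file() or path.stat().st_size != size:
            todo.append(name)
    return sorted(todo)
```

Comparing sizes catches partially written files, which a check on names alone would miss. It does not verify content; a checksum per file would be needed for that.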

2 - For a GitHub/git repository, it is advisable to set the repository to public and make sure it does not contain any sensitive information.

Below is the repository I created on GitHub for this file upload. It is marked "Internal", not "Private", and sits under the internal organization. (I also tried to create a public GitHub repository using my personal account, but as I described in my first message, even with a paid Pro account I will not be able to store more than 2 GB of data, which is not going to solve my problem.)

<Removed Internal Information>

I would expect the internal sandbox to talk easily to anything in Intel DevCloud, but that does not seem to be the case. Based on what I have been seeing, there seem to be firewalls between Intel DevCloud and the internal repository, which makes no sense to me.

<Removed Internal Information>

3 - Use a download tool such as wget. If a large file causes connection issues, you can upload it to a host in smaller parts and download those parts with wget.

My understanding is that I cannot use wget to upload directly from my local hard drive. As I understand it, this command fetches data stored on the web (i.e. I need to specify HTTP links to where the data is). I tried this method with public storage such as Google Drive, but the share links did not work for some reason. Can you suggest a cloud storage service that works with wget AND allows me to store ~40 GB?
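To make the pull model concrete: a tool like wget runs on the notebook side and fetches from a URL, so the data must already sit behind a direct-download link, meaning one that returns the file bytes rather than an HTML page. The Python sketch below does the same with the standard library. The function name is hypothetical, and any URL passed to it is a placeholder for wherever the data is actually hosted.

```python
import shutil
import urllib.parse
import urllib.request
from pathlib import Path


def download(url, dest_dir):
    """Stream the resource at url into dest_dir; return the local path."""
    dest_dir = Path(dest_dir)
    dest_dir.mkdir(parents=True, exist_ok=True)
    # Derive the local file name from the last segment of the URL path.
    url_path = urllib.parse.urlparse(url).path
    name = Path(urllib.parse.unquote(url_path)).name
    dest = dest_dir / name
    with urllib.request.urlopen(url) as response, dest.open("wb") as fout:
        shutil.copyfileobj(response, fout)  # copies in blocks, not all at once
    return dest
```

Calling `download` once per part URL keeps each transfer small, so a dropped connection costs one part rather than the whole dataset.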

Thank you once again for this, Hari. I hope you will be able to offer further suggestions or guidance, as I am running out of ideas and will probably end up looking for solutions outside Intel DevCloud altogether.

Regards,

Cagri

Hi Cagri_T_Intel

After checking with the developer, it is best to compress the large model into a single file and upload the compressed file.
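For reference, packing a directory of many small files into one compressed archive can be sketched in Python as below. The function names and the `.tar.gz` format are assumptions for this example. The thread does not confirm whether a single archive of this size uploads successfully.

```python
import tarfile
from pathlib import Path


def pack_directory(src_dir, archive_path):
    """Write src_dir and its contents into a gzip-compressed tar archive."""
    src_dir = Path(src_dir)
    with tarfile.open(archive_path, "w:gz") as tar:
        # arcname keeps only the directory name, not the full local path.
        tar.add(src_dir, arcname=src_dir.name)
    return Path(archive_path)


def unpack_archive(archive_path, dest_dir):
    """Extract a gzip-compressed tar archive into dest_dir."""
    with tarfile.open(archive_path, "r:gz") as tar:
        tar.extractall(dest_dir)
```

`pack_directory` would run on the local machine and `unpack_archive` on the notebook side after the upload. One archive replaces thousands of individual uploads, so the per-file overwrite prompt no longer comes up.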

If you are still facing any issues, please tell us how long the upload took to fail and provide a screenshot of the error message.

I hope this information helps.

Thank you

Hi Cagri_T_Intel

This thread will no longer be monitored since we have provided a solution. Please submit a new question if you need any additional information from Intel.

Thank you
