Hi,

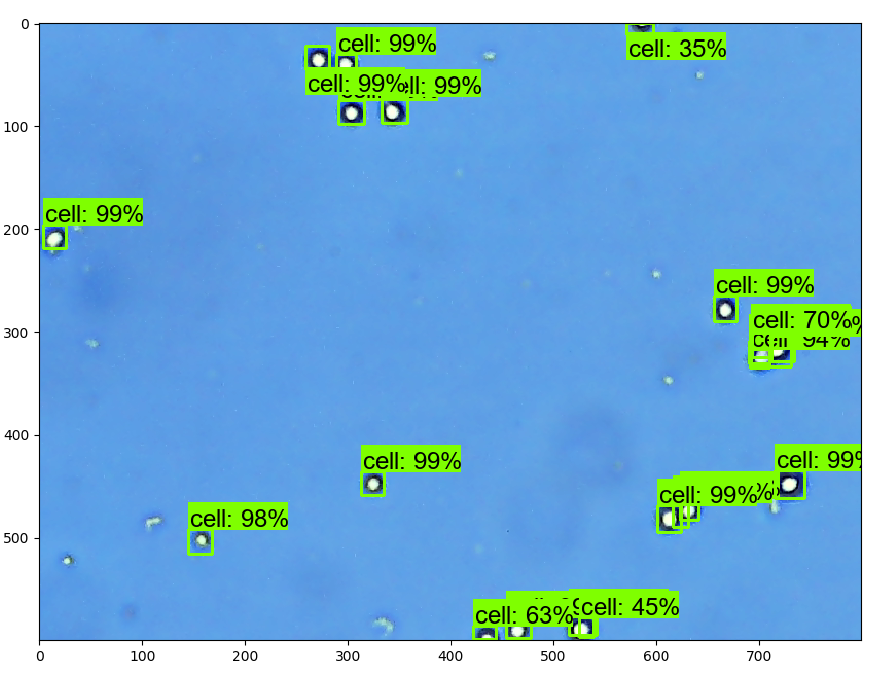

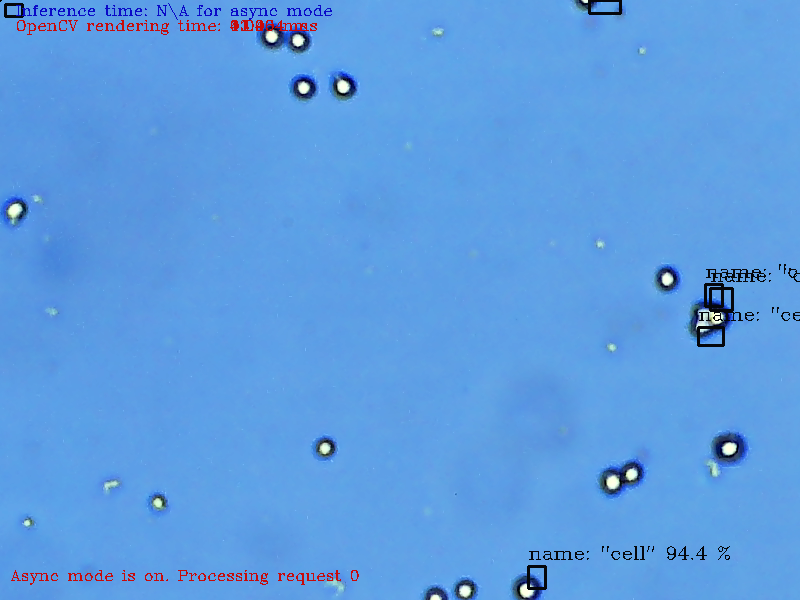

I trained a Faster R-CNN model to detect cells in images using the TensorFlow Object Detection API, and it performs quite well.

Then I converted frozen_inference_graph.pb to frozen_inference_graph.xml using Model Optimizer:

python "C:\\Program Files (x86)\\IntelSWTools\\openvino_2020.1.033\\deployment_tools\\model_optimizer\\mo_tf.py" --input_model "F:\\data\\cellcounter\\FRCNN\\crop\\training\\frozen_inference_graph.pb" --input_shape [1,600,800,3] --tensorflow_object_detection_api_pipeline_config "F:\\data\cellcounter\\FRCNN\\crop\\training\\pipeline.config" --tensorflow_use_custom_operations_config "C:\\Program Files (x86)\\IntelSWTools\\openvino_2020.1.033\\deployment_tools\\model_optimizer\\extensions\\front\\tf\\faster_rcnn_support_api_v1.14.json" --output_dir="F:\data\cellcounter\FRCNN\crop"

However, the detection results were really bad.

Does anyone know why? I'd appreciate any help.


Hi Yangzhen Wong,

We suspect the drop in detection quality comes from the conversion from TensorFlow to Intermediate Representation (IR).

I would suggest adding the --reverse_input_channels parameter to your Model Optimizer conversion command.
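For context: OpenCV's `cv2.imread` returns pixels in BGR order, while TensorFlow Object Detection API models are normally trained on RGB images, and `--reverse_input_channels` makes the converted IR account for that difference. If re-running Model Optimizer is inconvenient, a rough alternative is to reverse the channel axis during preprocessing. The sketch below assumes the frame has already been resized to the network's input height and width; `bgr_hwc_to_rgb_nchw` is a hypothetical helper name, not part of OpenVINO.

```python
import numpy as np


def bgr_hwc_to_rgb_nchw(frame_bgr):
    """Convert one BGR image (H, W, 3) into an RGB batch of shape (1, 3, H, W).

    Hypothetical helper for illustration; assumes the image is already
    resized to the network input size.
    """
    if frame_bgr.ndim != 3 or frame_bgr.shape[2] != 3:
        raise ValueError("expected an HWC image with 3 channels")
    rgb = frame_bgr[:, :, ::-1]        # reverse the channel axis: BGR -> RGB
    chw = rgb.transpose((2, 0, 1))     # change layout from HWC to CHW
    # Add the batch dimension and make the memory contiguous.
    return np.ascontiguousarray(chw[np.newaxis, ...])


# Tiny synthetic image: every pixel is pure blue in BGR order (B=255, G=0, R=0).
frame = np.zeros((2, 4, 3), dtype=np.uint8)
frame[:, :, 0] = 255
blob = bgr_hwc_to_rgb_nchw(frame)
print(blob.shape)
```

In the inference script from this thread, such a helper would take the place of the `transpose`/`reshape` pair applied to `in_frame`. Use either this or `--reverse_input_channels`, not both, otherwise the channels are swapped back to BGR.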

More information is available at the following pages :

Also, I would encourage you to try out Intel® Distribution of OpenVINO™ Toolkit version 2020.3 LTS, which is a vastly improved version with latest features and leading performance.

Regards,

Munesh


from __future__ import print_function
import sys
import os
from argparse import ArgumentParser, SUPPRESS
import cv2
import time
import logging as log
from openvino.inference_engine import IENetwork, IECore

def build_argparser():
    parser = ArgumentParser(add_help=False)
    args = parser.add_argument_group('Options')
    args.add_argument('-h', '--help', action='help', default=SUPPRESS, help='Show this help message and exit.')
    args.add_argument("-m", "--model", help="Required. Path to an .xml file with a trained model.",
                      default='F:\\data\\cellcounter\\FRCNN\\crop\\frozen_inference_graph.xml', type=str)
    args.add_argument("-i", "--input",
                      help="Required. Path to video file or image. 'cam' for capturing video stream from camera",
                      default='F:\\data\\cellcounter\\FRCNN\\crop\\labeled\\test\\191218-c2 (11).png', type=str)
    args.add_argument("-l", "--cpu_extension",
                      help="Optional. Required for CPU custom layers. Absolute path to a shared library with the "
                           "kernels implementations.", type=str, default=None)
    args.add_argument("-d", "--device",
                      help="Optional. Specify the target device to infer on; CPU, GPU, FPGA, HDDL or MYRIAD is "
                           "acceptable. The demo will look for a suitable plugin for device specified. "
                           "Default value is CPU", default="CPU", type=str)
    args.add_argument("--labels", help="Optional. Path to labels mapping file", default='F:\\data\\cellcounter\\FRCNN\\crop\\label_map.pbtxt', type=str)
    args.add_argument("-pt", "--prob_threshold", help="Optional. Probability threshold for detections filtering",
                      default=0.1, type=float)
    args.add_argument("--no_show", help="Optional. Don't show output", action='store_true')
    return parser

log.basicConfig(format="[ %(levelname)s ] %(message)s", level=log.INFO, stream=sys.stdout)
args = build_argparser().parse_args()
model_xml = args.model
model_bin = os.path.splitext(model_xml)[0] + ".bin"

log.info("Creating Inference Engine...")
ie = IECore()
if args.cpu_extension and 'CPU' in args.device:
    ie.add_extension(args.cpu_extension, "CPU")

# Read IR
log.info("Loading network files:\n\t{}\n\t{}".format(model_xml, model_bin))
net = IENetwork(model=model_xml, weights=model_bin)

if "CPU" in args.device:
    supported_layers = ie.query_network(net, "CPU")
    not_supported_layers = [l for l in net.layers.keys() if l not in supported_layers]
    if len(not_supported_layers) != 0:
        log.error("Following layers are not supported by the plugin for specified device {}:\n {}".
                  format(args.device, ', '.join(not_supported_layers)))
        log.error("Please try to specify cpu extensions library path in sample's command line parameters using -l "
                  "or --cpu_extension command line argument")
        sys.exit(1)

img_info_input_blob = None
feed_dict = {}
for blob_name in net.inputs:
    if len(net.inputs[blob_name].shape) == 4:
        input_blob = blob_name
    elif len(net.inputs[blob_name].shape) == 2:
        img_info_input_blob = blob_name
    else:
        raise RuntimeError("Unsupported {}D input layer '{}'. Only 2D and 4D input layers are supported"
                           .format(len(net.inputs[blob_name].shape), blob_name))

assert len(net.outputs) == 1, "Demo supports only single output topologies"
out_blob = next(iter(net.outputs))

log.info("Loading IR to the plugin...")
exec_net = ie.load_network(network=net, num_requests=2, device_name=args.device)

# Read and pre-process input image
n, c, h, w = net.inputs[input_blob].shape
if img_info_input_blob:
    feed_dict[img_info_input_blob] = [h, w, 1]

if args.labels:
    with open(args.labels, 'r') as f:
        labels_map = [x.strip() for x in f]
else:
    labels_map = None

cur_request_id = 0
is_async_mode = False
render_time = 0

frame = cv2.imread(args.input)
frame_h, frame_w = frame.shape[:2]

inf_start = time.time()
in_frame = cv2.resize(frame, (w, h))
in_frame = in_frame.transpose((2, 0, 1))  # Change data layout from HWC to CHW
in_frame = in_frame.reshape((n, c, h, w))
feed_dict[input_blob] = in_frame

exec_net.start_async(request_id=cur_request_id, inputs=feed_dict)
if exec_net.requests[cur_request_id].wait(-1) == 0:
    inf_end = time.time()
    det_time = inf_end - inf_start

    # Parse detection results of the current request
    res = exec_net.requests[cur_request_id].outputs[out_blob]
    for obj in res[0][0]:
        # Draw only objects when probability more than specified threshold
        if obj[2] > args.prob_threshold:
            xmin = int(obj[3] * frame_w)
            ymin = int(obj[4] * frame_h)
            xmax = int(obj[5] * frame_w)
            ymax = int(obj[6] * frame_h)
            print(xmax, xmin, ymax, ymin)
            class_id = int(obj[1])
            # Draw box and label\class_id
            color = (min(class_id * 12.5, 255), min(class_id * 7, 255), min(class_id * 5, 255))
            cv2.rectangle(frame, (xmin, ymin), (xmax, ymax), color, 2)
            det_label = labels_map[class_id] if labels_map else str(class_id)
            cv2.putText(frame, det_label + ' ' + str(round(obj[2] * 100, 1)) + ' %', (xmin, ymin - 7),
                        cv2.FONT_HERSHEY_COMPLEX, 0.6, color, 1)

    # Draw performance stats
    inf_time_message = "Inference time: N/A for async mode" if is_async_mode else \
        "Inference time: {:.3f} ms".format(det_time * 1000)
    render_time_message = "OpenCV rendering time: {:.3f} ms".format(render_time * 1000)
    async_mode_message = "Async mode is on. Processing request {}".format(cur_request_id) if is_async_mode else \
        "Async mode is off. Processing request {}".format(cur_request_id)

    cv2.putText(frame, inf_time_message, (15, 15), cv2.FONT_HERSHEY_COMPLEX, 0.5, (200, 10, 10), 1)
    cv2.putText(frame, render_time_message, (15, 30), cv2.FONT_HERSHEY_COMPLEX, 0.5, (10, 10, 200), 1)
    cv2.putText(frame, async_mode_message, (10, int(frame_h - 20)), cv2.FONT_HERSHEY_COMPLEX, 0.5,
                (10, 10, 200), 1)

render_start = time.time()
cv2.imshow("Detection Results", frame)
render_end = time.time()
render_time = render_end - render_start
cv2.waitKey(1)
