For our GNA porting task, we have ported our net to GNA hardware and confirmed that it can run inference on GNA.

Now we are trying to evaluate its accuracy by comparing OpenVINO GNA inference with PyTorch inference.

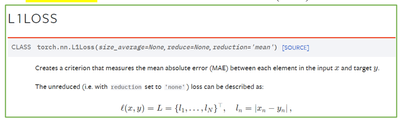

Two metrics, ‘L1’ and ‘Cosine similarity’, are used for the evaluation.

#{Metric}

The first metric, ‘L1’, measures the mean absolute error (MAE) between two vectors.

(https://pytorch.org/docs/stable/generated/torch.nn.L1Loss.html)

Since PyTorch inference runs in 32-bit floating point while GNA inference uses a mix of 8-bit, 16-bit and 32-bit integer computations, we don’t know what L1 loss to expect in the best case.

For this reason, we also check the vector similarity with the second metric, ‘Cosine similarity’.

(https://pytorch.org/docs/stable/generated/torch.nn.CosineSimilarity.html)
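As a rough illustration of the two metrics, here is a minimal NumPy sketch. It is meant to mirror the idea behind `torch.nn.L1Loss` (mean reduction) and `torch.nn.CosineSimilarity`, not to reproduce the scripts in this thread; the arrays `ref` and `out` are made-up values.

```python
import numpy as np

def l1_loss(a, b):
    """Mean absolute error between two arrays of the same shape."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    return float(np.mean(np.abs(a - b)))

def cosine_similarity(a, b, eps=1e-8):
    """Cosine of the angle between two flattened arrays."""
    a = np.asarray(a, dtype=np.float64).ravel()
    b = np.asarray(b, dtype=np.float64).ravel()
    # eps guards against division by zero for all-zero vectors
    denom = max(np.linalg.norm(a) * np.linalg.norm(b), eps)
    return float(np.dot(a, b) / denom)

# Hypothetical outputs: a float reference and a slightly perturbed copy
ref = np.array([0.10, -0.20, 0.30, 0.40])
out = np.array([0.11, -0.19, 0.29, 0.41])

print("L1 loss:", l1_loss(ref, out))
print("Cosine similarity:", cosine_similarity(ref, out))
```

L1 depends on the magnitude of the values being compared, while cosine similarity only depends on the direction of the two vectors.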

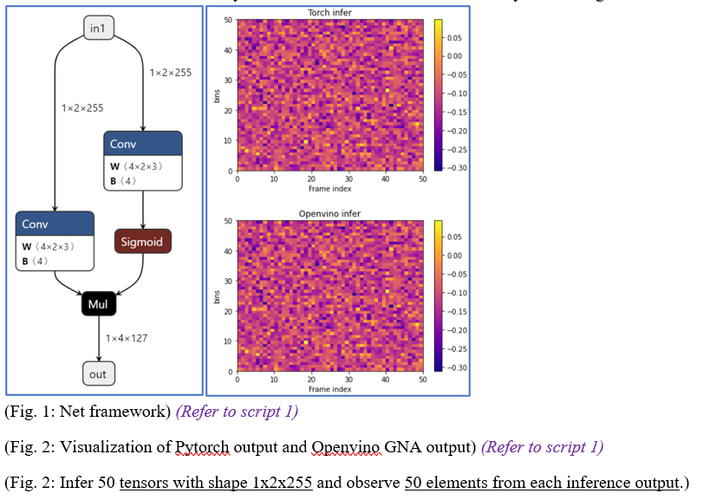

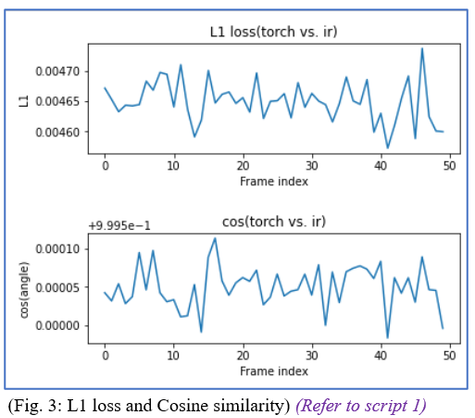

#{Experiment 1}

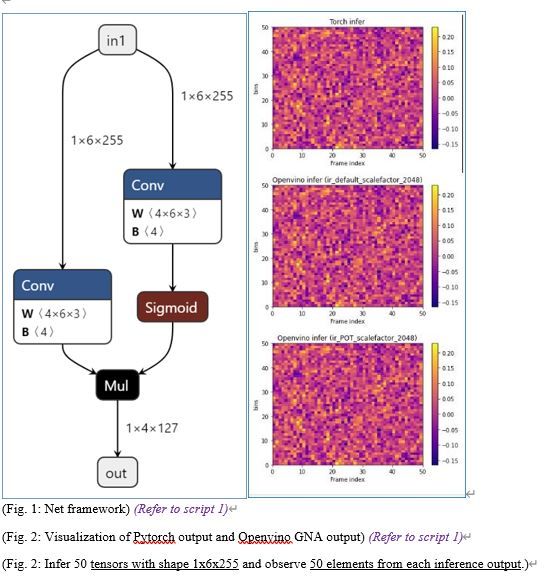

In the 1st experiment, we use these two metrics to evaluate one operation of our net, ‘convolution’, comparing OpenVINO GNA inference with PyTorch inference.

The L1 loss is on the order of 10^(-3) (n × 10^(-3)). (Refer to Fig.1)

For L1 loss, this level may be the best achievable.

The cosine similarity is about 0.995. (Refer to Fig.3)

This level suggests that OpenVINO GNA inference is similar to PyTorch inference.

The similarity can also be seen in Fig.2.

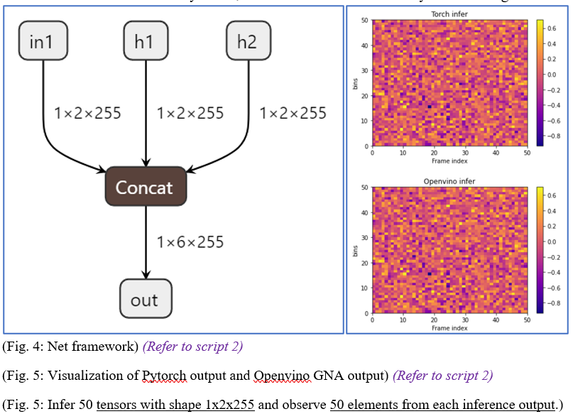

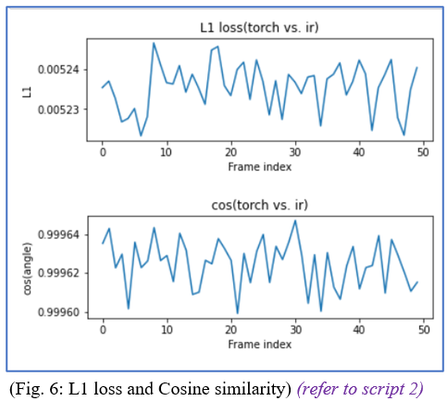

#{Experiment 2}

In the 2nd experiment, we use these two metrics to evaluate one operation of our net, ‘concatenation’, comparing OpenVINO GNA inference with PyTorch inference.

The L1 loss is on the order of 10^(-3) (n × 10^(-3)). (Refer to Fig.6)

For L1 loss, this level may be the best achievable.

The cosine similarity is about 0.999. (Refer to Fig.6)

This level suggests that OpenVINO GNA inference is similar to PyTorch inference.

The similarity can also be seen in Fig.5.

#{Experiment 3}

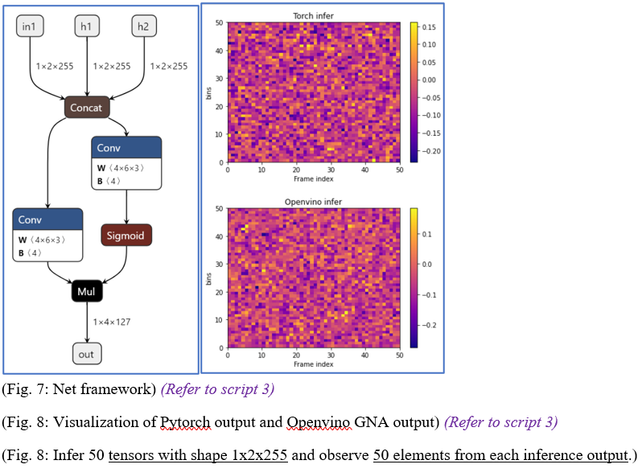

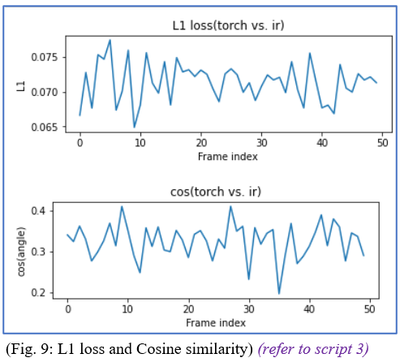

In the 3rd experiment, we use these two metrics to evaluate the combined operation ‘concatenation & convolution’ of our net, comparing OpenVINO GNA inference with PyTorch inference.

The L1 loss is on the order of 10^(-2) (n × 10^(-2)). (Refer to Fig.9)

For L1 loss, this level may be the best achievable.

The cosine similarity is about 0.3. (Refer to Fig.9)

This level suggests that OpenVINO GNA inference is dissimilar to PyTorch inference.

The dissimilarity can also be seen in Fig.8.

From experiments 1 and 2, we find that ‘net1’ (convolution) and ‘net2’ (concatenation) both have good cosine similarity.

Since the cosine similarity is close to 1, we conclude that GNA inference is similar to PyTorch inference for these nets.

From experiment 3, we find that ‘net3’ (concatenation & convolution) has poor cosine similarity.

Since the cosine similarity is not close to 1, we conclude that GNA inference is dissimilar to PyTorch inference for this net.
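To see how an L1 loss on the order of 10^(-2) can coexist with a cosine similarity near 0.3, consider the small synthetic sketch below. The vectors are invented for illustration and are not taken from the experiment data; the point is only that L1 scales with the magnitude of the outputs, while cosine similarity does not.

```python
import numpy as np

def l1_loss(a, b):
    """Mean absolute error."""
    return float(np.mean(np.abs(a - b)))

def cosine_similarity(a, b):
    """Cosine of the angle between two 1-D vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

# Synthetic example: a small-magnitude reference plus an error that is
# three times larger than the signal and orthogonal to it.
ref = 0.01 * np.array([1.0, 1.0, 1.0, 1.0])
err = 0.03 * np.array([1.0, -1.0, 1.0, -1.0])
out = ref + err

for scale in (1.0, 100.0):
    r, o = scale * ref, scale * out
    relative_l1 = l1_loss(r, o) / float(np.mean(np.abs(r)))
    print(f"scale={scale:>5}: L1={l1_loss(r, o):.4f}  "
          f"relative L1={relative_l1:.2f}  cos={cosine_similarity(r, o):.4f}")
```

In this toy case the absolute L1 looks small only because the reference values themselves are small. Reporting L1 relative to the mean magnitude of the reference output makes such cases easier to spot.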

Our 1st question: we are not sure whether our interpretation of experiment 3 is correct.

If it is correct, we suspect that GNA inference of ‘net3’ is incorrect.

Our 2nd question: is there any way to improve the cosine similarity?

Our 3rd question: since the difference between GNA inference (int16) and PyTorch inference (float32) may come from either quantization or GNA bugs, is there a better way to evaluate the correctness of GNA inference?

You can find our experiment data at the link below:

script 1: link /20220824_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST1_NNExport_IR_Conv_ScaleFactor2048_GNA_L1_eN3.html

Hi tea6329714,

Thanks for reaching out.

We are still checking on this. For now, I am able to run the experiments and obtain results similar to yours.

In the meantime, could you please share the following information with us?

· Operating system

· Hardware specifications

Regards,

Peh

Hi Peh,

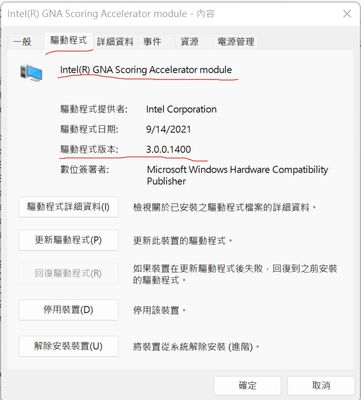

Here is my computer's information:

- Operating system: Windows 11 Enterprise

- Hardware information: CPU & GNA

Hi tea6329714,

Sorry for my late response.

We have replicated your experiments and noticed that Experiment #1 may have a different input shape from Experiment #3. Our findings indicate that the different convolution inputs may contribute to the results you are obtaining.

Could you please try repeating Experiment #1 with similar 1x6x255 inputs and 1x4x127 output, then observe the cosine similarity and share it with us? The number of output channels for convolutions must be a multiple of 4. You can refer to GNA Device for more information.
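As a quick sanity check on these shapes, the standard 1-D convolution output-length formula can be sketched as follows. The stride of 2 and zero padding are assumptions chosen because they map a length of 255 to 127 with a kernel of 3; the thread does not state them. The function names are hypothetical helpers, not part of OpenVINO.

```python
def conv1d_output_length(length, kernel, stride=1, padding=0, dilation=1):
    """Output length of a 1-D convolution (same formula PyTorch documents for Conv1d)."""
    return (length + 2 * padding - dilation * (kernel - 1) - 1) // stride + 1

def is_multiple_of_4(channels):
    """Check the output-channel constraint mentioned in the reply above."""
    return channels % 4 == 0

# Assumed configuration: kernel 3, stride 2, no padding
print(conv1d_output_length(255, kernel=3, stride=2))  # 127
print(conv1d_output_length(255, kernel=3, stride=1))  # 253
print(is_multiple_of_4(4))                            # True
```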

Due to the specifics of the hardware architecture, Intel GNA supports a limited set of operations, their kinds, and combinations. It can therefore be expected that GNA inference and PyTorch inference may not produce closely matching outputs. More information is available in Supported Devices.

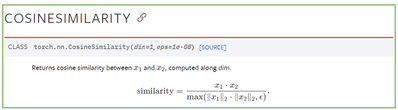

Besides, we encourage you to use the Post-training Optimization Tool (POT) to get a model with quantization hints based on statistics for the provided dataset.

Regards,

Peh

Hi Peh,

Sorry for my late response.

I have had little time for experiments because of my job.

The links below include the experiment "Experiment #1 with similar 1x6x255 inputs and 1x4x127 output" and the POT experiments.

However, the data at the links only includes the source code and the execution results.

I will share my thoughts, observations and questions in 2 days.

You can find our experiment data at the links below:

script 1A: "Experiment #1 with similar 1x6x255 inputs and 1x4x127 output"

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST1B_NNExport_IR_Conv_CIn6_Cout4_ScaleFactor2048_GNA_L1_eN4.html

script 1B: "Experiment #1 with similar 1x6x255 inputs and 1x4x127 output" with POT

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST1B_NNExport_IR_Conv_CIn6_Cout4_ScaleFactor2048_GNA_L1_eN4.html

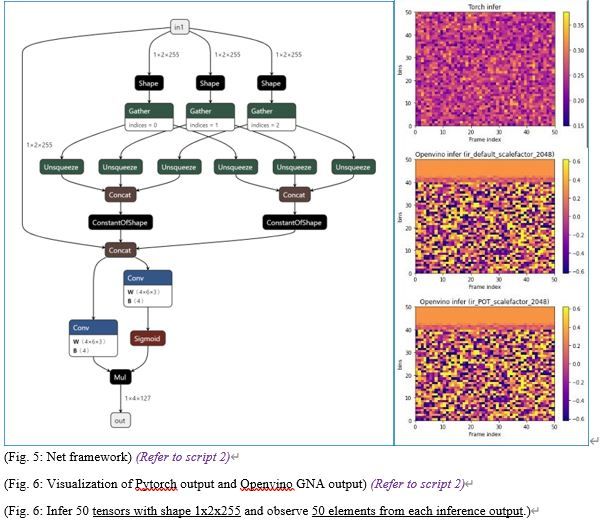

script 2A: "Concatenation & convolution"

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST5_NNExport_IR_SelfCatConv_ScaleFactor2048_CPU_L1_eN1.html

script 2B: "Concatenation & convolution" with POT

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST5B_POT_IR_SelfCatConv_ScaleFactor2048_GNA_L1_eN1.html

The 4 experiments below can help us clarify the causes of the drop in cosine similarity.

The aim of experiments 1 and 2 is to check whether the issue comes from the convolution having 6 input channels.

The aim of experiments 3 and 4 is to check whether the issue comes from quantization during the precision conversion (float32 to 16-bit and 8-bit integer).

#___________________________________________________________________________________

- Experiment 1. Observation of Net 1

- Convolution (CIn:6, Cout:4, kernel:3) : input tensor:1x6x255 → output tensor:1x4x127

- Experiment 2. Observation of Net 2

- Concatenation : input tensor:1x2x255 → output tensor:1x6x255

- Convolution (CIn:6, Cout:4, kernel:3) : input tensor:1x6x255 → output tensor:1x4x127

- Experiment 3. Observation of Net 1 with POT

- Experiment 4. Observation of Net 2 with POT

#___________________________________________________________________________________
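For reference, the shapes of Net 2 can be reproduced with a small float NumPy sketch. It assumes that the concatenation stacks the same 1x2x255 tensor three times along the channel axis (suggested by the shapes and by the script name "SelfCatConv", but not confirmed in the thread) and that the convolution uses stride 2 with no padding and no bias. The weights are random placeholders, not the weights of the actual net.

```python
import numpy as np

def conv1d(x, weight, stride=1):
    """Unpadded 1-D cross-correlation.

    x: (N, C_in, L), weight: (C_out, C_in, K) -> (N, C_out, L_out)
    """
    n, c_in, length = x.shape
    c_out, c_in_w, k = weight.shape
    assert c_in == c_in_w, "input channels must match the weight shape"
    out_len = (length - k) // stride + 1
    out = np.zeros((n, c_out, out_len), dtype=x.dtype)
    for t in range(out_len):
        window = x[:, :, t * stride : t * stride + k]  # (N, C_in, K)
        out[:, :, t] = np.einsum("nck,ock->no", window, weight)
    return out

rng = np.random.default_rng(0)
x = rng.standard_normal((1, 2, 255)).astype(np.float32)  # placeholder input
w = rng.standard_normal((4, 6, 3)).astype(np.float32)    # placeholder weights

cat = np.concatenate([x, x, x], axis=1)  # assumed self-concatenation: (1, 6, 255)
y = conv1d(cat, w, stride=2)             # (1, 4, 127)
print(cat.shape, y.shape)
```

A float reference like this can be compared layer by layer against the GNA output, which may help to locate the operation at which the similarity drops.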

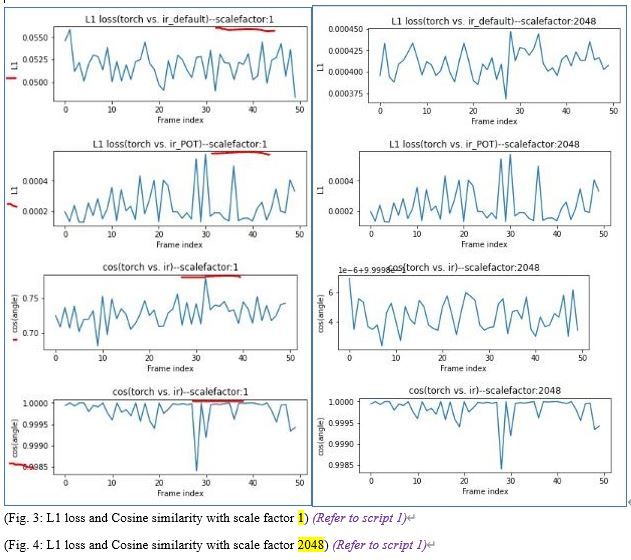

#{Experiment 1 & 3}

>> (Default net) In the 1st experiment, we use these two metrics to evaluate the ‘convolution’ operation of our default net, comparing OpenVINO GNA inference with PyTorch inference.

>> (POT net) In the 3rd experiment, we use these two metrics to evaluate the ‘convolution’ operation of our POT net, comparing OpenVINO GNA inference with PyTorch inference.

The cosine similarity level (see Fig.4) suggests that OpenVINO GNA inference is similar to PyTorch inference.

The similarity can also be seen in Fig.2.

#{Experiment 1 & 3}

<< (Default net with scale factor 1) The L1 loss is on the order of 10^(-2) (n × 10^(-2)).

<< (POT net with scale factor 1) The L1 loss is on the order of 10^(-4) (n × 10^(-4)).

<< (Default net with scale factor 2048) The L1 loss is on the order of 10^(-2) (n × 10^(-2)).

<< (POT net with scale factor 2048) The L1 loss is on the order of 10^(-4) (n × 10^(-4)).

>> From the above results, I think POT helps us set the correct scale factor, so the L1 result is good.

<< (Default net with scale factor 1) The cosine similarity is about 0.75.

<< (POT net with scale factor 1) The cosine similarity is about 0.9999.

<< (Default net with scale factor 2048) The cosine similarity is about 0.9999.

<< (POT net with scale factor 2048) The cosine similarity is about 0.9999.

>> From the above results, I think POT helps us set the correct scale factor, so the similarity result is good.

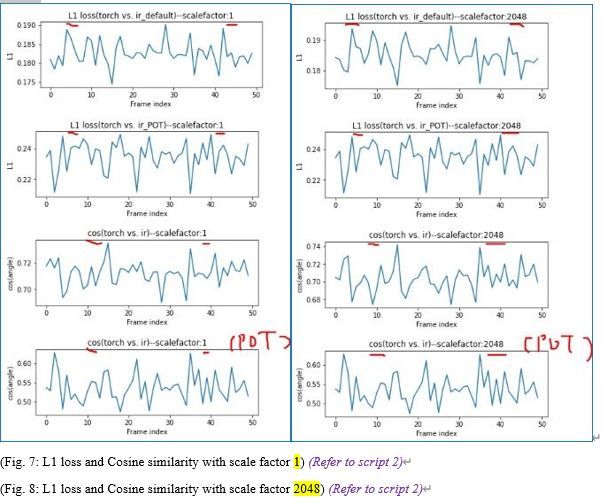

#{Experiment 2 & 4}

>> (Default net) In the 2nd experiment, we use these two metrics to evaluate the ‘concatenation & convolution’ operation of our default net, comparing OpenVINO GNA inference with PyTorch inference.

>> (POT net) In the 4th experiment, we use these two metrics to evaluate the ‘concatenation & convolution’ operation of our POT net, comparing OpenVINO GNA inference with PyTorch inference.

The cosine similarity level (see Fig.8) suggests that OpenVINO GNA inference is dissimilar to PyTorch inference.

The dissimilarity can also be seen in Fig.6.

#{Experiment 2 & 4}

<< (Default net with scale factor 1) The L1 loss is on the order of 10^(-1) (n × 10^(-1)).

<< (POT net with scale factor 1) The L1 loss is on the order of 10^(-1) (n × 10^(-1)).

<< (Default net with scale factor 2048) The L1 loss is on the order of 10^(-1) (n × 10^(-1)).

<< (POT net with scale factor 2048) The L1 loss is on the order of 10^(-1) (n × 10^(-1)).

>> From the above results, I think the poor L1 does not come from quantization, so POT cannot help.

<< (Default net with scale factor 1) The cosine similarity is about 0.75.

<< (POT net with scale factor 1) The cosine similarity is about 0.60.

<< (Default net with scale factor 2048) The cosine similarity is about 0.74.

<< (POT net with scale factor 2048) The cosine similarity is about 0.60.

>> From the above results, I think the poor similarity does not come from quantization, so POT cannot help.

- Thoughts & Questions:

- From experiments 1 and 3, we find that ‘net1’ (convolution with 6 input channels) has good cosine similarity.

- From experiment 1, we find that different scale factors (1 and 2048) affect L1 and cosine similarity. (Quantization issue)

- From experiment 3, we find that POT can learn and set a proper scale factor for the user, so the net performs well even when we set different scale factors.

- From experiments 2 and 4, we find that ‘net2’ (concatenation & convolution) has poor cosine similarity.

- From experiment 2, we find that different scale factors (1 and 2048) do not affect L1 and cosine similarity. (Quantization issue)

<< This does not seem reasonable, because different scale factors should give different results.

- From experiment 4, we find that POT cannot fix the drop in cosine similarity.
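To get a feeling for how strongly a scale factor alone can change both metrics, the observations above can be compared with a simple simulation. The sketch below uses a generic symmetric "fake quantization" to int16 (multiply by the scale factor, round, clip, divide back). This is an assumed toy model, not the GNA plugin's actual quantization scheme, and the input is random data in [-1, 1] rather than the data from the experiments.

```python
import numpy as np

def fake_quantize_int16(x, scale_factor):
    """Round x * scale_factor into the int16 range, then map back to float."""
    q = np.clip(np.round(x * scale_factor), -32768, 32767)
    return q / scale_factor

def l1_loss(a, b):
    """Mean absolute error."""
    return float(np.mean(np.abs(a - b)))

def cosine_similarity(a, b):
    """Cosine of the angle between two 1-D vectors."""
    return float(np.dot(a, b) / (np.linalg.norm(a) * np.linalg.norm(b)))

rng = np.random.default_rng(0)
x = rng.uniform(-1.0, 1.0, size=1530)  # hypothetical input, 6 * 255 values

results = {}
for sf in (1, 2048):
    xq = fake_quantize_int16(x, sf)
    results[sf] = (l1_loss(x, xq), cosine_similarity(x, xq))
    print(f"scale factor {sf:>4}: L1={results[sf][0]:.6f}  cos={results[sf][1]:.6f}")
```

In this toy model, a scale factor of 1 collapses every value to -1, 0 or 1, so both metrics degrade clearly compared with 2048. If a real net shows no change between the two settings, it may be worth checking whether the scale factor setting is actually being applied.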

Our 1st question: we are not sure whether our POT usage is correct.

>> If our POT usage is correct, we suspect that GNA inference of ‘net2’ is incorrect (is there a bug when concatenation is followed by convolution?).

You can find our experiment data at the links below:

script 1A: "Experiment #1 with similar 1x6x255 inputs and 1x4x127 output"

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST1B_NNExport_IR_Conv_CIn6_Cout4_ScaleFactor2048_GNA_L1_eN4.html

script 1B: "Experiment #1 with similar 1x6x255 inputs and 1x4x127 output" with POT

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST1B_NNExport_IR_Conv_CIn6_Cout4_ScaleFactor2048_GNA_L1_eN4.html

script 2A: "Concatenation & convolution"

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST5_NNExport_IR_SelfCatConv_ScaleFactor2048_CPU_L1_eN1.html

script 2B: "Concatenation & convolution" with POT

link /20220922_GNA_issue_vector_smiliarity_drops_after_concat_and_convolution/ TEST5B_POT_IR_SelfCatConv_ScaleFactor2048_GNA_L1_eN1.html

Hi tea6329714,

Thanks for sharing your validation results and thoughts.

We will check on this and get back to you at the earliest.

Sincerely,

Peh

Hi tea6329714,

Thank you for your patience.

As Peh mentioned, GNA supports a limited set of operations, kinds and combinations. GNA also has several intrinsic sources of inaccuracy, such as PWL approximation of activation functions and integer-only math.
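To illustrate the kind of error a PWL (piecewise-linear) approximation introduces, here is a minimal sketch that approximates `tanh` with evenly spaced linear segments and measures the worst-case error. The even spacing, the range [-4, 4] and the segment counts are assumptions made for illustration; the thread does not describe how GNA actually chooses its segments.

```python
import numpy as np

def pwl_approx(fn, lo, hi, segments):
    """Return a function that linearly interpolates fn between evenly spaced knots."""
    knots = np.linspace(lo, hi, segments + 1)
    values = fn(knots)
    return lambda x: np.interp(x, knots, values)

def max_pwl_error(segments, lo=-4.0, hi=4.0):
    """Worst-case absolute error of the PWL approximation of tanh on a dense grid."""
    x = np.linspace(lo, hi, 10001)
    approx = pwl_approx(np.tanh, lo, hi, segments)
    return float(np.max(np.abs(np.tanh(x) - approx(x))))

for segments in (8, 32, 128):
    print(f"{segments:>3} segments: max abs error = {max_pwl_error(segments):.6f}")
```

The error shrinks as the number of segments grows, but it never reaches zero, so some mismatch against a float reference is expected even before integer rounding is taken into account.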

This case is considered new model enablement, and we have submitted this feature request to engineering for further assessment. Please refer to the release notes for future implementation.

Regards,

Ken

Hi tea6329714,

Thank you for your question. If you need any additional information from Intel, please submit a new question as this thread is no longer being monitored.

Regards,

Peh
