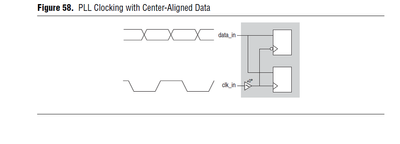

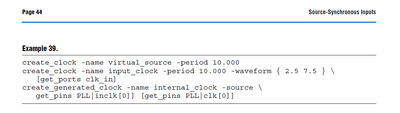

Based on AN 433, pages 43-44.

Question 1:

We define both a virtual clock and an input clock here.

I think the input clock alone is enough for the timing constraint.

Why do we need both?

Question 2:

We define both the input clock and a generated clock after the PLL.

Which one should be used to define set_input_delay for data_in?

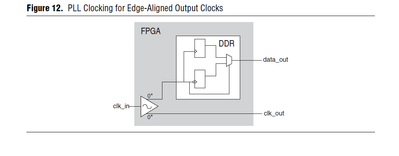

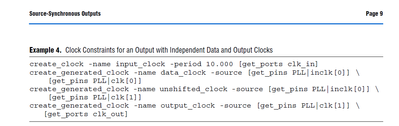

Based on AN 433, pages 8-9.

Question 3:

Why do we need to create unshifted_clock and output_clock in two steps?

I am not sure what the purpose of the unshifted_clock definition is here.

Can we do it in one step, as below?

create_generated_clock -name out_clock -source [get_pins PLL|inclk[0]] [get_ports clk_out]

Question 4:

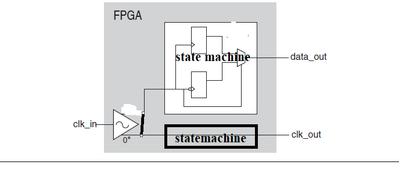

I use a state machine to send output data to an external SPI device, as shown below.

clk_in = 25 MHz -> pll_out = 50 MHz -> clk_out = 6.25 MHz.

I use a counter to divide the frequency down to 6.25 MHz and output it to the external device as the SPI clock.

How do I write the SDC constraints for this?

(1) Both the virtual and input clock constraints are needed to define the launch and latch edges for the analysis.

(2) set_input_delay always references the virtual clock, not any of the input or internal clocks.

(3) Just use derive_pll_clocks. The example is just showing that the output clock constraint needs to source the correct PLL output clock pin.

(4) So you're using the 50 MHz clock from the PLL as the source for the 6.25 MHz output clock? Just use the last constraint you referenced in (3) but include a frequency relationship (-divide_by 8).

Questions 1) and 2):

Please refer to AN 433: Constraining and Analyzing Source-Synchronous Interfaces (intel.cn)

Page 41: "It is not necessary to use a virtual clock to constrain the input delays. You can create input delay constraints relative to the input clock instead of the virtual clock, but using a virtual clock makes the constraining of the interface easier and more accurate."

So the document says a virtual clock is not mandatory, but you said set_input_delay must use the virtual clock.

I am not sure when I need to create a virtual clock and when I don't.

What is the relationship between the virtual clock and the data input clock?

I also don't see a virtual clock used for any set_output_delay.

Question 3):

Yes, derive_pll_clocks is another method that should work.

But my question is: if I use the method from the example, is creating unshifted_clock a must? I don't see it used anywhere later.

Can I remove the create_generated_clock for unshifted_clock? We only care about clk_out for set_output_delay.

I think we only need to tell the FPGA the external constraints.

Question 4):

You mean like this:

create_generated_clock -name out_clock -source [get_pins PLL|inclk[1]] -divide_by 8 [get_ports clk_out]

set_output_delay -add_delay -clock [get_clocks {clk_out}] -max 10.000 [get_ports {data_out}]

But this SDC produces the following messages:

Node: nios:nios_0|mcu_uadc:mcu_uadc_0|read_cnt[2] was determined to be a clock but was found without an associated clock assignment.

No paths exist between clock target "uadc_sclk" of clock "uadc_sclk" and its clock source. Assuming zero source clock latency.

Here is the exact code in my project. uadc_sclk is the clk_out.

    // Counts clk cycles while in the WRITE state; cleared otherwise.
    always @(negedge clk or negedge reset_n) begin
        if (reset_n == 1'b0)
            write_cnt <= 8'd0;
        else if (spi_current_state == SPI_STATE_WRITE)
            write_cnt <= write_cnt + 8'd1;
        else
            write_cnt <= 8'd0;
    end

    // Counts clk cycles while in the READ state; cleared otherwise.
    always @(negedge clk or negedge reset_n) begin
        if (reset_n == 1'b0)
            read_cnt <= 8'd0;
        else if (spi_current_state == SPI_STATE_READ)
            read_cnt <= read_cnt + 8'd1;
        else
            read_cnt <= 8'd0;
    end

    // uadc_sclk is high for 4 of every 8 clk cycles (low bits 0..3)
    // while the upper five counter bits are in the range 4..27.
    assign uadc_sclk =
        (write_cnt[7:3] >= 5'd4 && write_cnt[7:3] <= 5'd27 && write_cnt[2:0] <= 3'd3) ? 1'b1 :
        (read_cnt[7:3]  >= 5'd4 && read_cnt[7:3]  <= 5'd27 && read_cnt[2:0]  <= 3'd3) ? 1'b1 : 1'b0;

(1) For synchronous I/O, there's always a virtual clock and it should always be referenced for set_input_delay and set_output_delay. For source synchronous I/O, there's only a virtual clock for inputs since the FPGA generates the output clock that will latch the data. So set_input_delay references the virtual clock that drives the "upstream" device driving into the FPGA while set_output_delay for source synch always references the output generated clock coming from the FPGA itself.

(3) Again, it's just an example. You use the clocks you need. The easiest thing to do is what I said: use derive_pll_clocks and then create_generated_clock sourced from the appropriate output pin of the PLL.

(4) No. The -source for create_generated_clock should be the output pin of the PLL, not the input pin. And your set_output_delay is wrong because the -clock is referencing "clk_out" when you named the output clock "out_clock". So this should be:

create_generated_clock -name out_clock -source [get_pins PLL|clk[<output clock pin>]] -divide_by 8 [get_ports clk_out]

set_output_delay -add_delay -clock [get_clocks {out_clock}] -max 10.000 [get_ports {data_out}]

As for your error, I've never seen double "?" checks for a single assignment. I'm not sure what you're trying to do with this so it's not clear why read_cnt[2] is being seen as a clock. But that's not related to the constraints you've shown.
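
To put the corrected constraints for (4) in context, here is a minimal SDC sketch for the 25 MHz -> 50 MHz -> 6.25 MHz chain described earlier in the thread. It is only an illustration of the shape of the constraints: the PLL output pin index `clk[0]` and the `-min` delay value are assumptions, not values from the design, and the pin name is wrapped in braces so that Tcl does not treat `[0]` as a command substitution.

```tcl
# Base clock on the FPGA input pin: 25 MHz -> 40 ns period.
create_clock -name clk_in -period 40.000 [get_ports clk_in]

# Let the tool create generated clocks on the PLL outputs (50 MHz here).
derive_pll_clocks

# Forwarded 6.25 MHz clock on the output port: 50 MHz divided by 8.
# ASSUMPTION: PLL|clk[0] stands in for whichever PLL output pin
# actually carries the 50 MHz clock in the design.
create_generated_clock -name out_clock \
    -source [get_pins {PLL|clk[0]}] \
    -divide_by 8 \
    [get_ports clk_out]

# Output data is constrained against the generated output clock.
# The -max value is the one used in the thread; the -min value is a
# placeholder to be replaced with the external device's hold figure.
set_output_delay -clock [get_clocks out_clock] -max 10.000 [get_ports data_out]
set_output_delay -clock [get_clocks out_clock] -min 0.000  [get_ports data_out]
```

Note that the name passed to `-clock` is the clock name given by `-name` (out_clock), not the port name (clk_out).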

1) So you mean the virtual clock is a must for set_input_delay and set_output_delay, and the Intel document AN 433 is wrong?

In AN 433 above, you can also see that it doesn't use any virtual clock for set_output_delay.

But how does fpga know the relationship between virtual clock and actual input clock & output clock?

4) You can see my Verilog code for uadc_sclk above. It is generated from the read_cnt and write_cnt counters, which divide the 50 MHz PLL clock down to 6.25 MHz. But I don't know why the Timing Analyzer reports this error related to read_cnt.

(1) Again, as I said, for source synchronous outputs, there is no virtual clock. For regular synchronous outputs, yes there is a virtual clock, the clock that drives the "downstream" device. But since you're talking about source synchronous outputs, there is no virtual clock.

"But how does fpga know the relationship between virtual clock and actual input clock & output clock?"

I don't understand this question unless you separate into inputs and outputs. For source synchronous inputs, the virtual clock is the launch clock and the clock that drives the input registers (either direct clocking from upstream device or clock processed through a PLL) is the latch clock. You define this clock relative to the virtual clock (same edge or opposite edge requiring a phase shift). For source synchronous outputs, the launch clock is the clock that drives the output registers and the latch clock is the output generated clock.

(4) No idea what's happening here. All I can say is that from the error message, something is happening with read_cnt[2] somewhere where the tool is seeing it as a clock. Make sure you're not accidentally using this signal elsewhere in your design. You can also search the RTL Viewer to see if you can see where read_cnt[2] is going into the clock input of some logic somewhere.

(1) What's the difference between source-synchronous outputs and regular synchronous outputs?

For example, an SPI bus has source-synchronous outputs, so no virtual clock is needed.

For a UART, there is no clock output, so a virtual clock is needed.

Is my understanding right?

"But how does fpga know the relationship between virtual clock and actual input clock & output clock?"

I mean that, physically, all timing constraints should refer to a real clock. So if we define the input timing constraint relative to a virtual clock, we need to define the relationship between the virtual clock and the real input clock. If it is not defined, how can the FPGA know which input pins and registers must meet these timing constraints? That is why I am confused about set_input_delay referencing a virtual clock instead of the real input clock.

Additionally, an I2C bus can be both input and output. How do I define timing constraints for it?

Synchronous I/O means that an external clock source (or sometimes 2 separate sources) is used to drive both the source and destination device. Source synchronous means there is a clock that drives the source device and then the source device provides the clock to the destination device to latch the data.

I think you're also misunderstanding the meaning of a virtual clock. A virtual clock is still a real clock. It's just that it never actually enters the FPGA. Even though it never enters the FPGA, timing constraints (create_clock with no target specified) still need to describe its properties. Then, a create_clock command (with a target specified, usually the clock input port of the device) defines the relationship between the clock that actually enters the FPGA and the virtual clock. Having set_input_delay reference the virtual clock establishes the launch edge for an input analysis. The tool knows what clock domain drives the input latching registers of the FPGA so the latch edge is determined automatically simply by having set_input_delay target particular inputs.
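
As a rough illustration of the paragraph above, the two create_clock commands and the input delay could look like the following sketch. The clock name `virt_clk` and the delay values are assumptions chosen for the example; the 40 ns period corresponds to the 25 MHz clk_in mentioned earlier in the thread.

```tcl
# Virtual clock: no target, so it exists only as a timing reference
# for the external (upstream) device. ASSUMPTION: name and period.
create_clock -name virt_clk -period 40.000

# Real clock entering the FPGA on the clock input port. Same period
# and default waveform, so it is edge-aligned with virt_clk.
create_clock -name clk_in -period 40.000 [get_ports clk_in]

# Input delay references the virtual clock (launch edge). The latch
# clock is found by the tool from the registers that data_in feeds.
# ASSUMPTION: placeholder delay values, to be taken from the
# upstream device's datasheet and board delays.
set_input_delay -clock virt_clk -max 3.000 [get_ports data_in]
set_input_delay -clock virt_clk -min 1.000 [get_ports data_in]
```

If the clock arriving at the FPGA is shifted relative to the data launch clock, the `-waveform` option on the second create_clock is one way to describe that offset.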

As for bidirectional interfaces, you would have set_input_delay and set_output_delay constraints applied to the same I/O.
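
A minimal sketch of what that could look like, assuming a hypothetical bidirectional port `data_io`, a hypothetical virtual clock `ext_clk` for the external device, and placeholder delay values:

```tcl
# ASSUMPTION: ext_clk, data_io and every numeric value below are
# illustrative placeholders, not taken from any real design.
create_clock -name ext_clk -period 40.000

# Input side: data arriving at the pin from the external device.
set_input_delay -clock ext_clk -max 5.000 [get_ports data_io]
set_input_delay -clock ext_clk -min 1.000 [get_ports data_io]

# Output side: data the FPGA drives onto the same pin.
set_output_delay -clock ext_clk -max 4.000 [get_ports data_io]
set_output_delay -clock ext_clk -min 0.000 [get_ports data_io]
```

Which clock each constraint references still follows the rules discussed above: it depends on which device supplies the clock for that direction of the interface.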

Thanks for your patience! I think I am close to the answer now.

> For input, the external device has its own clock, so we need to use a virtual clock for the timing constraint.

> For output with the FPGA as SPI master to an external device, the clock is provided by the FPGA, so there is no need for a virtual clock.

> For output with the FPGA as SPI slave to an external device, the clock is provided by the external device, so we need to use a virtual clock.

> For UART communication, which is asynchronous, there is no clock, so we don't need to set any timing constraints.

Please check whether my understanding above is right. Thanks!

There is never a case where you should leave out timing constraints. For asynchronous interfaces, you'd probably be using false path exceptions.
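
For example, a false path exception for a UART might be sketched like this. The port names `uart_rx` and `uart_tx` are hypothetical, and this assumes the receive input is synchronized inside the FPGA before it is used:

```tcl
# ASSUMPTION: uart_rx and uart_tx are hypothetical port names.
# Asynchronous input: exclude paths starting at the pin from analysis.
set_false_path -from [get_ports {uart_rx}]

# Asynchronous output: exclude paths ending at the pin from analysis.
set_false_path -to [get_ports {uart_tx}]
```

This keeps the ports from being reported as unconstrained while telling the tool there is no clock relationship to check on them.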

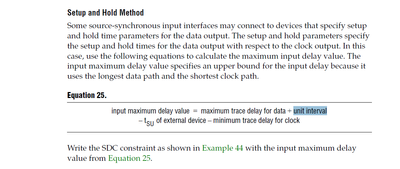

One more question, about AN 433 page 46.

What is the unit interval here?

Thanks, Strell, for sharing your knowledge. The Intel support community appreciates your help.

I'm glad that your question has been addressed. I will now transition this thread to community support. If you have a new question, feel free to open a new thread to get support from Intel experts. Otherwise, the community users will continue to help you on this thread. Thank you.

regards,

Farabi
