I'm trying to match each CBOX of a Skylake-SP Xeon Platinum 8175M CPU to its logical core.

So I started a simple standard memcpy benchmark (A[I] = B[I]) with 24 threads. I configured all 24 CBOXes of the CPU with the LLC_LOOKUP_WRITE event and set the State field to "0x1F". Then I set the TID filter (for example to "0xB0") to count only the traffic from that specific core.

I made this measurement 24 times with 24 different TIDs, to match the traffic from every core to a CBOX.

I measured some traffic on every CBOX, but one CBOX always showed much higher traffic than the others. My result is that CBOX0 corresponds to core 0, CBOX1 to core 1, and so on.
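The matching step can be sketched roughly as follows. This is only a minimal Python illustration, assuming the 24 runs have been collected into a table with one row per TID filter (one core) and one column per CBOX. The function name `match_cores_to_cbox` and the sample counts are hypothetical, not measured values.

```python
def match_cores_to_cbox(counts):
    """Map each core (row index) to the CBOX (column index) with the most traffic.

    counts[i][j] is the count reported by CBOX j during the run whose
    TID filter selected core i. The layout is an assumption for this sketch.
    """
    mapping = {}
    for core, row in enumerate(counts):
        # Pick the column holding the largest count in this row.
        mapping[core] = max(range(len(row)), key=row.__getitem__)
    return mapping


# Invented counts for 3 cores x 3 CBOXes, for illustration only.
sample = [
    [9000, 120, 80],
    [100, 8700, 90],
    [110, 95, 9100],
]
print(match_cores_to_cbox(sample))
```

With real data it would also be worth checking how far the largest count stands above the second largest before trusting a match.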

Does this result make sense?

Thanks

Ferdi

Intel has not published any descriptions of how user-visible Core and Uncore unit numbers are related to positions on the chip.

The best information I am aware of is some preliminary research I presented in 2018:

The specifics for the 24-slice (first-generation) Xeon Platinum 8160 are presented in slides 12-17, with some extra slides in the backup (slides 38-42).

Across our 3472 Xeon Platinum 8160 processors, we have just over 100 unique topologies -- each associated with a different pattern of disabled tiles. (In these processors, there are exactly 4 disabled cores and exactly 4 disabled L3 slices, and they are always co-located. In other processor models there can be more disabled cores than disabled L3 slices. I assume that all enabled cores are co-located with an enabled L3 slice, but I have not tested all possible configurations.)

One symmetry that is observed is that an equal number of "tiles" (core + co-located L3 slice) are disabled on the "left" and "right" halves of the chip. This makes supporting "sub-NUMA cluster" (SNC) mode much easier -- you can always split the chip down the middle and know that there will be the same numbers of cores on each side.

The procedure I used in the presentation above is not easy to automate, but there are some pieces that should be straightforward. Slide 13 describes a methodology using the uncore mesh traffic counters to determine which CHA number is co-located with a particular CPU number. Those results have been generally unambiguous in my testing. It would probably be relatively easy to automate testing the presentation's hypothesis on how the bits of CAPID6 are mapped to locations on the die (slide 16).

It is not clear to me whether additional layers of indirection between the locations on the die and the user-visible numbers are available for the BIOS to play with. I am confident that the results I obtained on my nodes are persistent on my nodes, but I have not tested whether activating sub-NUMA clustering may change the results, or whether different BIOS versions (e.g., from different vendors) might present different mappings.

Dear Dr. Bandwidth,

Thanks for your answer. I've already seen your presentation.

Can you please tell me which uncore mesh traffic counter you used? That would be really helpful for me.

Best regards

Ferdinand

I used four events for most of this testing:

- HORZ_RING_BL_IN_USE.LEFT_ALL (Event 0xab, Umask 0x03)

- HORZ_RING_BL_IN_USE.RIGHT_ALL (Event 0xab, Umask 0x0c)

- VERT_RING_BL_IN_USE.UP_ALL (Event 0xaa, Umask 0x03)

- VERT_RING_BL_IN_USE.DOWN_ALL (Event 0xaa, Umask 0x0c)
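As a rough illustration of how such an event/umask pair is usually combined into a single value, here is a minimal Python sketch. It assumes the common layout with the event code in bits 7:0 and the umask in bits 15:8. The helper name `encode_event` is hypothetical, and a real counter control register carries further fields (enable bits and so on) that are not shown here.

```python
def encode_event(event, umask):
    """Pack an 8-bit event code (bits 7:0) and an 8-bit umask (bits 15:8).

    Assumed layout for this sketch; other control bits are left at zero.
    """
    if not (0 <= event <= 0xFF and 0 <= umask <= 0xFF):
        raise ValueError("event and umask must each fit in 8 bits")
    return (umask << 8) | event


# Event/umask pairs taken from the list above.
examples = {
    "HORZ_RING_BL_IN_USE.LEFT_ALL": (0xAB, 0x03),
    "VERT_RING_BL_IN_USE.UP_ALL": (0xAA, 0x03),
    "VERT_RING_BL_IN_USE.DOWN_ALL": (0xAA, 0x0C),
}
for name, (event, umask) in examples.items():
    print(f"{name}: 0x{encode_event(event, umask):04x}")
```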

Important things to remember:

- Routing is Y first, then X

- Traffic goes through disabled tiles -- not around them

- The meanings of "left" and "right" are reversed in every other column.

- Two increments per cache line

- The counters increment for data arriving at the tile (whether stopping at the tile or passing through), and not for data sourced from the tile. This also includes data that is changing directions in a tile -- it is counted at the input side (up or down), but not counted at the output (left or right).
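The rules above can be turned into a small model of which tiles should see increments for a given transfer. The following Python sketch is only an illustration under stated assumptions: tiles are addressed by hypothetical `(column, row)` coordinates, the function name `mesh_arrivals` is invented, and the result reports just "VERT" or "HORZ" because the left/right and up/down labels depend on the column and on the orientation of the die.

```python
def mesh_arrivals(src, dst, lines=1):
    """List (tile, ring, increments) for every tile the data arrives at.

    Routing is Y first, then X. Counts are taken on the input side only,
    so the source tile never appears, and the tile where the data turns
    is counted once, as a vertical arrival. Disabled tiles are not
    special-cased because traffic passes through them.
    """
    (x, y), (dst_x, dst_y) = src, dst
    arrivals = []
    step = 1 if dst_y > y else -1
    while y != dst_y:  # vertical leg first
        y += step
        arrivals.append(((x, y), "VERT", 2 * lines))  # two increments per cache line
    step = 1 if dst_x > x else -1
    while x != dst_x:  # then the horizontal leg
        x += step
        arrivals.append(((x, y), "HORZ", 2 * lines))
    return arrivals


for hit in mesh_arrivals((0, 0), (2, 1)):
    print(hit)
```

Comparing such a prediction against the measured counters for a known source and destination is one way to test a guessed tile layout.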

Hi,

I have to apologize for my late answer; unfortunately I was very busy.

Your answer was very helpful for me.

Is it possible that there is an error in the definition of VERT_RING_BL_IN_USE in the Intel® Xeon® Processor Scalable Memory Family Uncore Performance Monitoring documentation?

It says there:

We really have two rings -- a clockwise ring and a counter-clockwise ring. On the left side of the ring, the "UP" direction is on the clockwise ring and "DN" is on the counter-clockwise ring. On the right side of the ring, this is reversed. The first half of the CBos are on the left side of the ring, and the 2nd half are on the right side of the ring. In other words (for example), in a 4c part, Cbo 0 UP AD is NOT the same ring as CBo 2 UP AD because they are on opposite sides of the ring.

Is it possible that the ring definition is a leftover from the predecessors of the Skylake series?

Given my results, it makes no sense that the counters on the right side would point in the opposite direction from the counters on the left side.

From my results, it would be logical that the UP event really measures traffic in the up direction on both sides of the ring.

Have a good day

Ferdi

The wording about ring directions in the SKX uncore performance monitoring guide is clearly left over from earlier generations -- I only see one word that has changed since the Xeon E5 (v1) Sandy Bridge EP generation (and the description might not have been correct then either!)

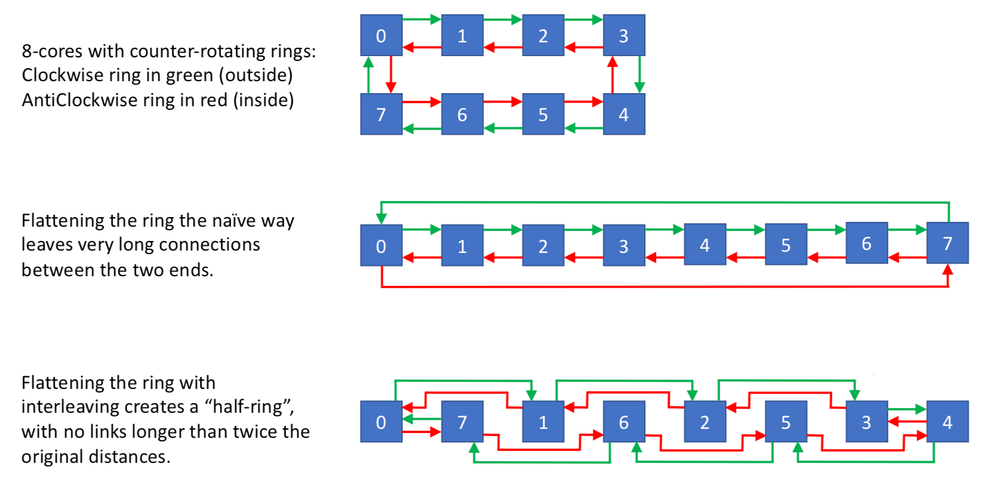

One place that rings get fairly tricky is when "squashing" a ring into a "half-ring", as shown in the attached figure...

Thanks for your answer.

Did I understand correctly that it is possible to measure the traffic on one ring in both directions?

For example, on the green ring from tile 0 to tile 1 and also on the green ring from tile 1 to tile 0?

May I ask where you got the graphics from?

Hopefully Intel will update the documentation soon; it would be really helpful for beginners.

Ferdi

The graphics came from my mad powerpoint skillz..... I was writing up some of this stuff for a technical report anyway.... (I should not have called the interleaved option a "half-ring", since the three versions are topologically identical -- they only differ in the length of the wires.)

The ring busy counters are on the *input* side of each mesh (or ring) stop, so measuring on the clockwise (green) ring at box 1 will measure the traffic from box 0 to box 1. This includes both the traffic continuing through box 1 and the traffic stopping in box 1. Conversely, measuring on the counterclockwise (red) ring at box 0 will tell you the traffic from box 1 to box 0. This includes both the traffic continuing through box 0 and the traffic stopping in box 0.

There is no traffic on the green ring from box 1 to box 0. Most routing algorithms will always take the shorter route: 1 hop on red is much shorter than 7 hops on green. (There are a stupid number of special cases in specific implementations, but at least on the Intel BL ring/bus the traffic always goes by the shortest route in Y, then in X.)
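The hop counts in that example can be reproduced with a few lines of Python. This is just a sketch: it assumes 8 ring stops (which is what "1 hop versus 7 hops" implies), takes "clockwise" to mean increasing box number, and breaks ties in favor of the clockwise ring, which is an arbitrary choice for the illustration. The function name `ring_route` is hypothetical.

```python
def ring_route(src, dst, stops=8):
    """Return (direction, hops) for the shorter way around a ring.

    Assumes "clockwise" means increasing box number and that ties go
    clockwise; both are assumptions made for this sketch.
    """
    clockwise = (dst - src) % stops
    counterclockwise = (src - dst) % stops
    if clockwise <= counterclockwise:
        return ("clockwise", clockwise)
    return ("counterclockwise", counterclockwise)


print(ring_route(0, 1))  # box 0 to box 1
print(ring_route(1, 0))  # box 1 to box 0
```

Under these assumptions, box 0 to box 1 takes 1 hop clockwise and box 1 to box 0 takes 1 hop counterclockwise, so the 7-hop clockwise path from box 1 to box 0 is never chosen.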

Thanks again for your answer.

Is it right that I can obtain UP_ALL by adding the results of the two UP events?

Result of UP_EVEN (bxxxxxxx1) + result of UP_ODD (bxxxxxx1x) = UP_ALL (bxxxxxx11 = 0x03)

Or are the individual UP_EVEN and UP_ODD events also left over from the past?

VERT_RING_BL_IN_USE

| Extension | umask [15:8] | Description |
| --- | --- | --- |
| UP_EVEN | bxxxxxxx1 | Up and Even |
| UP_ODD | bxxxxxx1x | Up and Odd |
| DN_EVEN | bxxxxx1xx | Down and Even |
| DN_ODD | bxxxx1xxx | Down and Odd |

There is no real documentation on "EVEN" and "ODD". Based on Intel's patents, I am guessing that it refers to alternating time slots in the protocol, with different types of transactions having different restrictions on which time slots they can use (or start in).

I tried to find patterns in how the traffic was distributed across EVEN and ODD, but did not gain any insights.

The good news is that you can set both "EVEN" and "ODD" bits in the Umask and get a total count that matches the expected traffic with a single measurement. (This is the same as most Intel performance counter events -- the Umask bits are combined with a logical OR -- increment for UP or DOWN when both are set.)
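A toy model of the OR behavior might look like the following Python sketch. The bit values follow the VERT_RING_BL_IN_USE umask table quoted earlier in the thread. The helper `count_matching` and the list of occurrences are invented for illustration, and the sketch assumes each occurrence carries exactly one sub-event bit.

```python
UP_EVEN, UP_ODD, DN_EVEN, DN_ODD = 0x01, 0x02, 0x04, 0x08


def count_matching(occurrences, umask):
    """Count occurrences whose sub-event bit is selected by the umask."""
    return sum(1 for bits in occurrences if bits & umask)


# Invented sequence of ring occurrences, one sub-event bit each.
occurrences = [UP_EVEN, UP_ODD, UP_EVEN, DN_ODD, DN_EVEN]

up_all = UP_EVEN | UP_ODD  # 0x03
print(hex(up_all), count_matching(occurrences, up_all))
print(count_matching(occurrences, UP_EVEN) + count_matching(occurrences, UP_ODD))
```

In this model the single UP_ALL measurement equals the sum of the separate UP_EVEN and UP_ODD measurements. On real hardware that only holds if the two runs see the same traffic.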
