
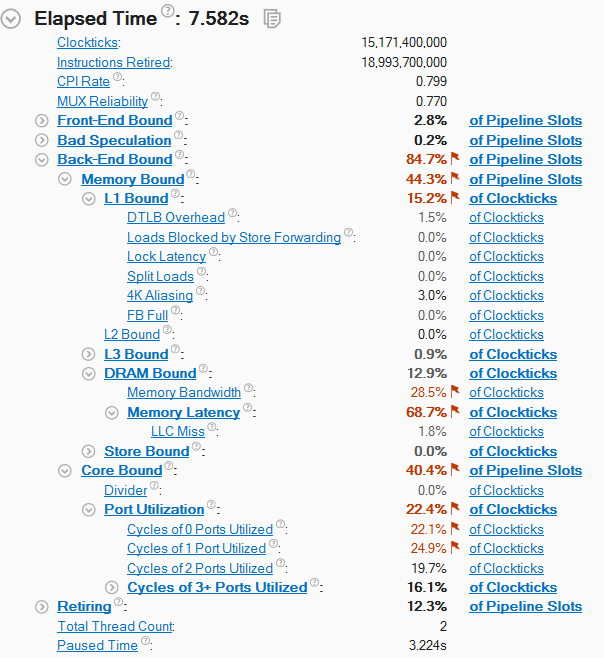

My application processes a huge amount of data in a short loop. At the moment the application is single-threaded, and I am trying to speed up the single-threaded version as far as possible before moving to multiple threads.

The General Exploration Summary page says:

- Elapsed Time: 10.346s
- Clockticks: 25,370,400,000
- Instructions Retired: 21,055,200,000
- CPI Rate: 1.205
- MUX Reliability: 0.917
- Front-End Bound: 3.6%
- Bad Speculation: 0.2%
- Back-End Bound: 77.2%
  - Memory Bound: 46.8%
    - L1 Bound: 0.0%
    - L2 Bound: 4.3%
    - L3 Bound: 0.8%
      - Contested Accesses: 0.0%
      - Data Sharing: 0.0%
      - L3 Latency: 15.3%
      - SQ Full: 16.2%
    - DRAM Bound: 32.4%
      - Memory Bandwidth: 26.2%
      - Memory Latency: 73.8%
        - LLC Miss: 76.4%
    - Store Bound: 0.0%
      - Store Latency: 21.1%
      - False Sharing: 0.0%
      - Split Stores: 0.0%
      - DTLB Store Overhead: 0.2%
  - Core Bound: 30.3%
    - Divider: 0.0%
    - Port Utilization: 23.5%
      - Cycles of 0 Ports Utilized: 43.5%
      - Cycles of 1 Port Utilized: 16.2%
      - Cycles of 2 Ports Utilized: 12.6%
      - Cycles of 3+ Ports Utilized: 8.9%
      - Port 0: 18.4%
      - Port 1: 16.2%
      - Port 2: 21.5%
      - Port 3: 22.2%
      - Port 4: 3.3%
      - Port 5: 20.5%
- Retiring: 19.0%
  - General Retirement: 19.0%
    - FP Arithmetic: 30.6%
      - FP x87: 0.0%
      - FP Scalar: 0.0%
      - FP Vector: 30.6%
    - Other: 69.4%
  - Microcode Sequencer: 0.0%
    - Assists: 0.0%
- Total Thread Count: 1
- Paused Time: 3.071s

As far as I can see, the issue is in the Back-End, i.e. the Front-End fetches all instructions, but the CPU idles at the stage where these instructions are executed. The code is straight-line, without conditions. I see that the code is DRAM bound and, in particular, Memory Latency bound.

Does it mean that it is impossible to speed up the code because I am limited by DRAM parameters?


Does it mean that it is impossible to speed up the code because I am limited by DRAM parameters?

No, it doesn't. It means that if you make data access more sequential (helping the hardware prefetcher do its work), the effective DRAM latency can be reduced and the overall performance will improve. You can use Memory Access analysis to determine which data objects create most of the latency.
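As a minimal sketch of what "more sequential" can mean, suppose the loop gathers elements through an index array. The names `gather_sum`, `make_access_sequential`, `data`, and `idx` are hypothetical and not taken from the original code. Sorting the indices once up front makes the gather walk memory at ascending addresses, which hardware prefetchers generally handle better than a random walk. The result is unchanged as long as the per-element operation does not depend on order.

```c
#include <stddef.h>
#include <stdint.h>
#include <stdlib.h>

/* Sum data[idx[i]] for i in [0, n). The access pattern into `data`
   is whatever order `idx` dictates. */
static uint64_t gather_sum(const uint32_t *data, const size_t *idx, size_t n)
{
    uint64_t sum = 0;
    for (size_t i = 0; i < n; ++i)
        sum += data[idx[i]];
    return sum;
}

/* Comparator for qsort: ascending order of indices. */
static int cmp_index(const void *a, const void *b)
{
    size_t x = *(const size_t *)a;
    size_t y = *(const size_t *)b;
    return (x > y) - (x < y);
}

/* Sort the index array in place so that the subsequent gather
   touches `data` at monotonically increasing addresses. */
static void make_access_sequential(size_t *idx, size_t n)
{
    qsort(idx, n, sizeof idx[0], cmp_index);
}
```

Whether the one-time sort pays off depends on how often the same index set is reused; that has to be measured on the real workload.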


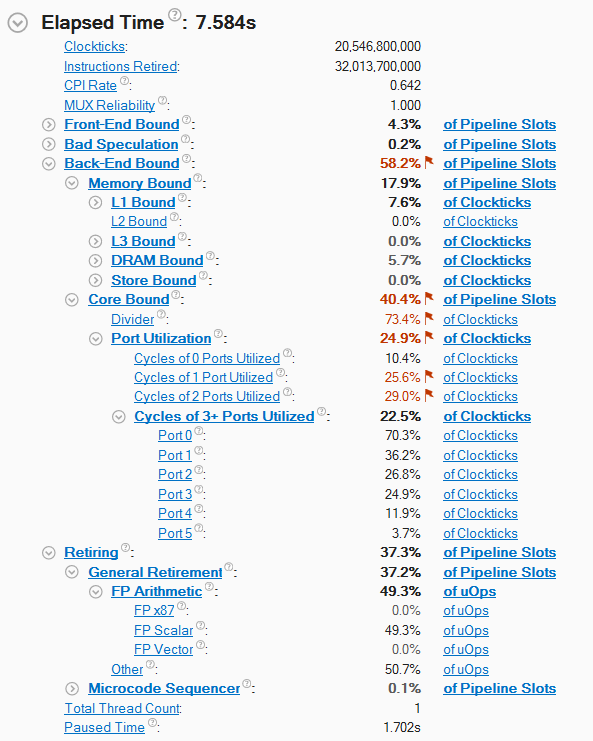

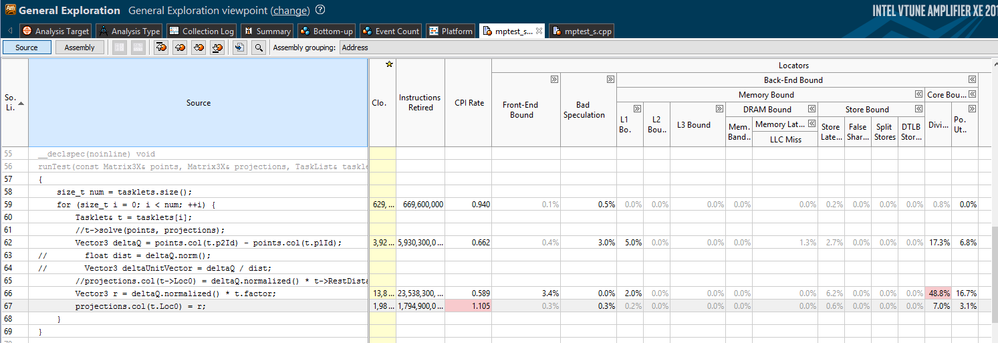

Thank you for the hint. I modified my code and the situation became much better.

It seems the issue now is the divider and sqrt operations (I do vector normalization). Is it possible to do anything about this?


From the profile summary I see that the FP ops are scalar. You can confirm this by looking at the Assembly view in the Source Viewer. If you manage to vectorize your division operations, you could see a significant reduction in the time spent in the core execution units.
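A minimal sketch of vectorized normalization with SSE intrinsics follows. The original loop is not shown as text in the thread, so the data layout here (2D vectors stored as separate `x[]` and `y[]` arrays) and the function name `normalize2d_sse` are assumptions made for illustration. The sketch replaces the division and square root with the approximate `_mm_rsqrt_ps` plus one Newton-Raphson refinement step. If full precision is required, `_mm_div_ps` with `_mm_sqrt_ps` can be used instead and is still four lanes wide.

```c
#include <stddef.h>
#include <xmmintrin.h>  /* SSE intrinsics */

/* Normalize n 2D vectors stored as separate x[] and y[] arrays.
   Assumes n is a multiple of 4 and that no vector has zero length. */
static void normalize2d_sse(float *x, float *y, size_t n)
{
    const __m128 half  = _mm_set1_ps(0.5f);
    const __m128 three = _mm_set1_ps(3.0f);

    for (size_t i = 0; i < n; i += 4) {
        __m128 vx = _mm_loadu_ps(x + i);
        __m128 vy = _mm_loadu_ps(y + i);

        /* Squared length of four vectors at once. */
        __m128 len2 = _mm_add_ps(_mm_mul_ps(vx, vx), _mm_mul_ps(vy, vy));

        /* Approximate 1/sqrt(len2), roughly 12 bits of precision. */
        __m128 r = _mm_rsqrt_ps(len2);

        /* One Newton-Raphson step: r = 0.5 * r * (3 - len2 * r * r). */
        __m128 t = _mm_mul_ps(_mm_mul_ps(len2, r), r);
        r = _mm_mul_ps(_mm_mul_ps(half, r), _mm_sub_ps(three, t));

        _mm_storeu_ps(x + i, _mm_mul_ps(vx, r));
        _mm_storeu_ps(y + i, _mm_mul_ps(vy, r));
    }
}
```

The refined result is close to, but not bit-identical with, a scalar `x / sqrtf(len2)`, so it is worth checking that the accuracy is acceptable for the application.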


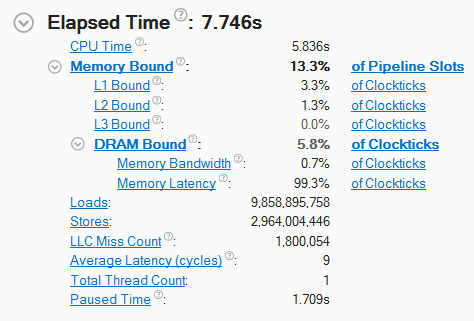

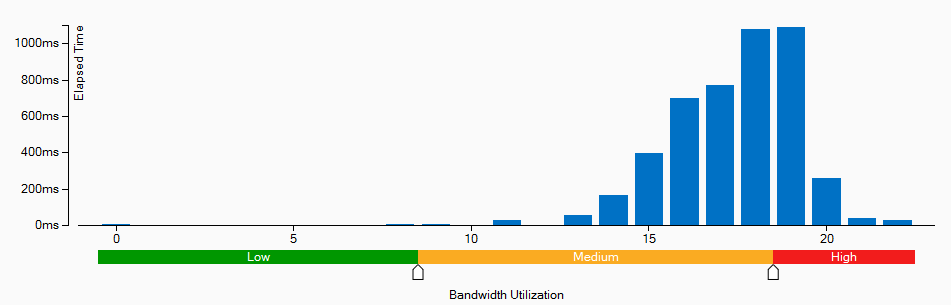

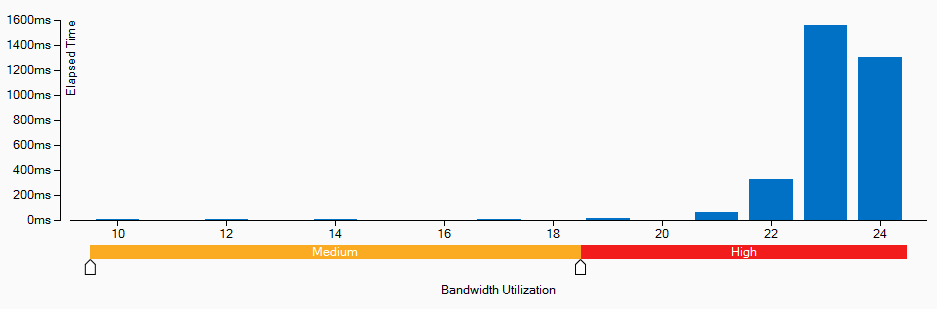

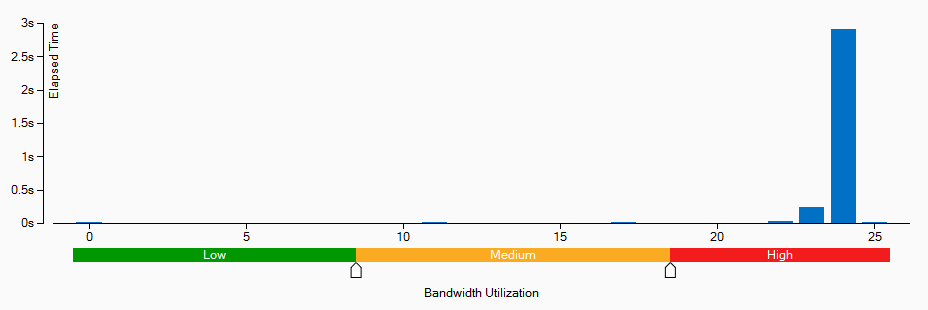

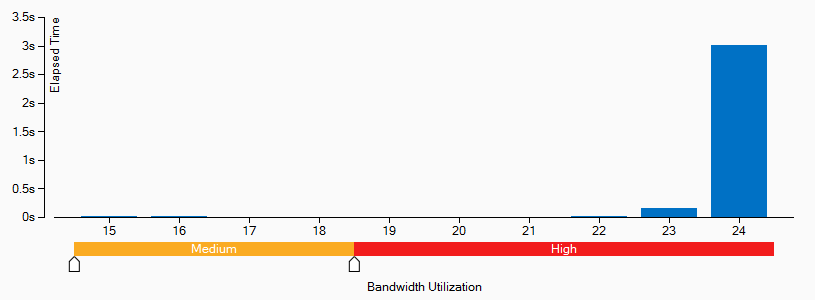

I managed to speed up my code by 20% by using SSE instructions.

DRAM bandwidth utilization has also increased.

The attached charts are for the 1-, 2-, 3-, and 4-threaded versions, respectively. For almost 100% of the time, DRAM bandwidth is about 25 GB/sec. The maximum DRAM system bandwidth is 27 GB/sec.

Can I conclude that it is impossible to speed up the code any further because of DRAM limitations?


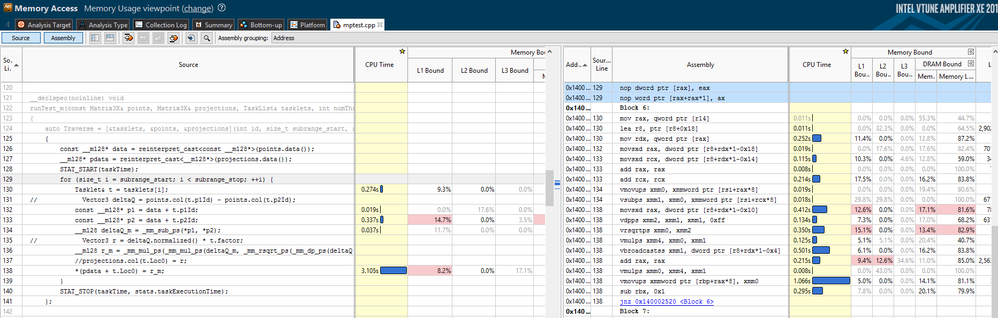

It would be nice if you could post a screenshot of the asm view for the loop.

With SSE instructions you increased the bandwidth requirements, so you suffer more from memory accesses that are not always contiguous. You might want to focus on that. But anyway, your performance profile already looks good.


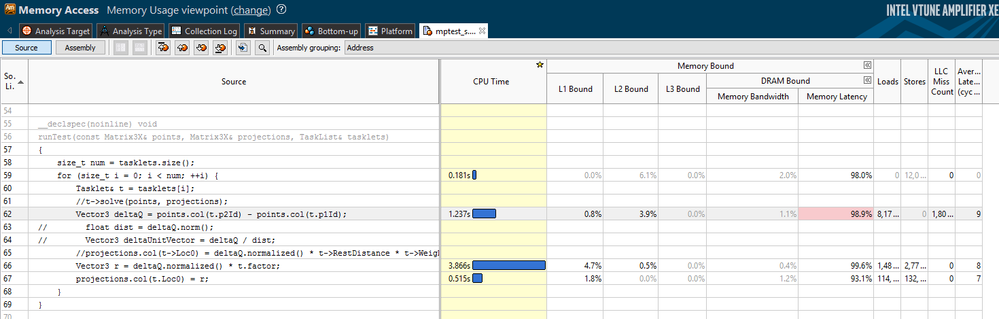

Here is the assembly code along with the source code. Several comments on the code:

- Commented-out lines (131, 135, 137) explain the lines below

- Lines 131, 132: p1 and p2 point to adjacent memory regions (p1 + 1 = p2). In the real application this will not always be the case, but for this test it is.

Yes, you are correct: not all memory reads are from contiguous memory areas. The code accesses three memory buffers: two for reading and one for writing. In each iteration the code

- reads 4*4=16 bytes from the first memory buffer (tasklets)

- reads 2*4 * 4 = 32 bytes from the second memory buffer (points)

- writes 4*4 = 16 bytes to the third memory buffer (projections)
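The per-iteration byte counts listed above can be turned into a rough upper bound on iteration rate for a given sustained DRAM bandwidth. The sketch below is an estimate under stated assumptions, not a measurement: it assumes every fetched byte is actually used (non-contiguous reads pull in whole cache lines, so real traffic may be higher) and ordinary write-back cacheable memory, where a regular store first reads the target cache line and later writes it back, so written bytes count twice unless non-temporal stores are used. The function names are hypothetical.

```c
/* Bytes moved to or from DRAM per loop iteration.
   With ordinary (write-allocate) stores each written line is first
   read into the cache and later written back, so the written bytes
   are counted twice. Non-temporal stores avoid the initial read. */
static double bytes_per_iteration(int non_temporal_stores)
{
    const double tasklets_read     = 4 * 4;      /* 16 bytes */
    const double points_read       = 2 * 4 * 4;  /* 32 bytes */
    const double projections_write = 4 * 4;      /* 16 bytes */
    const double write_cost = non_temporal_stores
                                  ? projections_write
                                  : 2 * projections_write;
    return tasklets_read + points_read + write_cost;
}

/* Upper bound on iterations per second at a sustained bandwidth
   given in bytes per second. */
static double max_iterations_per_second(double bandwidth_bytes_per_s,
                                        int non_temporal_stores)
{
    return bandwidth_bytes_per_s / bytes_per_iteration(non_temporal_stores);
}
```

For example, at 25 GB/sec this gives 25e9 / 80 = 312.5 million iterations per second with regular stores and 25e9 / 64 = about 390.6 million with non-temporal stores, summed over all threads.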

So, given that I am unable to fully load the CPU because of DRAM limitations, is that a reason to perform the computations on a GPU?

Thank you

Ayrat


Assuming your write buffer is not read back soon after it is written, you might want to use non-temporal store instructions for writing data. This might free L1 cache resources for data load operations. Sometimes the "restrict" keyword on function parameters helps the compiler generate the movntps instruction.
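A minimal sketch of writing results with a non-temporal store through the `_mm_stream_ps` intrinsic (which maps to movntps) is shown below. The function `scale_stream` and its computation are placeholders, not the original loop. `_mm_stream_ps` requires the destination address to be 16-byte aligned, and `_mm_sfence` is issued once after the loop so that the streamed data is ordered before any later stores.

```c
#include <stddef.h>
#include <xmmintrin.h>  /* _mm_stream_ps, _mm_sfence */

/* Multiply n floats from src by k and write them to dst using
   non-temporal stores.
   Assumes dst is 16-byte aligned and n is a multiple of 4. */
static void scale_stream(float *dst, const float *src, float k, size_t n)
{
    const __m128 vk = _mm_set1_ps(k);

    for (size_t i = 0; i < n; i += 4) {
        __m128 v = _mm_mul_ps(_mm_loadu_ps(src + i), vk);
        /* movntps: written with a non-temporal hint to limit cache pollution. */
        _mm_stream_ps(dst + i, v);
    }

    /* Order the streamed stores before any subsequent stores. */
    _mm_sfence();
}
```

Non-temporal stores tend to help only when the output is not read again soon; if the written data is consumed right away, regular stores are usually the better choice.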

As for the GPU, I suppose you'll face even more painful memory latency problems, unless you have small sets of data handled by massively parallel and quite small execution kernels.


Thank you for the hint!

movntps (_mm_stream_ps) provided a nearly 10% speedup for the 1-threaded code and a more than 30% speedup for the 2-threaded code.


Would you share profiling results and the asm view screenshot?
