Probably a naive question. I am working with OpenVINO 2022.2. I downloaded the VGG16 model using omz_downloader and converted it to IR using the command

omz_converter --name vgg16

When I run a benchmark comparing my CPU and GPU using the following commands

benchmark_app.exe -m C:\Users\Sami\public\vgg16\FP16\vgg16.xml -d CPU

and

benchmark_app.exe -m C:\Users\Sami\public\vgg16\FP16\vgg16.xml -d GPU

I get the following results

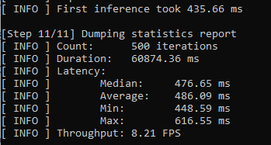

CPU

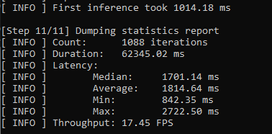

GPU

I am a bit confused by these results. On the CPU, the average latency is lower, yet the throughput is also lower.

With lower latency, shouldn't the throughput be higher, since each result takes less time?

Similarly, on the GPU both the latency and the throughput are higher. Can anyone please explain this?

My CPU is an Intel 6500T and my GPU is an Intel HD Graphics 530.

Nothing fancy.

Hi username_007,

Yes, you’re right. Latency measures the inference time (ms) required to process a single input when inferencing synchronously.

However, when Benchmark App runs with default parameters, it performs inference in asynchronous mode. The reported latency is therefore measured while several inference requests are being processed together, so it is not the time for a single isolated input.

In addition, with default parameters Benchmark App creates 4 inference requests on CPU and 16 inference requests on GPU.

You can re-run Benchmark App with the same number of inference requests on CPU and GPU and compare the results again.

benchmark_app.exe -m C:\Users\Sami\public\vgg16\FP16\vgg16.xml -d CPU -nireq 4

benchmark_app.exe -m C:\Users\Sami\public\vgg16\FP16\vgg16.xml -d GPU -nireq 4
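As a rough sketch of why lower latency does not have to mean higher throughput in asynchronous mode: if a fixed number of requests is always in flight, throughput is approximately the number of parallel requests divided by the per-request latency. The function name and the latency values below are hypothetical, chosen only for illustration; they are not taken from the screenshots above, and the real numbers also depend on batch size and how the device schedules the requests.

```python
def estimated_throughput_fps(latency_ms: float,
                             parallel_requests: int,
                             batch_size: int = 1) -> float:
    """Approximate steady-state throughput (frames per second).

    Assumes `parallel_requests` inference requests are always in flight
    and each one takes `latency_ms` to complete. This is a simplified
    model, not the exact formula used by Benchmark App.
    """
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    return parallel_requests * batch_size * 1000.0 / latency_ms


# Hypothetical numbers, for illustration only.
cpu_fps = estimated_throughput_fps(latency_ms=200.0, parallel_requests=4)
gpu_fps = estimated_throughput_fps(latency_ms=600.0, parallel_requests=16)

print(f"CPU: {cpu_fps:.2f} FPS with 4 requests at 200 ms each")
print(f"GPU: {gpu_fps:.2f} FPS with 16 requests at 600 ms each")
```

In this made-up case the GPU has three times the latency of the CPU but still reaches a higher throughput, because four times as many requests are processed in parallel. With the same `-nireq` value on both devices, the two metrics become directly comparable.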

Regards,

Peh

Hi username_007,

This thread will no longer be monitored since we have provided answers. If you need any additional information from Intel, please submit a new question.

Regards,

Peh
