Hi,

I am wondering whether IPP 8.0 has a way to efficiently compute x·e^(-x^2/a), where a is a positive floating-point number (Ipp32f) and x is a (potentially large) array of type Ipp32f. I currently use IPP 8.0 to compute the element-wise product of x and e^(-x^2/a), with additional function calls to compute the exponent (i.e., -x^2/a). Because of the multiple function calls, evaluating this function has become the bottleneck. The same applies to x / (1 + x^2/a). Are there functions that compute these two formulas directly?
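Written per element, the two target functions share the subexpression x^2/a, so when both are needed, one intermediate buffer holding it can serve both. This only restates the formulas above; the names u, f and g are introduced here for illustration:

$$u_n = \frac{x_n^2}{a}, \qquad f(x_n) = x_n\,e^{-u_n}, \qquad g(x_n) = \frac{x_n}{1 + u_n}, \qquad a > 0.$$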

Best Regards

Edwin

Edwin,

You can combine several functions to compute the value. For example, for x / (1 + x^2/a) you can use the following functions:

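x^2: ippsMul_32f(x, x, y1, ...)

x^2/a: ippsDivC_32f(y1, a, y2, ...)

1 + x^2/a: ippsAddC_32f(y2, 1, y3, ...)

x / (1 + x^2/a): ippsDiv_32f(y3, x, y4, ...) (ippsDiv divides its second source vector by its first, so the divisor y3 comes first)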

Thanks,

Chao

I have implemented it this way. Is there any way to improve it?

```c
Ipp32f* x;          // input array with 1 million elements
Ipp32f* x_buffer;   // working buffer for intermediate results, same length as x
Ipp32f* x_result;   // final result
Ipp32f  kappa;      // sqrt(a) from the formulas above
int     destLength; // number of elements in x

// x * e^(-x^2/a)
m_status = ippsDivC_32f( x, kappa, x_buffer, destLength );   // x/kappa
m_status = ippsSqr_32f_I( x_buffer, destLength );             // x^2/a
m_status = ippsMulC_32f_I( -1.0f, x_buffer, destLength );     // -x^2/a
m_status = ippsExp_32f_I( x_buffer, destLength );             // e^(-x^2/a)
m_status = ippsMul_32f( x_buffer, x, x_result, destLength );  // x * e^(-x^2/a)

// x / (1 + x^2/a)
m_status = ippsDivC_32f( x, kappa, x_buffer, destLength );   // x/kappa
m_status = ippsSqr_32f_I( x_buffer, destLength );             // x^2/a
m_status = ippsAddC_32f_I( 1.0f, x_buffer, destLength );      // 1 + x^2/a
m_status = ippsDiv_32f_I( x_buffer, x, destLength );          // x = x / (1 + x^2/a), overwrites x in place
```

Hi,

Is there any function to compute exp(-x^2) directly (i.e., a Gaussian function)? If IPP has such a function, the rest would be fast.

Best Regards

Edwin

Hi Edwin,

There is no "direct" Gaussian function in IPP. To improve your pipeline, and given that you mentioned "large" arrays in your first post, I suggest processing the data in small pieces, so that the combined length of all the vectors used for one piece fits in your CPU's L1 cache, and then adding an outer loop that steps from one piece to the next over the full vector length. Because loads and stores are nearly free when the data stays in that cache, you will get about the same performance as if all the arithmetic were done in one single function. If you use the Intel SVML library for this purpose, you can get an additional speedup compared with IPP, because SVML doesn't perform parameter checks and can be used with the Intel compiler as a native set of intrinsics (SVML is part of the Intel compiler run-time libraries).

regards, Igor

Hi Igor,

In the manual I found RandGauss, which essentially computes exp(-x^2) with mean = 0, std = 1 for some random variable x. In my case, by comparison, x is given rather than randomly generated. So I guess there should be some implementation of this method.

Best Regards

Edwin

Hi,

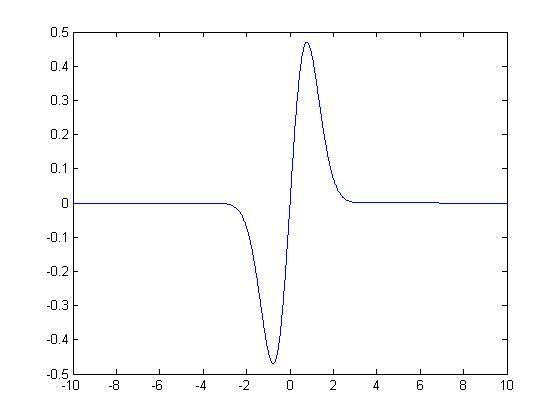

By the way, does anyone know the name of x·e^(-x^2)? I plotted it in Matlab and it looks like an impulse function.
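On the naming question, one identity may help: up to a constant factor, the curve is the first derivative of a Gaussian, so it is an odd function with a positive and a negative lobe. With the scale parameter a from the first post (a = 1 gives the plotted x·e^(-x^2)):

$$\frac{d}{dx}\,e^{-x^2/a} = -\frac{2x}{a}\,e^{-x^2/a} \quad\Longrightarrow\quad x\,e^{-x^2/a} = -\frac{a}{2}\,\frac{d}{dx}\,e^{-x^2/a},$$

$$\frac{d}{dx}\left(x\,e^{-x^2/a}\right) = \left(1 - \frac{2x^2}{a}\right)e^{-x^2/a} = 0 \quad\Longrightarrow\quad x = \pm\sqrt{a/2}.$$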

For x·e^(-x^2/a), multiply by the constant (-1/a) and reuse buffers:

```c
m_status = ippsSqr_32f( x, x_result, destLength );                  // x^2
m_status = ippsMulC_32f_I( -(1.0/a), x_result, destLength );        // -x^2/a: one constant, reuses x_result
m_status = ippsExp_32f_I( x_result, destLength );                   // e^(-x^2/a)
m_status = ippsMul_32f_I( x, x_result, destLength );                // x * e^(-x^2/a)
```

Hopefully the constant term (-1/a) is accurate enough.

OpenMP can be used to run the code in parallel, but only if the x vector is large enough to justify the overhead.

If x can be reduced to integers (16-bit, or better 8-bit), a lookup table might be useful.
