Hi all,

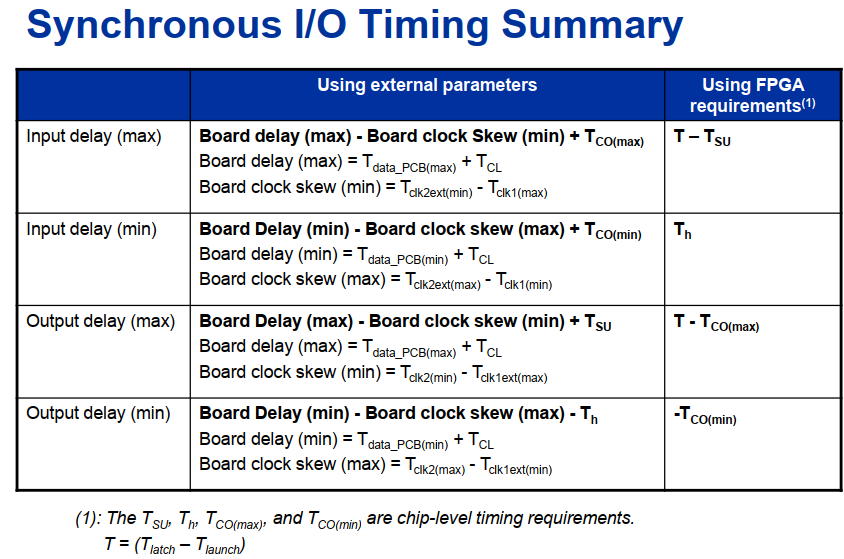

I have a question about timing analysis. For the input delay of a source-synchronous interface, the manual describes two methods: one uses the external device parameters, and the other uses the FPGA requirements.

Using the external input parameters, my calculated max input delay is 1 ns, while using the FPGA requirements it is about 13 ns. The timing analysis results are completely different depending on which method I use.

My question is: since the two methods give completely different input delay values, how does Quartus know which method we used when it runs timing analysis?

BR,

Eric

Can someone help me? I've been waiting online. Thanks!

The calculated values between the two methods should not be so wildly different. What information do you have and what are your calculations?

#iwork4intel

Hi sstrell,

Using the external parameters: input delay max = board delay - board clock skew + Tco = 1 ns.

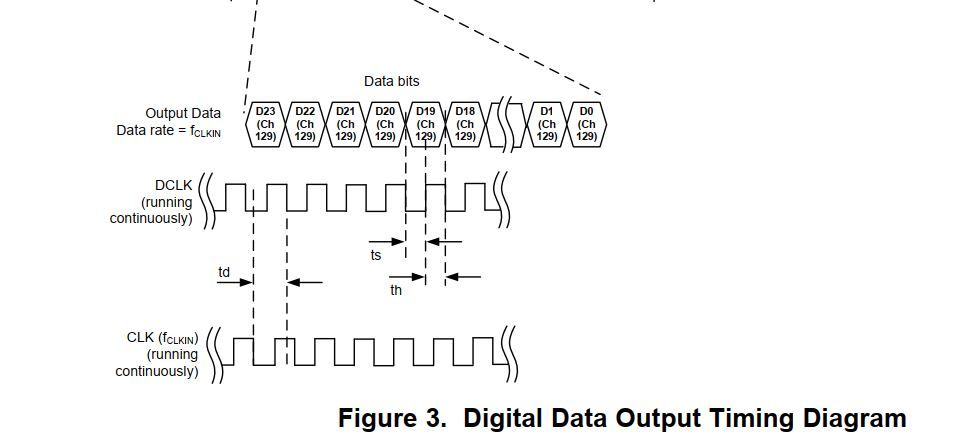

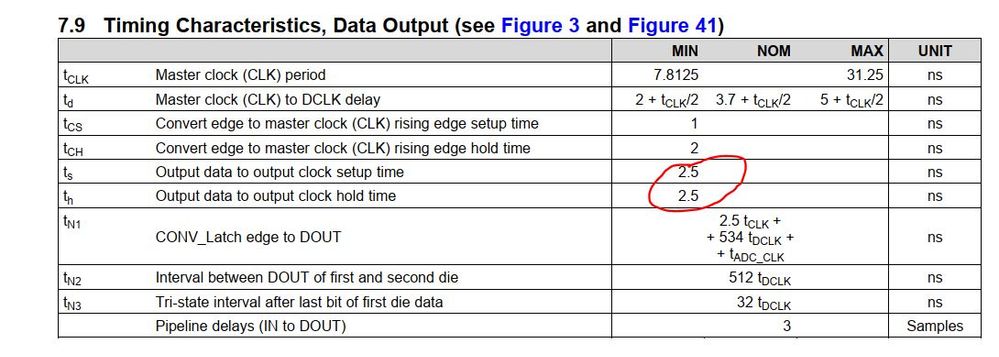
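The external-parameter method above can be written as a small function. This is a minimal sketch, assuming the usual convention that board clock skew is the clock's delay to the FPGA minus its delay to the external device, and that the min case mirrors the max case with the opposite extremes. The class and function names are hypothetical, and all values are in ns.

```python
from dataclasses import dataclass


@dataclass
class ExternalParams:
    """Hypothetical container for board and external-device numbers, in ns."""
    board_delay_min: float  # data trace delay, fastest case
    board_delay_max: float  # data trace delay, slowest case
    clk_skew_min: float     # board clock skew (assumed: FPGA clock delay - device clock delay), smallest
    clk_skew_max: float     # board clock skew, largest
    tco_min: float          # external device clock-to-output, fastest
    tco_max: float          # external device clock-to-output, slowest


def system_centric_input_delay(p: ExternalParams) -> tuple[float, float]:
    """Return (max, min) input delay from external device and board parameters.

    max = board delay max - board clock skew min + Tco max
    min = board delay min - board clock skew max + Tco min
    """
    delay_max = p.board_delay_max - p.clk_skew_min + p.tco_max
    delay_min = p.board_delay_min - p.clk_skew_max + p.tco_min
    return delay_max, delay_min
```

With Tco forced to 0, the result reflects only the board terms, so it is not comparable with numbers derived from the FPGA setup/hold requirement.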

Using the FPGA requirements (clock frequency = 64 MHz, so T = 15.625 ns; Tsu = 2.5 ns): input delay max = T - Tsu = 15.625 - 2.5 = 13.125 ns.
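The FPGA-requirement arithmetic can be checked with a short sketch. It uses the formulas as applied in this thread (max = period - Tsu, min = Th) and assumes Th = 2.5 ns, which is what the 2.5 ns minimum given later in the thread implies. The function name is hypothetical.

```python
def fpga_centric_input_delay(clk_freq_mhz: float, tsu_ns: float, th_ns: float) -> tuple[float, float]:
    """Return (max, min) input delay in ns from the FPGA setup/hold requirement.

    max = clock period - Tsu  (data must arrive Tsu before the next latching edge)
    min = Th                  (min input delay equals the hold requirement)
    """
    period_ns = 1000.0 / clk_freq_mhz  # convert MHz to a period in ns
    return period_ns - tsu_ns, th_ns


# Values from the thread: 64 MHz clock, Tsu = 2.5 ns, Th assumed to be 2.5 ns
delay_max, delay_min = fpga_centric_input_delay(64.0, 2.5, 2.5)
print(f"period = {1000.0 / 64.0} ns, max = {delay_max} ns, min = {delay_min} ns")
# prints: period = 15.625 ns, max = 13.125 ns, min = 2.5 ns
```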

So the two methods give very different results. Is my calculation wrong?

Where is the tco value coming from? I don't see that in the data you've provided. It looks like you are given tS and tH, so 13.125 ns is correct for input delay max. Input delay min would be 2.5 ns.

#iwork4intel

I can't find Tco in the chip datasheet, so I set it to 0. Is that allowed?

No, you can't set it to 0. You have tS and tH, and your calculations from them are correct, so you don't need to worry about the Tco of the external device. You only need to constrain the interface with one method or the other. Just use 13.125 ns and 2.5 ns as I mentioned.
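Quartus does not record which method produced the numbers; the Timing Analyzer only sees the max/min values passed to `set_input_delay`. A minimal sketch that formats the pair as SDC commands is below. The clock name `rx_clk` and the port pattern `rx_data[*]` are hypothetical placeholders for your design's actual clock and data ports.

```python
def input_delay_sdc(clock: str, port: str, delay_max: float, delay_min: float) -> list[str]:
    """Format a max/min input delay pair (ns) as SDC set_input_delay commands.

    The port pattern is wrapped in braces so Tcl does not treat [*] as
    command substitution.
    """
    return [
        f"set_input_delay -clock {clock} -max {delay_max:.3f} [get_ports {{{port}}}]",
        f"set_input_delay -clock {clock} -min {delay_min:.3f} [get_ports {{{port}}}]",
    ]


# Hypothetical names; values are the ones recommended in the reply above
for line in input_delay_sdc("rx_clk", "rx_data[*]", 13.125, 2.5):
    print(line)
# set_input_delay -clock rx_clk -max 13.125 [get_ports {rx_data[*]}]
# set_input_delay -clock rx_clk -min 2.500 [get_ports {rx_data[*]}]
```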

#iwork4intel
