Hello everyone.

I'd like to use the D435i to capture a point cloud/3D map of an object. The distance between the camera and the object would be between 100 and 1000 mm.

What are the best-case and worst-case distances between the captured points? What factors does this depend on?

Best regards,

Imran

The depth accuracy of the 400 Series cameras is within one percent of the distance to the object. So if the camera is 1 meter from the object, the expected accuracy is between 2.5 mm and 5 mm.
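As a rough illustration of that arithmetic, the sketch below turns a camera-to-object distance into an expected accuracy band. The 0.25% and 0.5% factors are assumptions back-calculated from the 2.5 mm to 5 mm example at 1 m, not datasheet values, and the function name is hypothetical.

```python
def expected_error_band_mm(distance_mm, low_fraction=0.0025, high_fraction=0.005):
    """Return the (low, high) expected depth error in mm at a given distance.

    The default fractions are assumptions derived from the example of
    2.5 mm to 5 mm at 1 m; they are not taken from a datasheet.
    """
    if distance_mm <= 0:
        raise ValueError("distance_mm must be positive")
    return distance_mm * low_fraction, distance_mm * high_fraction


# Print the band for a few distances inside the 100-1000 mm working range.
for distance in (100, 400, 1000):
    low, high = expected_error_band_mm(distance)
    print(f"{distance} mm: {low:.2f} mm to {high:.2f} mm")
```

Real results will also depend on lighting, surface texture and calibration, so these numbers are only a starting estimate.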

There are numerous environmental factors that can have an impact on accuracy. Also, in regard to technical factors, the 'RMS error' in the depth readings will increase as the distance from the object increases. It only becomes really noticeable at around the 3 m point though, so you should not experience much accuracy drift if using a distance between 100 and 1000 mm.

Pages 13 and 14 of Intel's excellent illustrated tuning guide provide information and charts about the camera's RMS error factor.
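On the original question about the spacing between captured points: ignoring noise, this is mostly geometry. The sketch below estimates the horizontal gap between neighbouring depth pixels on a flat surface facing the camera. It assumes an ideal pinhole model, a nominal horizontal depth field of view of about 87 degrees for the D435/D435i, and a depth image 848 pixels wide. All three are assumptions to check against your own setup, and the function name is hypothetical.

```python
import math


def point_spacing_mm(distance_mm, width_px=848, hfov_deg=87.0):
    """Approximate gap in mm between adjacent depth pixels at a given distance.

    Assumes an ideal pinhole camera looking straight at a flat surface;
    hfov_deg and width_px are assumed nominal values, not measured ones.
    """
    # Width of the scene covered by the image at this distance.
    scene_width_mm = 2.0 * distance_mm * math.tan(math.radians(hfov_deg / 2.0))
    # Spread that width evenly over the pixel columns.
    return scene_width_mm / width_px


for distance in (100, 500, 1000):
    print(f"{distance} mm: about {point_spacing_mm(distance):.2f} mm between points")
```

Under these assumptions the spacing grows in direct proportion to distance, so the points are densest when the object is close to the camera and most widely spaced at the far end of the range.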

*******

Please also note that the RealSense forum has moved location, so future questions should be posted at the new forum linked to below. Thanks!

https://support.intelrealsense.com/hc/en-us/community/topics

Hi @MartyG,

I work for a logistics company, and we have a project to measure the dimensions of small objects such as USB sticks and lipsticks. Please see my attachment.

I followed this example: https://github.com/IntelRealSense/librealsense/tree/master/wrappers/python/examples/box_dimensioner_multicam I cloned it and ran it successfully, but with two D435i cameras the measured dimensions are not very accurate. The dimensions reported by the two D435i cameras are Length x Width x Height = 1.7 x 7.3 x 2.1 cm, but the real dimensions are 1.5 x 7 x 1.5 cm.

The distance from the cameras to the chessboard is 40 cm.

Could you please advise how to improve the result? Our boss needs more accuracy.

Thank you so much.

Hi @hien_vu, have you changed the size of the chessboard from the 6x9 size suggested in the documentation of the box_dimensioner_multicam example?

My understanding is that if you have changed the size of the chessboard then you should update the parameters in the script.
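As a sketch of what updating those parameters might look like: the names `chessboard_width`, `chessboard_height` and `square_size` are written as best recalled from the demo script and should be verified against your own copy. The assumption that `square_size` is given in metres is also worth checking, and `mm_to_m` and the 20 mm measurement are hypothetical.

```python
def mm_to_m(length_mm):
    """Convert a ruler measurement in millimetres to metres."""
    return length_mm / 1000.0


# Hypothetical ruler measurement of one printed square, in millimetres.
measured_square_mm = 20.0

# Assumed parameter names; check them against your copy of the demo script.
chessboard_width = 6     # number of squares along one side of the board
chessboard_height = 9    # number of squares along the other side
square_size = mm_to_m(measured_square_mm)  # assumed to be expressed in metres

# Sanity check: a printed square is normally between 5 mm and 100 mm wide.
assert 0.005 <= square_size <= 0.1, "square_size looks wrong; is it in metres?"
print(chessboard_width, chessboard_height, square_size)
```

Measuring the printed square with a ruler matters because printers often rescale the page, and any error in the square size would scale every measured dimension by the same factor.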

Hi @MartyG,

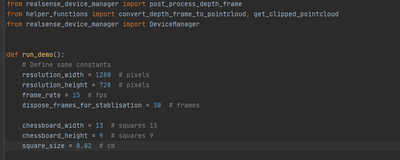

Yes, I have changed it to 13 x 9 and square_size = 0.02 (cm)

I have also tested with a bigger object, such as the RealSense box: the result is 10 x 15 x 6.6 but the real size is 9 x 14 x 5.
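To put the two reported tests on a common footing, the sketch below computes the relative error of each measured dimension against the real one. The figures are the ones quoted in this thread. The units of the second test are not stated, so only ratios are used, and the function name is hypothetical.

```python
def percent_errors(measured, actual):
    """Return the signed relative error in percent for each dimension."""
    return [100.0 * (m - a) / a for m, a in zip(measured, actual)]


# Length, width, height as reported in the thread.
small_object = percent_errors((1.7, 7.3, 2.1), (1.5, 7.0, 1.5))
larger_box = percent_errors((10.0, 15.0, 6.6), (9.0, 14.0, 5.0))

for name, errors in (("small object", small_object), ("larger box", larger_box)):
    formatted = ", ".join(f"{e:+.1f}%" for e in errors)
    print(f"{name}: L, W, H error = {formatted}")
```

In both tests every dimension is overestimated, and the height shows the largest relative error.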

I note in your script that you are using 1280x720 resolution. The optimal resolution for best depth accuracy with the D435 / D435i models is 848x480.
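A minimal sketch of requesting that depth resolution through the `pyrealsense2` wrapper is shown below. The 30 fps frame rate is an assumption, both helper function names are hypothetical, and the demo script may set its resolution through its own constants instead. The SDK import is kept inside the function so that the settings can be inspected without a camera attached.

```python
def depth_stream_settings(width=848, height=480, fps=30):
    """Collect the depth stream settings; fps is an assumed value."""
    return {"width": width, "height": height, "fps": fps}


def start_depth_pipeline(settings):
    """Start a depth stream. Needs pyrealsense2 and a connected camera."""
    import pyrealsense2 as rs  # imported lazily so this file loads without the SDK

    pipeline = rs.pipeline()
    config = rs.config()
    # Request 16-bit depth frames at the chosen resolution and frame rate.
    config.enable_stream(
        rs.stream.depth,
        settings["width"],
        settings["height"],
        rs.format.z16,
        settings["fps"],
    )
    pipeline.start(config)
    return pipeline


settings = depth_stream_settings()
print(settings)
```

With a camera connected, `start_depth_pipeline(settings)` would return a running pipeline, which should be stopped with `pipeline.stop()` when finished.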

Hi @MartyG,

I have reconfigured the resolution to 848x480, but the result is still not more accurate. You can check the image below:

So if 100% accuracy is not possible, could you please tell me what percentage accuracy we can expect, so that I can report it to my manager?

Thanks

It is not possible to achieve 100% accuracy, as the error factor (RMS Error) starts at around 0 at the camera lens and increases linearly over distance. It is expected that accuracy will be less than 1% of the distance of the observed object from the camera. For example, at 1 meter range this may convert to an expected accuracy of 2.5mm to 5mm. There are various factors, including environment and lighting, that can affect accuracy though.

The correct method is to start the program without any objects on the chessboard and allow the cameras to calibrate, and then only place the object on the chessboard when the program asks you to. Are you using the program this way, please?

Hi @MartyG ,

Yes. I start the program without any object on the chessboard, and after all calibrations finish the program shows the command-line prompt: place the box in the FOV of the view... Am I using it correctly?

One more question: what about small objects such as lipsticks and USB sticks? With the normal setup the program cannot detect them. Do you have any ideas for measuring their dimensions?

Thank you

The procedure that you describe is correct.

For the next test, please try aligning the box so that its edges line up with the squares instead of having the box point diagonally across the chessboard.

The cameras can measure objects the size of a USB stick or lipstick, but the box_dimensioner_multicam program was designed for measuring boxes, not objects that small.

The number of example RealSense programs for taking measurements is very limited. If you are able to use Windows or Android, it may be worth considering professional commercial RealSense 400 Series compatible software that has measuring functions, such as DotProduct Dot3D Scan:
